Key Points

- Understanding Azure Blob Storage Tiers: Learn how Hot, Cool, and Archive tiers differ in cost, performance, and access frequency to align with client backup needs and SLAs.

- Define Recovery Objectives (RTO/RPO): Map workloads, access patterns, and acceptable recovery times to ensure each Azure Blob Storage tier supports business continuity.

- Apply Immutability and Retention: Protect backups from tampering and ransomware by enforcing time-based immutability and legal hold policies for compliance and audit readiness.

- Automate Lifecycle Management: Use Azure lifecycle rules to automatically move data between Blob tiers, maintaining cost efficiency and reliable recovery performance.

- Monitor Costs and Governance: Track Azure Blob Storage usage, early deletion, and retrieval fees to prevent overspending while maintaining compliance and transparency.

- Integrate with NinjaOne for Automation: Combine NinjaOne’s automation and reporting tools with custom Azure CLI or PowerShell scripts to collect metrics, alert on critical events, and deliver client-ready reports.

With multiple Azure Blob Storage tiers to choose from, it’s easy to select one that doesn’t reflect your retention and access needs. This can lead to overspending or accidental data loss, both of which affect MSP service delivery.

It’s important to know which tier fits your client’s backup goals and how to automate tier changes as those goals shift. This guide shows you ways to balance speed, retention, and data resilience so your backups are ready when disaster strikes.

Recommended steps to find the right Azure Blob storage tier

Azure Blob Storage is Microsoft’s cloud-based object storage service designed to handle massive amounts of unstructured data. Instead of manually managing disks or file servers, you upload data as blobs into containers within a storage account.

Azure Blob Storage’s access tiers make it practical to store a wide range of data, from daily backups to long-term archives. The challenge most MSPs and IT teams face is choosing which storage tier provides the best balance of performance, protection, and price.

If you’re unsure where to start, the following steps will help you choose the right tier for your backup data.

📌Prerequisites:

- Defined RTO and RPO per backup set

- Azure subscription with a storage account for backups

- Permissions to manage containers and policies

- Backup platform that supports Azure Blob as a target

- Ability to restore from tiered data

- A workspace for monthly evidence exports and cost reviews

Step #1: Define recovery objectives and access pattern requirements

Defining recovery objectives and access patterns helps guide which Azure Blob Storage tier is best for your existing client SLAs. Without identifying these elements, you risk mismatching backups to the wrong tier, driving up costs or slowing down restores during incidents.

It’s important that technicians align backup and restore objectives with how Azure Blob handles data across its hot, cool, and archive tiers. Understanding what’s acceptable according to your current recovery objectives and access requirements ensures your choice supports your RTO and RPO promises to clients.

List workloads, backup frequencies, and recovery objectives

Map out critical systems, such as file servers, SQL databases, and SaaS connectors, with their backup intervals and restore goals. This helps identify which datasets require faster access and which can tolerate delays.

Review the access frequency of recent and older restore points

This reveals the actual access patterns of backups. For example, if most restores use restore points from the last 30 days, those backups belong in the hot or cool tier. Older, rarely accessed restore points can safely move to the archive tier.
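
As a rough illustration of this review, the sketch below measures what share of restores used a restore point no older than a given age. The `restore_log` data and the `share_within` helper are hypothetical; in practice the dates would come from your backup platform’s restore history.

```python
from datetime import date

# Hypothetical restore log: (date of the restore request, date of the restore point used).
restore_log = [
    (date(2024, 6, 10), date(2024, 6, 8)),
    (date(2024, 6, 12), date(2024, 5, 30)),
    (date(2024, 6, 20), date(2024, 6, 19)),
    (date(2024, 6, 25), date(2024, 2, 1)),
]


def share_within(log, max_age_days):
    """Return the fraction of restores whose restore point was at most max_age_days old."""
    if not log:
        return 0.0
    recent = sum(
        1 for requested, point in log if (requested - point).days <= max_age_days
    )
    return recent / len(log)


print(f"{share_within(restore_log, 30):.0%} of restores used a point from the last 30 days")
```

If the share for your chosen window is high, restore points inside that window are the ones to keep in faster tiers.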

Decide the maximum acceptable retrieval delay

Each Blob tier has different access times, which may cause noticeable recovery delays. Hot and cool data is available immediately, while blobs in the archive tier are offline and must be rehydrated first, which can take up to roughly 15 hours at standard priority. Knowing the acceptable retrieval delay ensures you don’t violate SLAs or surprise clients with extended downtime.
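
One way to apply this check is to compare each workload’s RTO against an assumed worst-case retrieval delay per tier. The figures in `ASSUMED_RETRIEVAL_DELAY_HOURS` are illustrative assumptions (the archive value reflects standard-priority rehydration); replace them with the timings you measure in your own restore tests.

```python
# Assumed worst-case retrieval delays in hours before a restore can start.
# These are illustrative values, not guarantees; adjust them to your own test results.
ASSUMED_RETRIEVAL_DELAY_HOURS = {"hot": 0, "cool": 0, "archive": 15}


def tiers_within_rto(rto_hours, restore_hours):
    """List tiers whose retrieval delay plus restore time still fits the RTO."""
    return [
        tier
        for tier, delay in ASSUMED_RETRIEVAL_DELAY_HOURS.items()
        if delay + restore_hours <= rto_hours
    ]


# A workload with a 4-hour RTO and a 2-hour restore cannot tolerate archive.
print(tiers_within_rto(rto_hours=4, restore_hours=2))
# A workload with a 24-hour RTO can use any tier under these assumptions.
print(tiers_within_rto(rto_hours=24, restore_hours=2))
```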

Step #2: Map backups to the appropriate Azure Blob Storage tier

After documenting backup workloads and their recovery requirements, the next step is to match each backup dataset to the tier that meets client demands. Mapping backups to the appropriate storage tier ensures that your storage strategy matches your access requirements.

Proper mapping keeps recent data recovery fast and long-term data storage cheap without compromising compliance. It also prevents backups from ending up in the wrong tier, which can cause unnecessary spending or recovery delays for clients.

Below is our recommended action plan:

- Use hot storage for frequently accessed data: Put recent backups, ongoing projects, or frequently restored data into hot storage tiers for quick recovery or rollbacks.

- Use cool for medium-term retention: Cool storage can bridge the gap between frequent access and deep archive, serving as a cost-effective choice for monthly or quarterly backups.

- Archive long-term data: Reserve archive storage for data you rarely touch, like yearly snapshots or compliance copies. Remember to store data here only when slow restores are acceptable.

💡 Tip: Avoid overengineering tier maps. Instead, start with a clear rule of thumb, then adjust as you observe client recovery behavior and cost reports.
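
A rule of thumb like the one above can be written down as a small function. This is a minimal sketch, assuming age-based thresholds of 30 days in hot and a further 90 days in cool; the `tier_for_age` name and the default values are illustrative, not recommendations from Azure.

```python
def tier_for_age(age_days, hot_days=30, cool_days=90):
    """Map a restore point's age to a tier using simple age thresholds."""
    if age_days < 0:
        raise ValueError("age_days must not be negative")
    if age_days <= hot_days:
        return "hot"
    if age_days <= hot_days + cool_days:
        return "cool"
    return "archive"


for age in (7, 45, 400):
    print(age, "days ->", tier_for_age(age))
```

Keeping the thresholds as parameters makes it easy to adjust them per client once cost reports and restore behavior suggest a change.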

Step #3: Add immutability and retention to Azure Blob Storage backups

Ensuring that data inside backups isn’t compromised, altered, deleted, or encrypted by attackers is crucial for any backup tiering strategy. Locking backups inside Azure Blob Storage protects them from modifications, minimizing the risk of ransomware, insider threats, or accidental deletions.

Immutability guarantees data integrity, while retention policies help maintain compliance. This step ensures that your backups are stored long enough to meet SLA requirements.

Assign retention periods per container to enable immutability

Set a time-based retention policy per container that matches your client’s data retention policy, such as 30, 90, or 365 days. Once the policy is applied, blobs in that container cannot be modified or deleted until their retention period expires.

Use legal hold to extend immutability outside normal retention periods

Sometimes data must be preserved beyond normal retention periods, for instance during a legal review or audit. Apply a legal hold to keep backups immutable until you remove the hold, even if the retention window has passed.

Verify the immutability status of backups in containers

Confirm that immutability settings are active after setting a retention period or applying a legal hold to backups. Capture details like policy IDs, timestamps, and retention settings in your evidence documentation to prove due diligence to clients.
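
The interaction between a time-based retention period and a legal hold can be modeled in a few lines. This is a simplified sketch of the rules described above, not a call to any Azure API: the `can_delete` helper is hypothetical and assumes the retention interval is counted from the blob’s creation date.

```python
from datetime import date, timedelta


def can_delete(created, retention_days, legal_hold, today):
    """Return True only when the retention period has elapsed and no legal hold is set."""
    retention_ends = created + timedelta(days=retention_days)
    return today >= retention_ends and not legal_hold


created = date(2024, 1, 1)
# Still inside the 90-day retention window: deletion is blocked.
print(can_delete(created, 90, legal_hold=False, today=date(2024, 3, 1)))
# Retention has elapsed, but a legal hold keeps the backup immutable.
print(can_delete(created, 90, legal_hold=True, today=date(2024, 6, 1)))
# Retention has elapsed and no hold is in place: deletion is allowed.
print(can_delete(created, 90, legal_hold=False, today=date(2024, 6, 1)))
```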

Step #4: Automate the lifecycle management of blobs

Azure Blob Storage supports automated lifecycle management, which improves cost efficiency and reduces potential downtime. It also minimizes manual intervention, saving technicians hours of moving data between tiers by hand.

Automation also keeps lifecycle management consistent across clients. This reduces human error and ensures that data moves across tiers automatically while maintaining a balance between cost and readiness.

Automate data movement between Azure Blob Storage tiers

Determine the typical duration for which data remains active within an environment. For example, if restores occur within 30 days, configure Azure to move data to Cool storage after that window, and to Archive after another 90 or 180 days.

Add buffer days to avoid premature tier transitions

Include a buffer of a few days or weeks after your normal restore window before moving data to another storage tier. Keeping recent restore points out of slower tiers a little longer gives clients enough time for last-minute access.

Exclude critical backups from tier transfer workflows

Some backups, such as critical application images or compliance-specific restores, should remain immediately recoverable at all times. Determine which data should be excluded from tier transfer workflows to ensure business continuity and SLA compliance.
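
The three practices above can be combined into one lifecycle management policy. The sketch below builds the policy as JSON in the shape Azure lifecycle rules use (`tierToCool`, `tierToArchive`, `daysAfterModificationGreaterThan`, `prefixMatch`). The rule name, container prefixes, and day counts are hypothetical, and critical backups are excluded simply by keeping them under a prefix the rule does not match.

```python
import json


def build_lifecycle_policy(prefixes, restore_window_days=30, buffer_days=7,
                           archive_after_days=90):
    """Build a lifecycle policy that tiers only blobs under the given prefixes."""
    cool_after = restore_window_days + buffer_days  # restore window plus buffer
    archive_after = cool_after + archive_after_days  # further wait before Archive
    return {
        "rules": [
            {
                "enabled": True,
                "name": "tier-backups",  # hypothetical rule name
                "type": "Lifecycle",
                "definition": {
                    "filters": {
                        "blobTypes": ["blockBlob"],
                        # Only these prefixes are tiered; anything else is left alone.
                        "prefixMatch": list(prefixes),
                    },
                    "actions": {
                        "baseBlob": {
                            "tierToCool": {
                                "daysAfterModificationGreaterThan": cool_after
                            },
                            "tierToArchive": {
                                "daysAfterModificationGreaterThan": archive_after
                            },
                        }
                    },
                },
            }
        ]
    }


# Hypothetical layout: critical images live under "backups/critical/" and are not listed.
policy = build_lifecycle_policy(["backups/fileserver/", "backups/sql/"])
print(json.dumps(policy, indent=2))
```

The resulting JSON can be reviewed and versioned per client before it is applied to a storage account through the portal, CLI, or PowerShell.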

Step #5: Document restore and retrieval workflows

Run test restores and retrievals across different tiers to confirm that Azure Blob Storage performance meets your clients’ RTO and RPO targets. Capture test metrics to understand rehydration and recovery speed for each tier.

With proper documentation, you can show that backup service delivery meets SLAs. You’ll also have tangible evidence to present to auditors, clients, or internal stakeholders.

Document restoration procedures per tier and tool

Different tools and tiers have unique restoration procedures. Document how restores are initiated (for instance, via the Azure portal, CLI, or backup software integrations) and what each procedure requires.

This ensures that workflow duration for each tier is accounted for, including the rehydration process, expected delays, and any staging areas before restoration.

Account for rehydration workflows and target locations for Archive data

Archive data requires a rehydration phase that can take anywhere from under an hour to many hours, depending on blob size and the rehydration priority you choose. Define which storage account or region the rehydrated data is sent to, and log each test or live restore in your documentation system for traceability.

Record restore timings by workload

Each test should produce a measurable outcome regarding the duration of recovery procedures. Record these results and compare them against your clients’ recovery targets. These metrics will guide your recovery plan and provide evidence during reviews that your Blob storage configuration is SLA-compliant.
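
A simple record per test restore makes the comparison against recovery targets repeatable. The `RestoreTest` structure, the `summarize` helper, and the sample figures below are hypothetical; they only illustrate how measured durations can be checked against each workload’s RTO.

```python
from dataclasses import dataclass


@dataclass
class RestoreTest:
    workload: str
    tier: str
    duration_minutes: float  # measured end to end, including any rehydration
    rto_minutes: float       # the client's recovery time objective

    @property
    def meets_rto(self):
        return self.duration_minutes <= self.rto_minutes


def summarize(tests):
    """Return one readable line per test for the evidence documentation."""
    return [
        f"{t.workload} ({t.tier}): {t.duration_minutes:.0f} min "
        f"vs RTO {t.rto_minutes:.0f} min -> {'PASS' if t.meets_rto else 'FAIL'}"
        for t in tests
    ]


tests = [
    RestoreTest("file-server", "hot", 35, 60),
    RestoreTest("sql-db", "cool", 90, 120),
    RestoreTest("yearly-snapshot", "archive", 960, 720),
]
for line in summarize(tests):
    print(line)
```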

Step #6: Monitor costs and guardrails of Azure Blob Storage backups

As clients expand their operations, new workloads emerge, access patterns shift, and restores become more frequent. Monitoring costs and guardrails ensures storage plans remain financially sustainable while still meeting client SLAs.

This step helps you control costs without compromising client data access and recoverability. It also tracks how tenants use your Blob tiers, helping you catch anomalies early and adjust policies before they put SLA compliance at risk.

💡 Tip: This step involves reviewing costs and fees. Having an end-to-end business management solution like NinjaOne PSA can be helpful as both business and IT processes are combined in a streamlined platform.

Review monthly storage and retrieval bills

Leverage Azure Cost Management or a separate professional services automation (PSA) solution to verify if your monthly spending matches your expected tier breakdown. If costs unexpectedly rise, review user workflows to identify which tenants are driving extra storage or retrieval activity.

Flag early deletion or unexpected retrievals

In Azure Blob Storage, early deletion fees apply when data is moved or deleted before a tier’s minimum storage duration has elapsed (30 days for Cool and 180 days for Archive at the time of writing). Frequent rehydration and retrieval across tiers can also add charges for MSPs.

Spikes in retrieval or deletion charges may signal aggressive tier changes and archiving. Adjusting your blob lifecycle management by extending retention within Hot and Cool tiers or delaying Archive transitions can help mitigate costs.
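
To see how early deletion affects a bill, the sketch below prorates the charge over the days remaining in a tier’s minimum storage duration. The minimum durations, the per-GB price, and the 30-day month used for conversion are assumptions for illustration; check current Azure pricing for real figures.

```python
# Assumed minimum storage durations in days; Hot has no minimum.
MIN_STORAGE_DAYS = {"hot": 0, "cool": 30, "archive": 180}


def early_deletion_charge(tier, days_stored, size_gb, price_per_gb_month):
    """Estimate the prorated charge for leaving a tier before its minimum duration."""
    remaining_days = max(0, MIN_STORAGE_DAYS.get(tier, 0) - days_stored)
    # Approximate a month as 30 days to convert the monthly price to a daily one.
    return size_gb * price_per_gb_month * remaining_days / 30


# 1,000 GB deleted from Archive after 45 days, at a hypothetical 0.002 per GB per month.
print(early_deletion_charge("archive", 45, 1000, 0.002))
```

Running this across planned lifecycle changes shows whether a shorter Archive transition actually saves money once early deletion is included.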

Deliver a simple cost forecast per client during QBRs

Create a projection for each client to gather insights on their expected storage spending over the next few months. Share key takeaways during QBRs, such as where costs are stable, rising, or improving. This demonstrates that you’re closely monitoring costs and maintaining efficient client backups.
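
A per-client projection does not need to be elaborate. The sketch below extends the average month-over-month change from past bills; the `forecast` and `trend` helpers and the sample costs are hypothetical, and a straight-line projection is only one simple way to estimate future spending.

```python
def forecast(monthly_costs, months_ahead=3):
    """Project future monthly costs from the average month-over-month change."""
    if len(monthly_costs) < 2:
        raise ValueError("need at least two months of history")
    avg_change = (monthly_costs[-1] - monthly_costs[0]) / (len(monthly_costs) - 1)
    return [round(monthly_costs[-1] + avg_change * i, 2)
            for i in range(1, months_ahead + 1)]


def trend(monthly_costs, tolerance=1.0):
    """Label the history as rising, improving, or stable for a QBR summary."""
    change = monthly_costs[-1] - monthly_costs[0]
    if change > tolerance:
        return "rising"
    if change < -tolerance:
        return "improving"
    return "stable"


history = [100.0, 110.0, 120.0]  # hypothetical monthly storage bills for one client
print(trend(history), forecast(history))
```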

Step #7: Govern access and changes across Azure Blob Storage tiers

Allowing too many technicians to have unchecked access can diminish the integrity of your Azure Blob setup. This can also lead to governance and trust issues, especially if changes across tenants go unmonitored.

Proper access governance prevents accidental edits, tracks changes, and leaves a clear audit trail, ensuring transparency and accountability. This enables your Azure Blob Storage to become a controlled, compliant, and auditable backup strategy.

Log container edits, access, and restores

Set up logging in Azure to capture every configuration change and access attempt. The Azure Activity Log records management changes such as edits to lifecycle rules, while resource logs enabled through diagnostic settings on the storage account record blob reads, writes, and deletes. Whether someone edits a lifecycle rule, accesses an immutable container, or triggers a restore, the event should be recorded automatically.

By logging these events, you create a traceable record that preserves evidence of suspicious activity for audits or investigations.

Review access by role and rotate credentials on a schedule

It’s important to ensure that only authorized users have access to sensitive storage accounts or Blob containers. Conduct quarterly permission reviews to spot and remove deprovisioned and inactive accounts. Rotate shared keys or credentials regularly to reduce the risk of misuse or insider threats that can lead to breaches.
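
A scheduled review can flag stale accounts and overdue key rotations automatically. In this sketch the account records, field names, and 90-day thresholds are hypothetical; real data would come from your identity provider and storage account key metadata.

```python
from datetime import date


def review_access(accounts, today, max_inactive_days=90, max_key_age_days=90):
    """Return (account name, finding) pairs for inactive accounts and old keys."""
    findings = []
    for acct in accounts:
        if (today - acct["last_sign_in"]).days > max_inactive_days:
            findings.append((acct["name"], "inactive"))
        if (today - acct["key_rotated"]).days > max_key_age_days:
            findings.append((acct["name"], "rotate key"))
    return findings


accounts = [
    {"name": "tech-a", "last_sign_in": date(2024, 6, 1), "key_rotated": date(2024, 5, 1)},
    {"name": "tech-b", "last_sign_in": date(2024, 1, 15), "key_rotated": date(2023, 12, 1)},
]
print(review_access(accounts, today=date(2024, 6, 30)))
```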

Attach logs and policy snapshots within your evidence documentation

Collect a summary of recent policy changes, access logs, and configuration snapshots, and include them in client-facing documentation for QBRs. This gives clients concrete proof that governance controls and data protection policies are in place to safeguard their data.

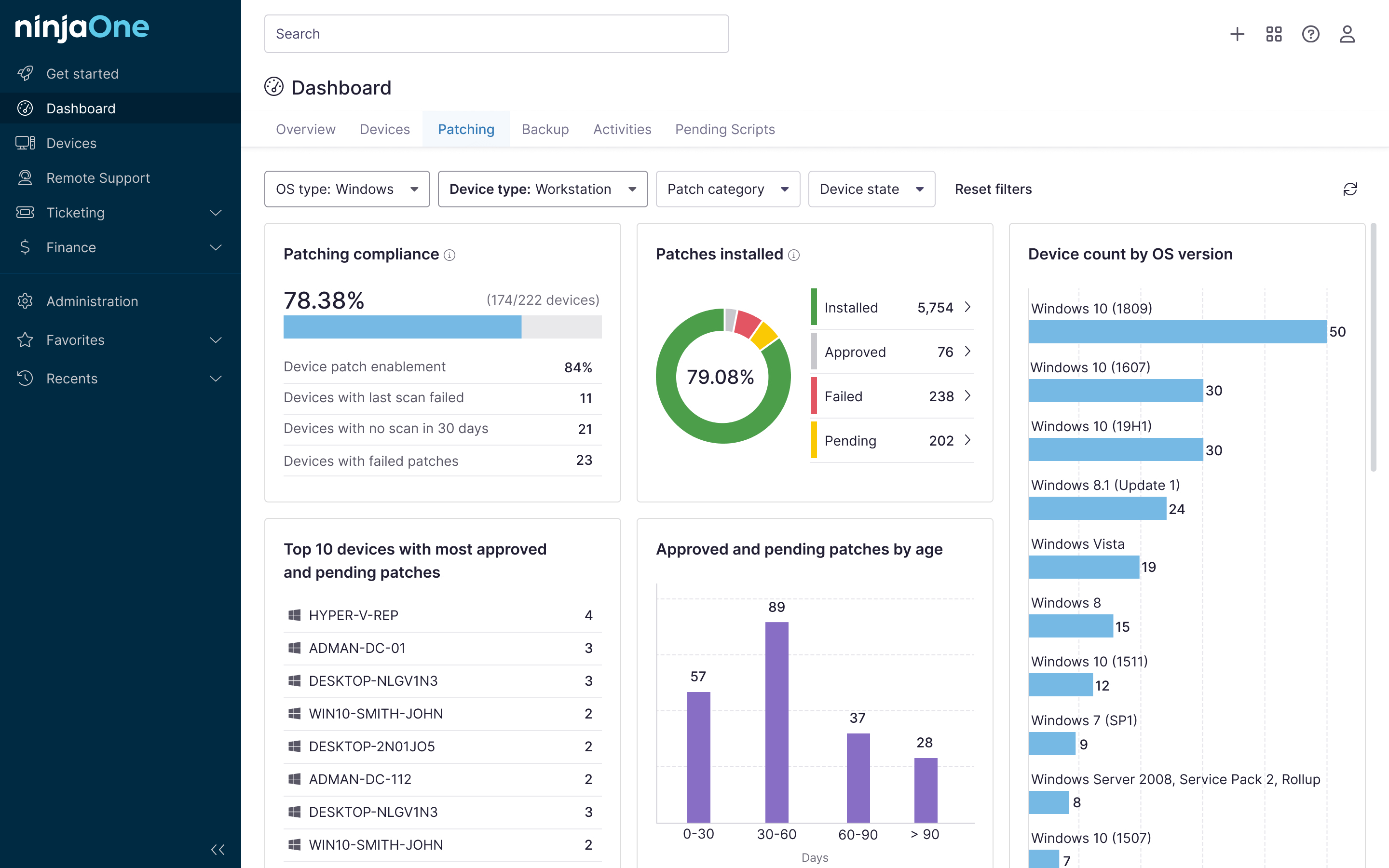

Integrating Azure Blob Monitoring with NinjaOne automation

NinjaOne serves as an orchestration layer for Azure Blob monitoring by running scripted checks, collecting evidence, and transforming results into alerts and reports. While NinjaOne offers a robust automation framework, all Azure Blob-specific data is retrieved through the use of custom scripts.

- Automated evidence collection: Create and schedule custom scripts to query Azure Blob tier information, such as cost summaries and restore test results, within pre-defined intervals.

- Monitoring and alerting: Attach notification channels to evidence collection and restore test scripts to quickly alert technicians when critical backup events occur, such as missing immutability policies.

- NinjaOne reporting tool: Collect backup script-generated metrics, like success rate, average restore time, and tier distribution per client, and transform them into client-facing reports using built-in templates.
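
The kind of custom script described above might aggregate check results as follows. This is a sketch under stated assumptions: the input records and field names are hypothetical, no NinjaOne or Azure API is called, and it assumes the platform running the script can alert on a non-zero exit code.

```python
from collections import Counter


def summarize_checks(results):
    """Aggregate per-backup-set check results into report-ready metrics."""
    total = len(results)
    restore_times = [r["restore_minutes"] for r in results if r["restore_ok"]]
    return {
        "success_rate": len(restore_times) / total if total else 0.0,
        "avg_restore_minutes": (
            sum(restore_times) / len(restore_times) if restore_times else None
        ),
        "tier_distribution": dict(Counter(r["tier"] for r in results)),
        "missing_immutability": [r["name"] for r in results if not r["immutable"]],
    }


def exit_code_for(summary):
    """Return 1 when any backup set lacks an immutability policy, otherwise 0."""
    return 1 if summary["missing_immutability"] else 0


# Hypothetical results gathered by earlier scripted checks.
sample = [
    {"name": "fileserver", "tier": "hot", "restore_ok": True,
     "restore_minutes": 20, "immutable": True},
    {"name": "sql", "tier": "cool", "restore_ok": True,
     "restore_minutes": 40, "immutable": False},
    {"name": "yearly", "tier": "archive", "restore_ok": False,
     "restore_minutes": None, "immutable": True},
]
summary = summarize_checks(sample)
print(summary, "exit code:", exit_code_for(summary))
```

A scheduled script would pass the result of `exit_code_for` to `sys.exit` so that a missing immutability policy surfaces as a failed run.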

Match Azure Blob Storage tier to client RTO and RPO

Matching your Azure Blob Storage tiers to actual client RTO and RPO needs keeps spending in line with recovery objectives. You can achieve this by enforcing immutability, automating lifecycle management, and regularly validating backup integrity. Incorporate cost and change governance so your Azure Blob strategy meets client recovery goals without compromising auditability or budgets.
