Key Points

- Remove ROT data before differential backups to reduce backup time, shrink datasets, lower storage consumption, and improve restore accuracy.

- Follow a defined process so teams can safely quarantine or exclude low-value data, streamline differentials, and keep RPO/RTO targets aligned across evolving workloads.

- Automate telemetry, efficiency reporting, ROT detection, and exception handling to ensure ongoing improvement.

- Regularly refine rules using backup analytics to prevent ROT creep, provide durable performance gains, and support audit-ready, data-driven operations.

Redundant, Obsolete, or Trivial (ROT) data is information that is no longer needed or relevant. Keeping it in scope only inflates backup sets, slows down differentials, and wastes storage space. Identifying and handling ROT before the backup window leads to shorter jobs, lower costs, and less noise in restores, without putting compliance or recovery objectives at risk.

Cutting backup time and storage costs by eliminating ROT data

You can reduce backup time and storage costs by removing ROT data before differential jobs. To do so, measure the baseline, define ROT criteria, build a pre-backup ROT pipeline, tune backup scopes and cadence, validate with restore-focused checks, and iterate with feedback.

📌 Prerequisites:

- Data classification and retention policy references

- Read access to file metadata and activity logs

- Change management for quarantine, exclusions, and deletes

- Backup reporting that includes job duration, size, failed items, and top paths

Method 1: Measure the baseline

Start by measuring how much ROT you have and how it affects backup performance.

📌 Use Case: A team sees nightly differentials creeping longer. A quick baseline reveals a few paths packed with stale media, duplicates, and outdated project files, driving most of the growth.

Capture key backup metrics, such as backup size per repository, job duration, differential size growth, and storage growth trends. These measurements give you a reference point for validating the impact of the ROT cleanup later. Then analyze telemetry to identify the most significant contributors to ROT-related inefficiencies.
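The following Python is a minimal sketch of the baseline step. It groups backup job records by repository, summarizes average duration and differential growth, and ranks the largest contributors. The `JobRecord` fields and the assumption that records arrive in chronological order are hypothetical. Map them to whatever your backup reporting actually exports.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class JobRecord:
    # Hypothetical record shape; adapt to your backup report export.
    repository: str
    duration_min: float     # wall-clock job duration in minutes
    differential_gb: float  # size of the differential set in GB


def baseline(records):
    """Summarize average duration and differential growth per repository.

    Assumes records are in chronological order within each repository.
    """
    by_repo = {}
    for record in records:
        by_repo.setdefault(record.repository, []).append(record)

    summary = {}
    for repo, jobs in by_repo.items():
        summary[repo] = {
            "avg_duration_min": mean(j.duration_min for j in jobs),
            # Growth between the first and last differential in the sample window.
            "diff_growth_gb": jobs[-1].differential_gb - jobs[0].differential_gb,
        }
    return summary


def top_contributors(summary, n=3):
    """Rank repositories by differential growth, largest first."""
    ranked = sorted(summary, key=lambda repo: summary[repo]["diff_growth_gb"], reverse=True)
    return ranked[:n]
```

Keep the summary from this first run. Re-running the same calculation after cleanup gives a like-for-like comparison.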

Next, estimate the ROT percentage using low-risk heuristics:

- Age: Files that have not been modified within a defined period

- Last access time: Rarely or never accessed data

- Duplication: Multiple copies of the same content

- Trivial types: Low-value file extensions
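The four heuristics above can be combined into a rough ROT estimate. The sketch below walks a directory tree and reports the fraction of bytes that match any heuristic. `STALE_DAYS` and `TRIVIAL_EXTENSIONS` are hypothetical values, not recommendations.

Two caveats apply:
- Last-access times are unreliable on volumes mounted with `noatime` or `relatime`.
- Hashing every file is expensive. A production scan would usually compare file sizes first and hash only the size collisions.

```python
import hashlib
import time
from pathlib import Path

# Hypothetical thresholds; tune them to your retention policy.
STALE_DAYS = 365
TRIVIAL_EXTENSIONS = {".tmp", ".cache", ".log", ".thumb"}


def file_digest(path, chunk_size=1 << 20):
    """Hash file contents so identical copies can be detected."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for block in iter(lambda: handle.read(chunk_size), b""):
            digest.update(block)
    return digest.hexdigest()


def estimate_rot_ratio(root, now=None):
    """Return the fraction of bytes under `root` that match any ROT heuristic."""
    now = time.time() if now is None else now
    cutoff = now - STALE_DAYS * 86400
    seen_digests = set()
    total_bytes = rot_bytes = 0

    for path in Path(root).rglob("*"):
        if not path.is_file():
            continue
        stat = path.stat()
        total_bytes += stat.st_size

        # Age and last access: neither modified nor read since the cutoff.
        stale = stat.st_mtime < cutoff and stat.st_atime < cutoff
        # Trivial types: low-value extensions.
        trivial = path.suffix.lower() in TRIVIAL_EXTENSIONS
        # Duplication: every copy after the first one seen counts as ROT.
        digest = file_digest(path)
        duplicate = digest in seen_digests
        seen_digests.add(digest)

        if stale or trivial or duplicate:
            rot_bytes += stat.st_size

    return rot_bytes / total_bytes if total_bytes else 0.0
```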

Lastly, correlate ROT-heavy areas with known issues, such as long-running jobs or fast-growing differentials, to show where ROT is inflating backup sets and degrading performance.

Method 2: Define ROT criteria with safety rails

The next step is to determine what constitutes ROT, ensuring that cleanup reduces noise without compromising business-critical data.

📌 Use Case: A team identifies large volumes of stale files but hesitates to remove them. By formalizing ROT rules, they can safely quarantine and exclude data without fear of accidental loss.

Break ROT into distinct categories so that remediation is precise and predictable:

- Redundant: Duplicate files or superseded content

- Obsolete: Files that have outlived their business purpose or have been replaced by updated versions

- Trivial: Low-value items like caches, temp data, thumbnails, or logs that don’t require protection
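One way to encode these categories with a safety rail is sketched below. Protected paths are checked first and can never be classified as ROT. The pattern lists, the `OBSOLETE_AFTER_DAYS` threshold, and the order in which categories are tested are all hypothetical choices for illustration.

```python
from enum import Enum
from fnmatch import fnmatchcase


class RotCategory(Enum):
    REDUNDANT = "redundant"
    OBSOLETE = "obsolete"
    TRIVIAL = "trivial"


# Hypothetical patterns; replace with paths from your classification policy.
PROTECTED_PATTERNS = ["*/legal_hold/*", "*/finance/*"]
TRIVIAL_PATTERNS = ["*.tmp", "*/cache/*", "*.thumb"]
OBSOLETE_AFTER_DAYS = 730


def classify(path, is_duplicate, days_since_modified):
    """Return a RotCategory, or None if the file is not ROT or is protected."""
    # Safety rail: protected paths are never classified as ROT.
    if any(fnmatchcase(path, p) for p in PROTECTED_PATTERNS):
        return None
    if is_duplicate:
        return RotCategory.REDUNDANT
    if any(fnmatchcase(path, p) for p in TRIVIAL_PATTERNS):
        return RotCategory.TRIVIAL
    if days_since_modified > OBSOLETE_AFTER_DAYS:
        return RotCategory.OBSOLETE
    return None
```

Returning `None` for protected paths, rather than a separate "protected" category, keeps downstream remediation code from ever seeing those files.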

Method 3: Build a pre-backup ROT pipeline

A pre-backup pipeline clears ROT before it inflates differential sets.

📌 Use Case: A backup window continues to overrun despite hardware upgrades. By running a lightweight ROT scan before each differential job, the team can immediately reduce churn and bring backups back within the SLA.

There are three main sub-steps to follow when building a pre-backup ROT pipeline:

Schedule ROT discovery

Run ROT classification on a cadence that aligns with backup cycles:

- Daily or nightly for high-change repositories

- Weekly for slower-changing or archival data
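To align discovery with the backup cycle, a scheduler can decide whether a scan is due before the next differential. If it is, the scheduler starts the scan early enough to finish first. The sketch below assumes two hypothetical tiers and a fixed two-hour lead time.

```python
from datetime import datetime, timedelta

# Hypothetical cadence tiers mirroring the list above.
SCAN_INTERVAL = {"high": timedelta(days=1), "low": timedelta(weeks=1)}
# Assumed time a scan needs to finish before the differential job starts.
SCAN_LEAD_TIME = timedelta(hours=2)


def next_scan_start(change_rate, last_scan, next_backup):
    """Return when to start the next ROT scan, or None if none is due yet."""
    if next_backup - last_scan < SCAN_INTERVAL[change_rate]:
        return None
    return next_backup - SCAN_LEAD_TIME
```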

Stage remediation in safe phases

Implement ROT handling in steps that minimize risk:

- Phase 1: Quarantine suspicious items

- Phase 2: Obtain approval from data owners, compliance, or operations

- Phase 3: Delete items after the defined dwell time
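The three phases can be modeled as a small state machine. In this sketch, nothing is deleted unless it was approved and has sat in quarantine for the full dwell time. The 30-day `DWELL_TIME` is a hypothetical value, and measuring dwell from the quarantine date (not the approval date) is a design choice. The `delete` callable is injected, for example `os.remove` in production, so the logic can be tested without touching real files.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum
from typing import Callable, Optional


class Phase(Enum):
    QUARANTINED = 1
    APPROVED = 2
    DELETED = 3


DWELL_TIME = timedelta(days=30)  # hypothetical dwell period


@dataclass
class RotItem:
    path: str
    quarantined_at: datetime
    phase: Phase = Phase.QUARANTINED
    approver: Optional[str] = None


def approve(item: RotItem, approver: str) -> None:
    """Phase 2: record sign-off; only quarantined items can be approved."""
    if item.phase is not Phase.QUARANTINED:
        raise ValueError(f"{item.path} is not in quarantine")
    item.phase = Phase.APPROVED
    item.approver = approver


def delete_if_due(item: RotItem, now: datetime, delete: Callable[[str], None]) -> bool:
    """Phase 3: delete only approved items whose dwell time has elapsed."""
    if item.phase is Phase.APPROVED and now - item.quarantined_at >= DWELL_TIME:
        delete(item.path)
        item.phase = Phase.DELETED
        return True
    return False
```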

Maintain an exception workflow

Some data must be retained even though it matches ROT criteria, so provide a structured way to flag it. Each exception should record an owner, a reason, and an expiry date. Automatically re-review exceptions when they expire, and log why each item was spared from deletion.
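A minimal exception register might look like the sketch below. An unexpired exception spares its path and logs the owner and reason. Expired exceptions are surfaced for re-review instead of silently lapsing into deletion. The `RotException` record and the logger name are hypothetical.

```python
import logging
from dataclasses import dataclass
from datetime import date

log = logging.getLogger("rot.exceptions")  # hypothetical logger name


@dataclass(frozen=True)
class RotException:
    path: str
    owner: str
    reason: str
    expires: date


def is_spared(path, exceptions, today):
    """True if an unexpired exception covers `path`; logs why it was spared."""
    for exc in exceptions:
        if exc.path == path and exc.expires > today:
            log.info("Sparing %s: owner=%s reason=%s expires=%s",
                     path, exc.owner, exc.reason, exc.expires)
            return True
    return False


def due_for_review(exceptions, today):
    """Expired exceptions that must be re-reviewed before the item can be deleted."""
    return [exc for exc in exceptions if exc.expires <= today]
```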

Method 4: Tune backup scopes and cadence

Tune scopes and cadence to reduce noise and align protection efforts with data change rates.

📌 Use Case: An environment has a mix of fast-changing project shares and largely static archives, yet they’re all backed up on the same aggressive schedule. By splitting repositories and refining exclusions, the team reduces unnecessary workload and significantly shortens the backup window.

Exclude confirmed ROT and low-value paths

Once you verify ROT, remove it from backup selections:

- Exclude paths that generate trivial data

- Apply file-type exclusions for caches, temp files, thumbnails, and logs

- Preserve custom allow-lists to avoid excluding critical app data
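Most backup products have their own exclusion syntax. The sketch below only illustrates the precedence rule implied by the list above: allow-list entries are checked first, so a broad exclusion such as `*.log` cannot remove logs an application needs for recovery. All patterns are hypothetical.

```python
from fnmatch import fnmatchcase

# Hypothetical patterns for illustration only.
EXCLUDE_PATTERNS = ["*.tmp", "*/Thumbs.db", "*/cache/*", "*.log"]
# Allow-list entries always win, e.g. transaction logs an application needs to restore.
ALLOW_PATTERNS = ["*/app/db/*.log"]


def in_backup_scope(path):
    """Apply the allow-list first, then exclusions; anything unmatched stays in scope."""
    if any(fnmatchcase(path, p) for p in ALLOW_PATTERNS):
        return True
    return not any(fnmatchcase(path, p) for p in EXCLUDE_PATTERNS)
```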

Group repositories by change rates

Match backup cadence to how quickly data changes:

- High-change sets: daily differentials or continuous protection

- Moderate-change sets: every two to three days

- Low-change or archival sets: weekly or monthly cycles
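Grouping repositories by change rate can be reduced to a daily change ratio, meaning bytes changed per day divided by total bytes, bucketed into tiers. The 5% and 1% thresholds below are hypothetical. Derive real ones from the baseline telemetry gathered earlier.

```python
# Hypothetical thresholds: fraction of a repository's bytes that change per day.
TIERS = [
    (0.05, "daily"),
    (0.01, "every 2-3 days"),
    (0.0, "weekly or monthly"),
]


def cadence_for(changed_bytes_per_day, total_bytes):
    """Pick the first tier whose threshold the daily change ratio meets."""
    ratio = changed_bytes_per_day / total_bytes if total_bytes else 0.0
    for threshold, cadence in TIERS:
        if ratio >= threshold:
            return cadence
    return TIERS[-1][1]


def group_by_cadence(repos):
    """repos maps name -> (changed_bytes_per_day, total_bytes)."""
    groups = {}
    for name, (changed, total) in repos.items():
        groups.setdefault(cadence_for(changed, total), []).append(name)
    return groups
```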

Apply app-aware exclusions

Some folders generate heavy churn but provide no restore value. Common examples include:

- Application caches

- Temp and staging directories

- Build logs and system-generated clutter

Method 5: Validate with restore-focused checks

This step ensures your exclusions and cadence changes don’t compromise recovery objectives.

📌 Use Case: After excluding several ROT-heavy paths, a team wants to confirm that nothing essential was lost. Running quick restore drills on priority datasets shows that backups still meet the RPO/RTO requirements.

Run targeted restore drills on important datasets after each ROT cleanup cycle. These tests confirm that ROT removal and cadence adjustments haven’t disrupted the integrity or availability of business-critical files.

Verify that RPO and RTO targets are met. Removing ROT often shortens restore times, but the priority is to confirm that data recency (RPO) and recovery speed (RTO) still meet business requirements.
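Each restore drill can be checked mechanically against both objectives. The RPO check measures how old the newest restorable copy was when the drill started. The RTO check compares the measured restore duration against its target. The `DrillResult` fields are hypothetical; populate them from your own drill records.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class DrillResult:
    dataset: str
    recovery_point: datetime    # timestamp of the newest restorable copy
    drill_started: datetime
    restore_duration: timedelta


def check_drill(result, rpo, rto):
    """Return a list of RPO/RTO violations; an empty list means the drill passed."""
    violations = []
    data_age = result.drill_started - result.recovery_point
    if data_age > rpo:
        violations.append(f"{result.dataset}: recovery point {data_age} old exceeds RPO {rpo}")
    if result.restore_duration > rto:
        violations.append(f"{result.dataset}: restore took {result.restore_duration}, exceeds RTO {rto}")
    return violations
```

A non-empty list is the trigger for the rollback described next.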

If a validation step reveals that an exclusion harmed restore utility, roll back the change. Re-enable the affected paths or file types, adjust the corresponding ROT rules, and document the correction. Fast remediation preserves trust in the cleanup process and keeps future cycles both aggressive on ROT and safe for recovery.

Method 6: Iterate with feedback

Feeding real backup telemetry back into your ROT rules keeps efficiency gains from eroding over time.

📌 Use Case: A team notices that specific exclusions stop being effective as data patterns shift. By reviewing backup reports each cycle and adjusting rules accordingly, they prevent ROT from creeping back into backup scopes.

Continuous improvement relies on regularly analyzing backup telemetry and using it to refine ROT rules. Adjust quarantine dwell times, exclusion patterns, and review cadences based on what the data shows.

Regular tuning keeps the cleanup process aligned with evolving workloads. As policies mature, expand their coverage to new repositories and applications so that ROT management scales.
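One simple feedback signal is ROT creep: ROT-classified bytes that still land in differentials because no current rule catches them. The sketch compares two consecutive cycles and flags paths where these bytes grew past a threshold. Those paths are candidates for new or updated rules. The per-path input format and the threshold are hypothetical.

```python
def rot_creep(previous, latest, threshold_bytes):
    """Flag paths where unexcluded ROT bytes in the differential grew since the
    previous cycle and now meet or exceed `threshold_bytes`.

    `previous` and `latest` map a top-level path to those bytes for one cycle;
    a path missing from `previous` is treated as having had zero bytes.
    """
    flagged = [
        path
        for path, size in latest.items()
        if size >= threshold_bytes and size > previous.get(path, 0)
    ]
    return sorted(flagged)
```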

Best practices when cutting backup time and storage costs

The following table summarizes the best practices to follow when reducing backup time and storage costs by eliminating ROT data.

| Practice | Purpose | Value delivered |
|---|---|---|
| Baseline measurement | Set targets and prove results | Credible time and storage savings |
| Safety-gated criteria | Prevent accidental loss | Compliance and business trust |
| Pre-backup pipeline | Shrink sets before they form | Shorter differentials, fewer retries |
| Scoped exclusions | Reduce noise, keep signal | Faster restores with relevant data |
| Restore drills | Guard outcomes | Confident RPO/RTO adherence |
| Evidence packets | Transparency | Audit-ready improvements |
| Continuous feedback | Durable gains | Savings that persist over time |

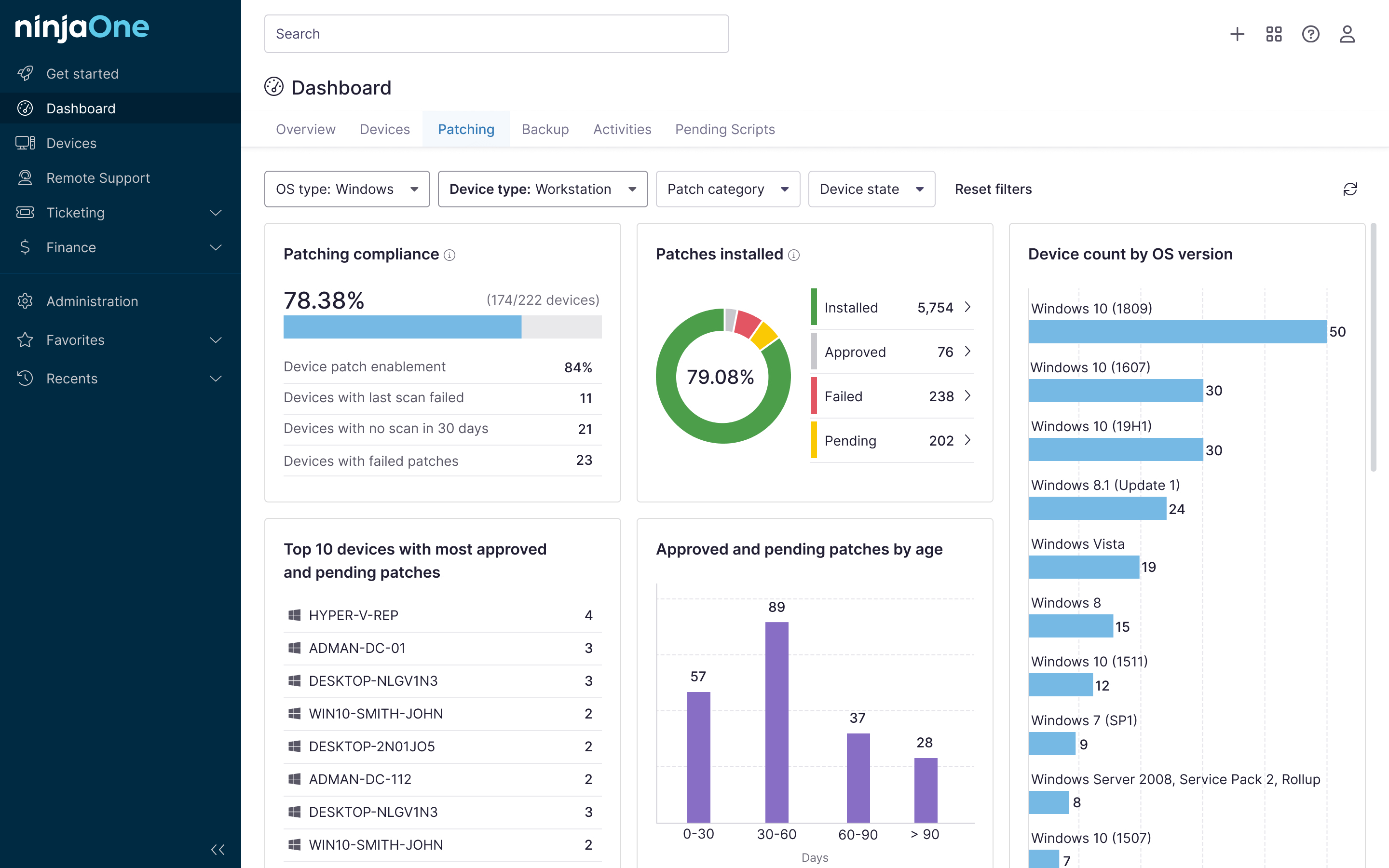

NinjaOne services that help cut backup time and storage costs

You can use NinjaOne’s inventory and file-change telemetry to target high-ROT shares, schedule pre-backup scripts for quarantine and exclusions, and attach efficiency packets to monthly client reviews. Additionally, you can track exceptions with owners and expiries, and trigger restore spot checks.

Quick-Start Guide

NinjaOne can help cut backup time and storage costs by eliminating ROT (Redundant, Obsolete, Trivial) data through several mechanisms:

- Synthetic Backup Chains: NinjaOne’s synthetic backup approach creates full backups from incremental data, reducing the need for multiple full backups and saving storage space.

- Deduplication: NinjaOne automatically identifies and removes duplicate data across backups, significantly reducing storage requirements.

- Azure Blob Storage Tiering: By selecting the appropriate Azure Blob Storage tier (Cool or Archive), businesses can match recovery objectives with cost-effective storage options.

- Backup Optimization: NinjaOne provides tools to optimize backup schedules and retention policies, ensuring businesses only store necessary data.

- Cloud Integration: Seamless integration with cloud storage solutions helps businesses leverage scalable and cost-efficient storage options.

These features collectively help businesses reduce both backup time and storage costs by intelligently managing data and eliminating unnecessary redundancy.

Remove ROT data ahead of differential backups

Eliminating ROT before differential backups reduces backup time, storage costs, and restore noise without sacrificing compliance. With measured baselines, safety-gated criteria, scoped exclusions, and restore validations, teams can work more efficiently and demonstrate the gains each cycle.
