Key Points

- TCP window size directly limits throughput on high-latency networks, even when sufficient bandwidth is available.

- TCP window scaling lifts the original protocol's 64 KB window limit and is essential for efficient performance on modern WAN and cloud links.

- Comparing throughput, round-trip time, and effective window size helps identify protocol-driven performance issues and avoid misdiagnosis.

Various system and network issues can cause slow file transfers. One that may be overlooked is the Transmission Control Protocol (TCP) window size, which directly limits throughput on high-latency links. This guide explains how TCP window size works and why it affects network performance.

Understanding TCP window size

TCP is a transport protocol that provides reliable, ordered delivery of data between systems over an IP network. Data is sent in segments, and the receiver acknowledges what it has received so the sender knows what arrived and what must be retransmitted.

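The window size is the amount of data the sender may have in flight (sent but not yet acknowledged) at any given time. The receiver advertises its current window in every segment it sends, based on how much free buffer space it has, so the value changes throughout the connection rather than being fixed once. Without this limit, a fast sender could overrun a slow receiver's buffer, forcing it to drop data that then has to be retransmitted. The window size therefore sets the pace of data transfer based on the receiver's capacity.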
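To illustrate the sliding window, here is a minimal Python sketch of the check a sender makes before transmitting more data. The parameter names mirror the SND.UNA and SND.NXT variables from the original TCP specification (RFC 793), but the function itself is a hypothetical simplification that ignores sequence-number wraparound and congestion control.

```python
# Simplified sender-side view of TCP flow control (sequence numbers in bytes).
# Hypothetical helper: ignores sequence-number wraparound and congestion control.

def usable_window(snd_una: int, snd_nxt: int, rcv_wnd: int) -> int:
    """Bytes the sender may still transmit before it must wait for an ACK.

    snd_una: oldest unacknowledged sequence number
    snd_nxt: next sequence number to send
    rcv_wnd: window most recently advertised by the receiver
    """
    in_flight = snd_nxt - snd_una          # sent but not yet acknowledged
    return max(0, rcv_wnd - in_flight)     # never negative


# Receiver advertised 65,535 bytes and 40,000 bytes are already in flight.
print(usable_window(snd_una=1_000, snd_nxt=41_000, rcv_wnd=65_535))  # 25535
# Once a full window is in flight, the sender must stop and wait for an ACK.
print(usable_window(snd_una=1_000, snd_nxt=66_535, rcv_wnd=65_535))  # 0
```

Each arriving ACK advances `snd_una`, which reopens the window and lets the sender continue.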

Because the sender must stop once a full window is unacknowledged, it can deliver at most one window of data per round trip. On long or high-latency connections, waiting for acknowledgments takes time: if the window is too small, the sender sits idle even though the network could carry more data. That is why file transfers can be slow even when the bandwidth looks fine.
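Since at most one window can be delivered per round trip, a single connection's throughput is capped at roughly window size divided by round-trip time (RTT), regardless of link bandwidth. The short Python sketch below computes that ceiling; the function name is illustrative, and the model ignores protocol overhead and loss.

```python
def max_throughput_bps(window_bytes: int, rtt_seconds: float) -> float:
    """Upper bound on throughput (bits/s) when at most one window is in flight per RTT."""
    if rtt_seconds <= 0:
        raise ValueError("RTT must be positive")
    return window_bytes * 8 / rtt_seconds


# The same unscaled 65,535-byte window on a short path and a long path:
print(f"{max_throughput_bps(65_535, 0.010) / 1e6:.2f} Mbit/s")  # 52.43 Mbit/s at 10 ms
print(f"{max_throughput_bps(65_535, 0.100) / 1e6:.2f} Mbit/s")  # 5.24 Mbit/s at 100 ms
```

Multiplying the RTT by ten divides the ceiling by ten, even if the link itself is a 1 Gbit/s circuit.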

How TCP window size limits throughput

TCP window size is a safety valve that keeps data transfers stable. However, it may inadvertently limit throughput when the window is too small for the path it runs over.

High bandwidth does not guarantee high throughput, because latency, packet loss, and TCP window size all cap what a single connection can achieve. Window size is the factor most often overlooked during performance troubleshooting.

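Ideally, the TCP window should be at least as large as the path's bandwidth-delay product (BDP), which is the link bandwidth multiplied by the round-trip time. A window of that size lets the sender keep the link full without pausing to wait for acknowledgments.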
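As a worked example of the bandwidth-delay product, the Python sketch below computes the window needed to fill a given path. The helper name is hypothetical; it takes bandwidth in bits per second and RTT in whole milliseconds and uses integer ceiling division to avoid floating-point rounding.

```python
def bdp_bytes(bandwidth_bps: int, rtt_ms: int) -> int:
    """Bandwidth-delay product: bytes that must be in flight to keep the link full.

    BDP = bandwidth (bits/s) * RTT (s) / 8, computed as
    bandwidth_bps * rtt_ms / 8000 with integer ceiling division.
    """
    return (bandwidth_bps * rtt_ms + 7_999) // 8_000


# 1 Gbit/s path with a 50 ms round trip
print(bdp_bytes(1_000_000_000, 50))   # 6250000 bytes, about 6.25 MB
# 100 Mbit/s path with a 20 ms round trip
print(bdp_bytes(100_000_000, 20))     # 250000 bytes
```

Both results are far above 65,535 bytes, so neither path can be filled by a single connection using an unscaled window.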

Why TCP window scaling exists

The original TCP specification capped the window size at 65,535 bytes (about 64 KB) because the window field in the TCP header is only 16 bits wide. This limit worked on early low-latency networks but became a bottleneck as bandwidth grew and high-latency, long-distance paths became common.

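TCP window scaling (defined in RFC 7323, originally RFC 1323) extends the effective window size with a scale factor exchanged in the SYN segments of the TCP handshake; scaling is used only if both endpoints send the option. The 16-bit window value is then multiplied by a power of two, allowing windows of up to about 1 GB. This enables efficient data transfer on high-bandwidth, high-latency networks, including modern WAN and cloud environments.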
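The arithmetic behind window scaling is a left shift: the 16-bit window value is shifted by the negotiated shift count, which RFC 7323 caps at 14. The Python sketch below computes the resulting window and the smallest shift count that covers a target window; the function names are illustrative, not part of any real networking API.

```python
MAX_UNSCALED_WINDOW = 2**16 - 1   # 65,535: largest value of the 16-bit window field
MAX_SHIFT = 14                    # RFC 7323 limits the window scale shift count to 14


def scaled_window(advertised: int, shift: int) -> int:
    """Actual window in bytes: the 16-bit field value left-shifted by the scale factor."""
    if not 0 <= advertised <= MAX_UNSCALED_WINDOW:
        raise ValueError("advertised window must fit in 16 bits")
    if not 0 <= shift <= MAX_SHIFT:
        raise ValueError("shift count must be between 0 and 14")
    return advertised << shift


def min_shift_for(target_bytes: int) -> int:
    """Smallest shift count whose largest window covers target_bytes."""
    for shift in range(MAX_SHIFT + 1):
        if scaled_window(MAX_UNSCALED_WINDOW, shift) >= target_bytes:
            return shift
    raise ValueError("target exceeds the maximum scaled window")


print(scaled_window(MAX_UNSCALED_WINDOW, MAX_SHIFT))  # 1073725440, about 1 GB
print(min_shift_for(6_250_000))                       # 7 (a 6.25 MB window needs shift 7)
```

Because the shift only multiplies by powers of two, the window can be advertised in coarser steps at high shift counts, which is the trade-off the option accepts in exchange for a much larger range.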

How window size interacts with other TCP mechanisms

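TCP window size works alongside other TCP mechanisms to determine actual data transfer performance. The sender is limited by the smaller of the receiver's advertised window and its own congestion window. Congestion control adjusts the congestion window in response to network conditions, and packet loss typically causes it to shrink, reducing the effective window even when the receive window is large.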
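The interaction between the two windows can be sketched in a few lines of Python. The loss response below is a simplified Reno-style multiplicative decrease (halve the congestion window, with a floor of two segments, loosely following RFC 5681); it is an illustrative assumption, and algorithms such as CUBIC or BBR respond to loss differently.

```python
def effective_window(rwnd: int, cwnd: int) -> int:
    """A sender may have at most min(receive window, congestion window) bytes in flight."""
    return min(rwnd, cwnd)


def after_loss(cwnd: int, mss: int = 1460) -> int:
    """Simplified Reno-style reaction to loss: halve cwnd, but keep at least 2 segments.

    The 1460-byte MSS default is an assumption typical of Ethernet paths.
    """
    return max(cwnd // 2, 2 * mss)


rwnd = 8_388_480   # large receive window made possible by window scaling
cwnd = 4_000_000   # congestion window before a loss event

print(effective_window(rwnd, cwnd))   # 4000000: congestion window is the limit
cwnd = after_loss(cwnd)
print(effective_window(rwnd, cwnd))   # 2000000: loss halves the effective window
```

Here the receive window never changes, yet the data in flight per round trip drops by half after a single loss event.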

In addition, some firewalls and middleboxes may interfere with window scaling, for example by stripping or rewriting the window scale option during the handshake, which limits throughput unexpectedly. Modern operating systems also tune receive buffer sizes dynamically (autotuning), so real-world performance reflects the combined effect of these mechanisms rather than window size alone.
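On Linux, the relevant settings are exposed under `/proc/sys/net/ipv4/`: `tcp_window_scaling` (1 when scaling is enabled) and `tcp_rmem` (minimum, default, and maximum receive buffer sizes in bytes that autotuning works within). The Python sketch below reads them; it is Linux-only and returns nothing useful elsewhere. Note that the maximum buffer bounds, but does not exactly equal, the largest window the host will advertise. On Windows, `netsh interface tcp show global` reports the receive window autotuning level instead.

```python
from pathlib import Path
from typing import Optional, Tuple

PROC = Path("/proc/sys/net/ipv4")  # Linux-specific location of TCP sysctls


def parse_tcp_rmem(text: str) -> Tuple[int, int, int]:
    """Parse the 'min default max' receive buffer sizes (bytes) from tcp_rmem."""
    values = [int(v) for v in text.split()]
    if len(values) != 3:
        raise ValueError(f"expected 3 values, got {values}")
    return values[0], values[1], values[2]


def read_setting(name: str) -> Optional[str]:
    """Return a TCP sysctl's contents, or None if unavailable (e.g. on non-Linux systems)."""
    try:
        return (PROC / name).read_text().strip()
    except OSError:
        return None


scaling = read_setting("tcp_window_scaling")
rmem = read_setting("tcp_rmem")
print("window scaling enabled:", scaling == "1" if scaling is not None else "unknown")
if rmem is not None:
    low, default, high = parse_tcp_rmem(rmem)
    print(f"receive buffer autotuning range: {low}-{high} bytes (default {default})")
```

Checking the local host only covers one end; the remote endpoint and any middlebox in between must also allow scaling.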

Diagnosing window size performance issues

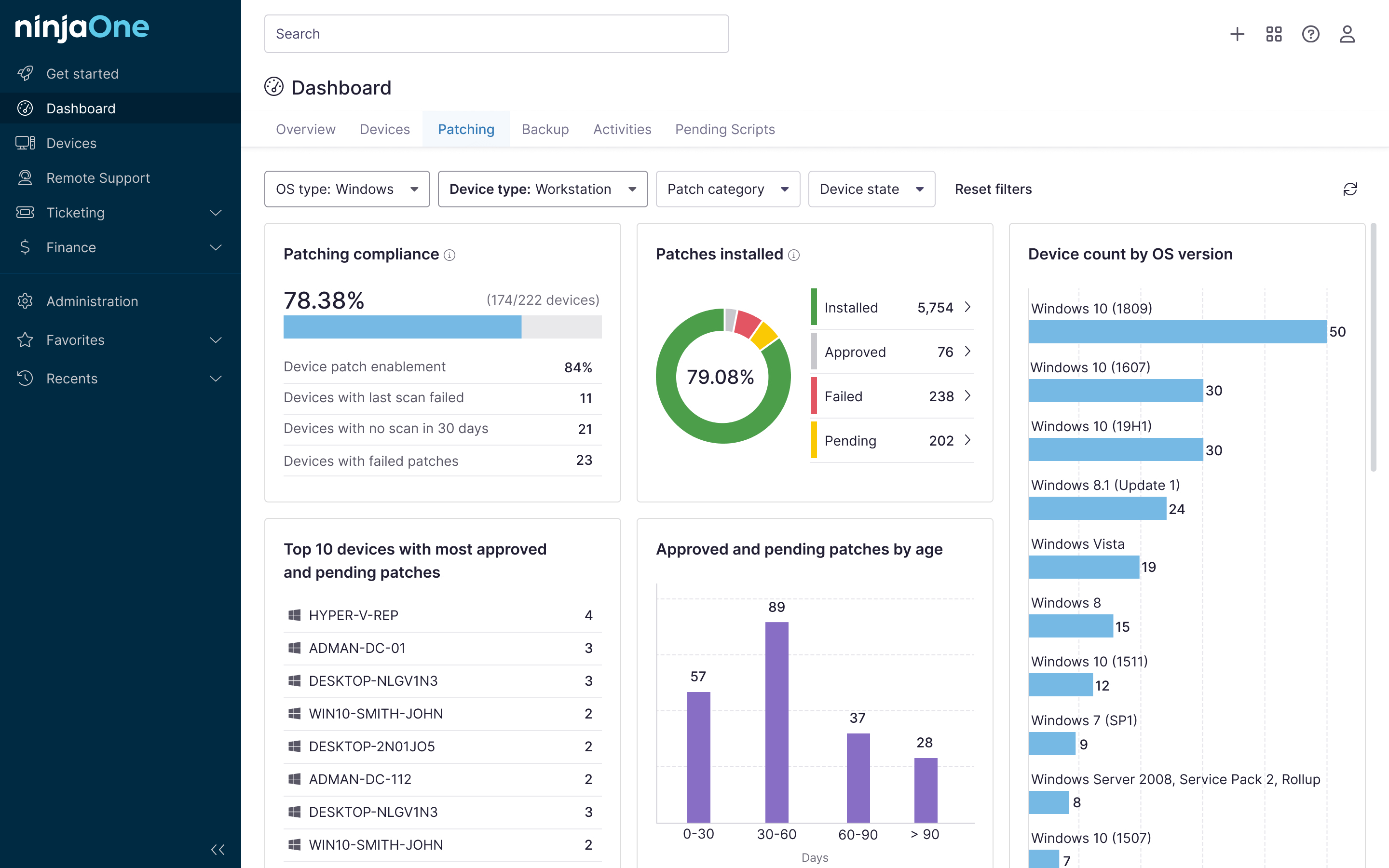

At a glance, this table compares typical symptoms of slow file transfers caused by TCP window size limitations with those caused by other common network issues.

| Type of network issue | Typical symptoms | Likely reason |
|---|---|---|
| TCP window size limitation | Low throughput on high-bandwidth links, smooth but capped transfers, and low link utilization | Window size too small to offset round-trip time |
| High latency | Slow transfers that improve only slightly with more bandwidth, and noticeable delays on long-distance connections | Long round-trip time between sender and receiver |
| Packet loss | Inconsistent speeds, frequent slowdowns, retransmissions, and stalled transfers | Dropped packets triggering TCP retransmission and congestion control |

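To confirm whether TCP window size is the bottleneck, multiply the observed throughput by the round-trip time to estimate how much data is in flight, and compare that figure with the effective window size. If the two are roughly equal, link utilization is low during active transfers, and there is no significant packet loss, the window is likely the limit. Also verify that window scaling is enabled on both endpoints and is not being blocked by firewalls or middleboxes.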
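The check described above can be expressed as a small heuristic. In the Python sketch below, the 0.9 window threshold and 0.7 link-utilization threshold are hypothetical values chosen for illustration, not established cutoffs; tune them to your own measurements.

```python
def window_utilization(throughput_bps: float, rtt_seconds: float, window_bytes: int) -> float:
    """Fraction of the window implied to be in flight: (throughput * RTT / 8) / window.

    A value near 1.0 means the sender keeps a full window in flight every round trip.
    """
    bytes_in_flight = throughput_bps * rtt_seconds / 8
    return bytes_in_flight / window_bytes


def looks_window_limited(throughput_bps: float, rtt_seconds: float, window_bytes: int,
                         link_bps: float, loss_observed: bool,
                         window_threshold: float = 0.9,   # hypothetical cutoff
                         link_threshold: float = 0.7) -> bool:  # hypothetical cutoff
    """Heuristic: full window in flight, underused link, and no packet loss."""
    full_window = window_utilization(throughput_bps, rtt_seconds, window_bytes) >= window_threshold
    underused_link = throughput_bps / link_bps < link_threshold
    return full_window and underused_link and not loss_observed


# 1 Gbit/s link, 100 ms RTT, unscaled 65,535-byte window, 5.1 Mbit/s observed, no loss
print(looks_window_limited(5.1e6, 0.100, 65_535, 1e9, loss_observed=False))  # True
# Same numbers, but with packet loss: loss is the more likely explanation
print(looks_window_limited(5.1e6, 0.100, 65_535, 1e9, loss_observed=True))   # False
```

In the first case, about 63,750 bytes are in flight per round trip, almost exactly the window, while the link sits at roughly 0.5% utilization.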

Automate network performance monitoring

Automating network performance monitoring enables teams to quickly identify patterns such as throughput ceilings, latency spikes, and low link utilization that are easy to miss in one-off tests.

This approach helps teams avoid unnecessary bandwidth upgrades and prevents performance issues from being misattributed to network infrastructure when the limitation is protocol-driven.

Unified IT management tools like NinjaOne support autonomous endpoint management and network monitoring, helping teams correlate endpoint behavior with network performance to identify network and system-related issues quickly and accurately.
