Key Points

- Classify applications before containerization to manage stateless, stateful, and legacy workloads correctly.

- Plan persistent storage and data protection to meet defined RPO and RTO needs.

- Standardize orchestration to ensure consistent configuration and deployment across environments.

- Build portability into pipelines using image signing, SBOM checks, and centralized registries.

- Enforce resource limits and network policies as code for consistent hybrid cloud governance.

- Implement unified monitoring and alerting to maintain visibility across clouds.

- Validate disaster recovery with routine snapshot and restore testing.

Hybrid cloud deployments for container-based apps and services present unique challenges for the IT administrators and managed service providers (MSPs) who deploy, maintain, and manage them. Mixing private on-premises infrastructure and public cloud resources can lead to observability, security, and protection gaps, where containers may go unmonitored, use unvetted dependencies, or fail to be covered by disaster recovery.

This guide explains how to run containers in a hybrid cloud environment while keeping monitoring, security, and data protection consistent across on-premises and cloud infrastructure.

Step 1: Classify apps before you containerize them

Treat data separately from compute resources. Before containerizing apps, take a full inventory and group them into three categories: stateless, stateful (i.e., with attached storage), and apps that cannot be containerized due to hardware or software requirements. Document each group, including a summary of its service level objectives (SLOs) and any constraints.
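The grouping described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the `App` fields, the app names, and the SLO strings are all hypothetical placeholders for whatever your own inventory records.

```python
from dataclasses import dataclass, field
from enum import Enum


class Workload(Enum):
    STATELESS = "stateless"
    STATEFUL = "stateful"
    NOT_CONTAINERIZABLE = "not containerizable"


@dataclass
class App:
    name: str
    has_attached_storage: bool = False
    # Hardware or software requirements that prevent containerization (hypothetical).
    blockers: list = field(default_factory=list)
    slo: str = ""


def classify(app: App) -> Workload:
    # Blockers take priority: an app that cannot be containerized is set aside first.
    if app.blockers:
        return Workload.NOT_CONTAINERIZABLE
    return Workload.STATEFUL if app.has_attached_storage else Workload.STATELESS


def group_inventory(apps):
    # Start with every category present so empty groups still show up in the report.
    groups = {workload: [] for workload in Workload}
    for app in apps:
        groups[classify(app)].append(app.name)
    return groups


# Hypothetical inventory entries for illustration only.
inventory = [
    App("web-frontend", slo="99.9% availability"),
    App("orders-db", has_attached_storage=True, slo="99.95% availability"),
    App("label-printer-service", blockers=["requires USB license dongle"]),
]
groups = group_inventory(inventory)
for workload, names in groups.items():
    print(f"{workload.value}: {names}")
```

Keeping the classification rule in one small function makes it easy to review and to rerun whenever the inventory changes.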

Step 2: Plan persistence and protection

Define the storage classes for stateful containers and align snapshots and backups with recovery point objective (RPO) and recovery time objective (RTO) requirements.
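One way to check that a protection plan lines up with its objectives is to compare the snapshot interval against the RPO and the expected restore time against the RTO. The sketch below assumes a hypothetical `ProtectionPlan` record; the storage class name and all durations are made-up example values.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass
class ProtectionPlan:
    workload: str
    storage_class: str            # hypothetical storage class name
    snapshot_interval: timedelta  # how often snapshots are taken
    estimated_restore: timedelta  # expected time to restore from a snapshot
    rpo: timedelta
    rto: timedelta


def plan_gaps(plan: ProtectionPlan) -> list:
    gaps = []
    # Worst-case data loss is roughly one full snapshot interval.
    if plan.snapshot_interval > plan.rpo:
        gaps.append("snapshot interval exceeds RPO")
    if plan.estimated_restore > plan.rto:
        gaps.append("estimated restore time exceeds RTO")
    return gaps


# Example values are illustrative, not recommendations.
orders_db = ProtectionPlan(
    workload="orders-db",
    storage_class="fast-ssd",
    snapshot_interval=timedelta(hours=4),
    estimated_restore=timedelta(minutes=30),
    rpo=timedelta(hours=1),
    rto=timedelta(hours=2),
)
gaps = plan_gaps(orders_db)
print(orders_db.workload, gaps)
```

Here the four-hour snapshot interval cannot satisfy a one-hour RPO, so the plan is flagged before it reaches production.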

Step 3: Orchestrate consistently

Standardizing on Kubernetes, Docker Swarm, or another orchestration platform or managed container service reduces the number of configurations you need to maintain and helps ensure consistency. It also makes deployment configurations more reliable, enables scaling, and streamlines ongoing tasks such as health checks and rolling updates, which helps you maintain oversight.

Deployment configurations can be stored as Kubernetes manifests and Helm charts under version control so that deployments are repeatable. This allows the configurations to evolve with your applications while remaining compatible across cloud and on-premises environments.
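As a rough illustration of keeping one versioned base configuration and varying only what must differ per environment, the following Python sketch renders a Kubernetes Deployment manifest for two targets. The app name, registry host, image tag, and replica counts are hypothetical; in practice Helm values files or similar tooling would usually play this role.

```python
import copy
import json

# Base manifest kept under version control (names and image are hypothetical).
BASE_DEPLOYMENT = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "web-frontend"},
    "spec": {
        "replicas": 2,
        "selector": {"matchLabels": {"app": "web-frontend"}},
        "template": {
            "metadata": {"labels": {"app": "web-frontend"}},
            "spec": {
                "containers": [
                    {"name": "web", "image": "registry.example.com/web-frontend:1.4.2"}
                ]
            },
        },
    },
}

# Only the values that genuinely differ between environments live here.
ENV_OVERRIDES = {"on-prem": {"replicas": 2}, "cloud": {"replicas": 4}}


def render(env: str) -> dict:
    # Deep copy so rendering one environment never mutates the shared base.
    manifest = copy.deepcopy(BASE_DEPLOYMENT)
    manifest["metadata"]["labels"] = {"environment": env}
    manifest["spec"]["replicas"] = ENV_OVERRIDES[env]["replicas"]
    return manifest


rendered = {env: render(env) for env in ENV_OVERRIDES}
print(json.dumps(rendered["cloud"], indent=2))
```

Because every environment is derived from the same base, a change to the container spec is made once and reaches both on-premises and cloud deployments.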

Step 4: Build portability and policy into your pipelines

Use a single source of truth: a centralized container image registry that handles image signing, software bills of materials (SBOMs), and policy checks. Build a single image for each app and promote that same image across environments using tags, so that builds do not drift toward working in only one cloud environment.
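A simple drift check can confirm that every environment runs the image that was actually promoted. The sketch below assumes images are pinned by digest (the `repository@sha256:...` form) rather than by tag alone; the registry host and digests are fabricated placeholders.

```python
def digest_of(image_ref: str) -> str:
    # A digest-pinned reference has the form "repository@sha256:<hex>".
    _repo, sep, digest = image_ref.partition("@")
    if not sep or not digest.startswith("sha256:"):
        raise ValueError(f"image is not pinned by digest: {image_ref}")
    return digest


def drifted_environments(promoted_ref: str, deployed: dict) -> list:
    # Return the environments whose running image differs from the promoted one.
    expected = digest_of(promoted_ref)
    return sorted(env for env, ref in deployed.items() if digest_of(ref) != expected)


# Placeholder digests for illustration only.
GOOD = "sha256:" + "a" * 64
STALE = "sha256:" + "b" * 64

promoted = f"registry.example.com/web-frontend@{GOOD}"
deployed = {
    "staging": f"registry.example.com/web-frontend@{GOOD}",
    "on-prem": f"registry.example.com/web-frontend@{GOOD}",
    "cloud": f"registry.example.com/web-frontend@{STALE}",
}
drift = drifted_environments(promoted, deployed)
print("drifted:", drift)
```

Comparing digests rather than tags matters because a tag can be moved to a different image, while a digest identifies one exact image.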

Enforce resource limits and network policies as code to ensure that these are also portable and consistent across environments.
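A policy check of this kind can run in a pipeline before anything is deployed. The following sketch assumes manifests have already been loaded into Python dictionaries and looks for two conditions: containers without CPU and memory limits, and namespaces that contain Deployments but no NetworkPolicy. The manifest contents are hypothetical examples.

```python
def containers_missing_limits(manifest: dict) -> list:
    # Flag containers that lack either a CPU or a memory limit.
    containers = manifest["spec"]["template"]["spec"]["containers"]
    missing = []
    for container in containers:
        limits = container.get("resources", {}).get("limits", {})
        if "cpu" not in limits or "memory" not in limits:
            missing.append(container["name"])
    return missing


def namespaces_without_network_policy(manifests: list) -> list:
    # A namespace with workloads but no NetworkPolicy is treated as a violation.
    def namespace(m):
        return m["metadata"].get("namespace", "default")

    workloads = {namespace(m) for m in manifests if m["kind"] == "Deployment"}
    covered = {namespace(m) for m in manifests if m["kind"] == "NetworkPolicy"}
    return sorted(workloads - covered)


# Hypothetical manifests, trimmed to the fields the checks read.
manifests = [
    {
        "kind": "Deployment",
        "metadata": {"name": "web", "namespace": "shop"},
        "spec": {"template": {"spec": {"containers": [
            {"name": "web", "resources": {"limits": {"cpu": "500m", "memory": "256Mi"}}},
            {"name": "sidecar"},
        ]}}},
    },
    {
        "kind": "Deployment",
        "metadata": {"name": "worker", "namespace": "batch"},
        "spec": {"template": {"spec": {"containers": [
            {"name": "worker", "resources": {"limits": {"cpu": "1", "memory": "1Gi"}}},
        ]}}},
    },
    {"kind": "NetworkPolicy", "metadata": {"name": "default-deny", "namespace": "shop"},
     "spec": {"podSelector": {}}},
]

for m in manifests:
    if m["kind"] == "Deployment":
        print(m["metadata"]["name"], "missing limits:", containers_missing_limits(m))
print("namespaces without NetworkPolicy:", namespaces_without_network_policy(manifests))
```

Running the same check against every environment's manifests is what keeps the policy portable: a violation fails the pipeline regardless of where the workload is headed.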

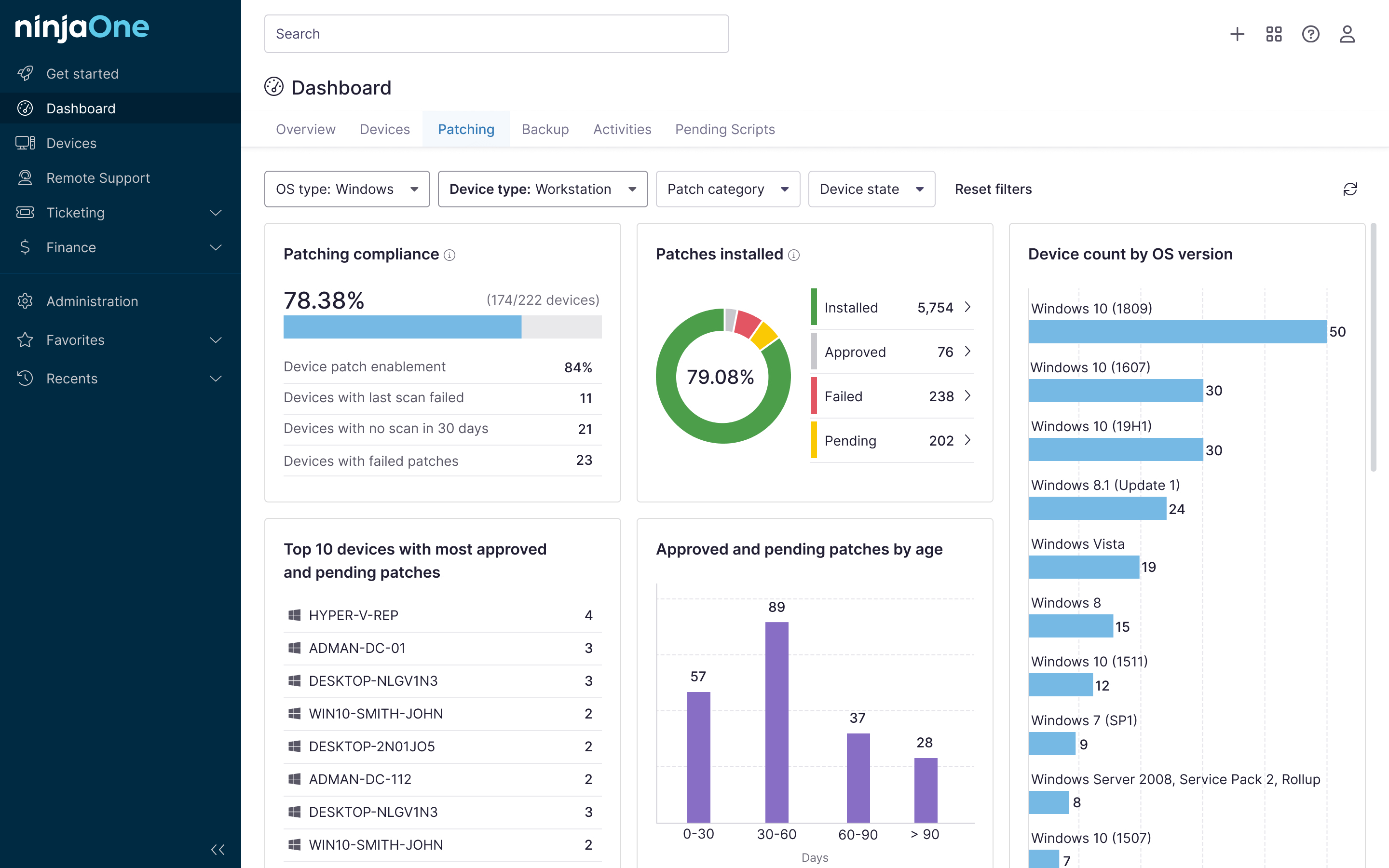

Step 5: Operations and monitoring parity

Create cross-cloud dashboards and implement alert notifications that cover key container metrics such as pod health, restart counts, saturation, and latency. Set alert thresholds that match your availability requirements to avoid blind spots during failover.
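To make the idea of parity concrete, the sketch below applies one set of thresholds to pod samples from both an on-premises and a cloud cluster. The `PodSample` fields, the threshold values, and the sample data are all hypothetical; real values would come from your monitoring stack and your availability requirements.

```python
from dataclasses import dataclass


@dataclass
class PodSample:
    cluster: str
    pod: str
    ready: bool
    restarts_last_hour: int
    cpu_saturation: float   # fraction of the CPU limit in use, 0.0 to 1.0
    p95_latency_ms: float


# One shared set of thresholds, so no cluster is held to a weaker standard.
THRESHOLDS = {"restarts_last_hour": 3, "cpu_saturation": 0.9, "p95_latency_ms": 500.0}


def alerts_for(sample: PodSample) -> list:
    alerts = []
    if not sample.ready:
        alerts.append("pod not ready")
    if sample.restarts_last_hour >= THRESHOLDS["restarts_last_hour"]:
        alerts.append("restart count high")
    if sample.cpu_saturation >= THRESHOLDS["cpu_saturation"]:
        alerts.append("cpu saturated")
    if sample.p95_latency_ms >= THRESHOLDS["p95_latency_ms"]:
        alerts.append("latency high")
    return alerts


# Illustrative samples only.
samples = [
    PodSample("on-prem", "web-7f9c", True, 0, 0.45, 120.0),
    PodSample("cloud", "web-2b1d", True, 5, 0.95, 640.0),
]
report = {f"{s.cluster}/{s.pod}": alerts_for(s) for s in samples}
for key, alerts in report.items():
    print(key, alerts or "ok")
```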

Step 6: Cost and capacity controls

Prevent runaway costs by writing autoscaling rules that match your uptime, capacity, and budget requirements. Use the native cost-tracking tools available in cloud platforms, and optionally pull their data into your own reporting tools for automated, regular cost breakdowns.
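The interaction between autoscaling and budget can be sketched as follows. The replica calculation uses the scaling ratio documented for the Kubernetes Horizontal Pod Autoscaler (current replicas multiplied by current metric over target metric, rounded up); the budget cap on top of it is an illustrative assumption, and all prices and metric values are made up.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float) -> int:
    # Scale in proportion to how far the observed metric is from its target.
    return math.ceil(current_replicas * current_metric / target_metric)


def budget_cap(hourly_budget_cents: int, cost_per_replica_hour_cents: int) -> int:
    # Integer cents avoid floating-point rounding surprises in the division.
    return hourly_budget_cents // cost_per_replica_hour_cents


def capped_replicas(current_replicas, current_metric, target_metric,
                    min_replicas, hourly_budget_cents, cost_per_replica_hour_cents):
    wanted = desired_replicas(current_replicas, current_metric, target_metric)
    # The uptime floor wins over the budget if the two ever conflict.
    ceiling = max(min_replicas, budget_cap(hourly_budget_cents, cost_per_replica_hour_cents))
    return max(min_replicas, min(wanted, ceiling))


# Hypothetical figures: 4 replicas at 90% CPU against a 60% target,
# with a budget of 200 cents per hour and 40 cents per replica-hour.
wanted = desired_replicas(4, 90, 60)
allowed = capped_replicas(4, 90, 60, min_replicas=2,
                          hourly_budget_cents=200, cost_per_replica_hour_cents=40)
print(f"autoscaler wants {wanted}, budget allows {allowed}")
```

In this example the load alone would justify six replicas, but the budget limits the deployment to five. Surfacing that gap explicitly is what lets you decide whether to raise the budget or accept the reduced headroom.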

Step 7: Prove compliance and readiness with evidence

Validate that snapshots restore successfully in both on-premises and cloud environments. Record timings and outcomes (including capacity, cost, and risk) in your documentation platform for later review.
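A restore drill can be wrapped in a small harness that times the run and records the outcome as evidence. In the sketch below, `fake_restore` and `failing_restore` are stand-ins for whatever restore procedure you actually run, and the 60-second RTO is an arbitrary example value.

```python
import json
import time
from datetime import datetime, timezone


def run_restore_drill(environment: str, restore_fn, rto_seconds: float) -> dict:
    # Time the restore and capture the outcome, whether it succeeds or fails.
    started = time.monotonic()
    try:
        restore_fn()
        outcome = "success"
    except Exception as exc:
        outcome = f"failed: {exc}"
    duration = time.monotonic() - started
    return {
        "environment": environment,
        "tested_at": datetime.now(timezone.utc).isoformat(),
        "duration_seconds": round(duration, 3),
        "outcome": outcome,
        "within_rto": outcome == "success" and duration <= rto_seconds,
    }


def fake_restore():
    # Placeholder for a real snapshot restore.
    time.sleep(0.01)


def failing_restore():
    # Placeholder for a restore that goes wrong.
    raise RuntimeError("snapshot not found")


records = [
    run_restore_drill("on-prem", fake_restore, rto_seconds=60),
    run_restore_drill("cloud", failing_restore, rto_seconds=60),
]
print(json.dumps(records, indent=2))
```

The JSON output can be attached to your documentation platform as a dated record of each drill, including the failures, which are often the most useful entries at review time.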

NinjaOne gives you the tools for full hybrid cloud observability and automation

The success of hybrid cloud projects depends on portability and consistency across environments, as well as being able to maintain oversight over operations. NinjaOne provides a comprehensive IT management toolset that extends from private infrastructure to the public cloud, ensuring coverage of all endpoints and workloads, whether physical or running in virtual machines or ephemeral containers.

With NinjaOne automation, you can script image scanning and signing, deployment to staging clusters (local or cloud), restore tests, and the generation of reports with timings and policy audits for later review.