Key Points

- MTTR is an Average Restoration Metric: MTTR is the average time to restore service/functionality after an incident, based on defined start/end points.

- Define MTTR Meanings: MTTR is interpreted as repair/restore/recover/resolve, so teams must state what “start” and “end” timestamps represent to avoid misleading comparisons.

- MTTR Calculation is Simple but Definition-dependent: MTTR is total repair time divided by the number of repairs/incidents; inconsistent incident boundaries create noise.

- Track Distributions, Not Only the Mean: Median and percentiles (p75/p90) reduce distortion from outliers and make trend analysis more reliable than just averages.

- MTTR Differs from Reliability and Detection Metrics: MTBF measures time between failures, MTTD measures detection speed, and some teams use MTTR as “resolve,” which changes what the metric captures.

Mean Time to Repair (MTTR) is a key metric that measures the average time to fix a system or component. It is one of the most commonly cited metrics in IT ops, but the number alone says little. Understanding what it truly represents enables your technicians to improve continuously instead of resorting to superficial (and often costly) fixes.

Mean Time To Repair reflects operational efficiency

To understand Mean Time To Repair, we have to look at its purpose, how it’s calculated, and the unique insights it can offer.

What MTTR measures

Atlassian defines MTTR as the average time to recover from failure, measuring the time between the outage and full operational functionality. Simply put, it is the time your troubleshooting team needed to get a system or tool back up and running again.

The MTTR metric measures the time between failure or “incident start” and fully restored system performance. This makes it a valuable tool for tracking trends and for before/after comparisons in performance reviews, especially when you monitor the median and percentiles (p75/p90) alongside the mean.
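To illustrate why the median and percentiles are worth tracking alongside the mean, here is a minimal Python sketch. The durations are made-up values (not real incident data), and `summarize` is a hypothetical helper name; it relies only on the standard `statistics` module.

```python
import statistics

# Hypothetical incident durations in minutes; one long outage is included on purpose.
durations = [12, 15, 18, 20, 22, 25, 30, 35, 40, 480]

def summarize(durations_min):
    """Return the mean, median, p75, and p90 of a list of durations."""
    # quantiles(n=100) returns the 99 cut points p1..p99, so p75 is index 74.
    cuts = statistics.quantiles(durations_min, n=100, method="inclusive")
    return {
        "mean": statistics.mean(durations_min),
        "median": statistics.median(durations_min),
        "p75": cuts[74],
        "p90": cuts[89],
    }

summary = summarize(durations)
for name, value in summary.items():
    print(f"{name}: {value:.1f} min")
```

With this sample, the single 480-minute outage pulls the mean to roughly 70 minutes, while the median stays at 23.5 minutes. Reporting both shows whether a change in MTTR reflects typical incidents or a single outlier.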

How MTTR is calculated

MTTR is a simple average of incident durations. IBM provides the standard formula for MTTR as:

MTTR = total repair time ÷ number of repairs

To measure MTTR accurately, you need to track the time to detect, diagnose, and repair. Define start/end consistently in your metrics, or your MTTR will run the risk of adding noise to your reports.

Here’s how you can calculate MTTR via Excel:

- Export incident records from your ITSM/RMM (for example, incident ID, start timestamp, restore timestamp, or resolved timestamp).

- Open Microsoft Excel.

- Open the Data tab and click From Text/CSV > your export file > Load.

- Add a column (for example, DurationMinutes).

- Use this formula for tracking durations:

=(EndTime-StartTime)*1440

- Use this formula to compute MTTR:

=AVERAGE(DurationMinutesRange)

- Document your definition in the sheet (top row note):

- “Start = failure time” or “Start = detection time”

- “End = service restored” or “End = incident resolved”
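If you prefer a script to a spreadsheet, the same steps can be sketched in Python. This is only an illustration: the column names (`incident_id`, `start`, `restored`), the timestamp format, and the sample rows are assumptions, and a real ITSM/RMM export will likely differ.

```python
import csv
import io
from datetime import datetime

# Hypothetical export; real column names and timestamp formats will differ.
SAMPLE_CSV = """incident_id,start,restored
INC-1,2024-01-05 09:00,2024-01-05 09:45
INC-2,2024-01-09 14:10,2024-01-09 15:40
INC-3,2024-01-17 22:30,2024-01-17 23:15
"""

TIME_FORMAT = "%Y-%m-%d %H:%M"  # assumed format of the exported timestamps

def duration_minutes(start, end):
    """Minutes between two timestamp strings, like (EndTime-StartTime)*1440 in Excel."""
    delta = datetime.strptime(end, TIME_FORMAT) - datetime.strptime(start, TIME_FORMAT)
    return delta.total_seconds() / 60

def mttr_minutes(csv_text):
    """Average incident duration in minutes, like AVERAGE over the duration column."""
    rows = csv.DictReader(io.StringIO(csv_text))
    durations = [duration_minutes(row["start"], row["restored"]) for row in rows]
    return sum(durations) / len(durations)

# Definition used here: Start = failure time, End = service restored.
mttr = mttr_minutes(SAMPLE_CSV)
print(f"MTTR: {mttr:.1f} minutes")
```

The comment above the final calculation plays the same role as the top-row note in the sheet: it records which start and end points the number is based on.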

MTTR versus related metrics

Different IT ops metrics measure different aspects of operational reliability. It’s vital to distinguish MTTR from these to prepare clear business reviews and avoid confusion.

Here are the most common distinctions you need to know:

- Mean-Time-Between-Failures (MTBF): The average time between one system failure and the next.

- Mean-Time-To-Detect (MTTD): The average time it takes to identify that a failure, security breach, or incident has occurred.

- Mean-Time-To-Resolve: Some organizations use MTTR to mean time to resolve, which extends the repair window to include preventative measures that avoid repeat cases.
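The distinctions above can be made concrete by deriving each metric from the same incident records. The sketch below uses invented timestamps and assumes each incident has a failure, detection, and restoration time. MTBF is computed here as the gap from one failure to the next, which matches the definition above, though some teams measure uptime between incidents instead.

```python
from datetime import datetime

# Hypothetical incidents as (failed, detected, restored) timestamps.
incidents = [
    (datetime(2024, 1, 1, 8, 0), datetime(2024, 1, 1, 8, 10), datetime(2024, 1, 1, 9, 0)),
    (datetime(2024, 1, 3, 8, 0), datetime(2024, 1, 3, 8, 20), datetime(2024, 1, 3, 8, 50)),
    (datetime(2024, 1, 6, 8, 0), datetime(2024, 1, 6, 8, 30), datetime(2024, 1, 6, 9, 10)),
]

def mean_minutes(deltas):
    """Average a list of timedeltas and return the result in minutes."""
    return sum(d.total_seconds() for d in deltas) / len(deltas) / 60

# MTTD: failure to detection. MTTR: failure to restoration (one possible definition).
mttd = mean_minutes([detected - failed for failed, detected, _ in incidents])
mttr = mean_minutes([restored - failed for failed, _, restored in incidents])

# MTBF: gap between consecutive failure start times, reported in hours.
failures = sorted(failed for failed, _, _ in incidents)
mtbf_hours = mean_minutes([b - a for a, b in zip(failures, failures[1:])]) / 60

print(f"MTTD: {mttd:.0f} min, MTTR: {mttr:.0f} min, MTBF: {mtbf_hours:.0f} h")
```

Changing the MTTR start point from failure time to detection time would lower the figure without any real improvement, which is why the chosen definition should be stated next to the number.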

Common MTTR pitfalls

Google’s Site Reliability Engineering (SRE) guidance warns that simplistic “MTTx” statistics can mislead rather than guide. Careful analysis and data quality factor heavily into calculating the mean time to repair correctly.

Here are the most common pitfalls in MTTR IT operations:

- Excluding detection or diagnosis time from measurements

- Closing incidents before full service restoration

- Focusing on symptoms instead of underlying causes

- Comparing MTTR across unrelated systems or environments

Using MTTR as a maturity indicator

When interpreted correctly, Mean Time to Repair can highlight areas for operational improvement. Those insights are useful for evaluating hardened systems and tracking trends, both of which indicate maturity.

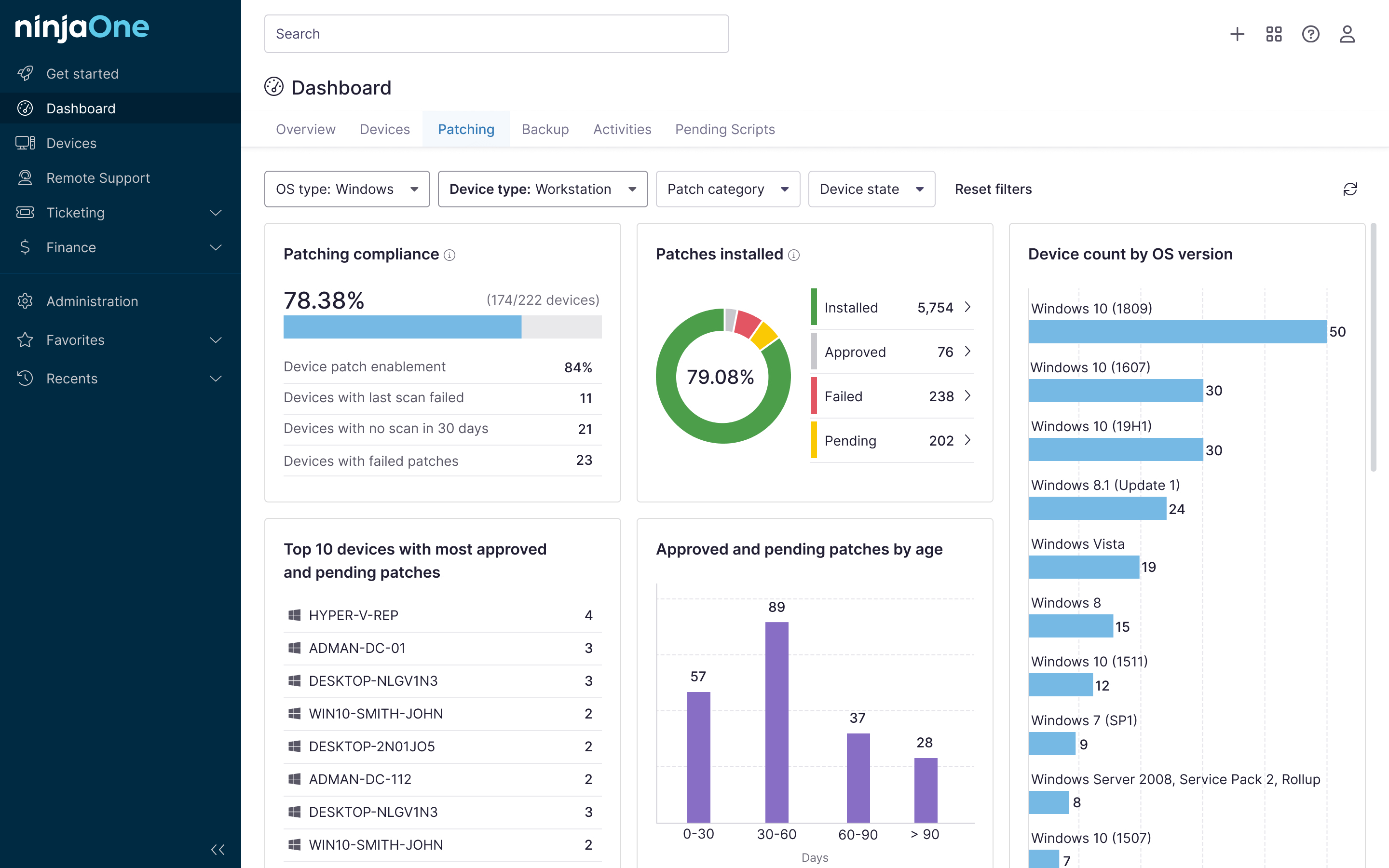

🥷🏻| Centralizing the tools your organization uses to detect and record security breaches and system failures simplifies and enhances visibility.

Read how NinjaOne’s features help uphold operational efficiency.

Important considerations

Recovery speed alone doesn’t define reliability. A system that recovers quickly but fails frequently can result in a poor customer experience and more overhead down the line. This is why DevOps Research and Assessment (DORA) emphasizes interpreting MTTR alongside other metrics rather than as a “standalone score.”

It’s also important to note where the incident took place, as a low MTTR on an ancillary tool weighs less than a quick remediation time on high-risk systems. With Mean Time to Repair, framing matters. So always provide context to avoid misleading conclusions.

On top of that, it’s important to keep a set of metrics rather than just MTTR for well-rounded reports, outcome-first approaches, and careful interpretations.

Common issues to evaluate

MTTR improves, but incidents persist

There can be cases when your Mean Time to Repair stays low, but your incident volume continues to rise. For long-term improvement, review the root cause of each issue and system failure before rolling out permanent fixes.
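One way to spot this pattern is to report incident count next to MTTR for each period. The following sketch uses invented monthly records, and `monthly_trend` is a hypothetical helper name.

```python
from collections import defaultdict

# Hypothetical records: (month, incident duration in minutes).
records = [
    ("2024-01", 60), ("2024-01", 50),
    ("2024-02", 40), ("2024-02", 45), ("2024-02", 35),
    ("2024-03", 30), ("2024-03", 30), ("2024-03", 25), ("2024-03", 35),
]

def monthly_trend(records):
    """Return (month, incident_count, mttr_minutes) tuples in chronological order."""
    by_month = defaultdict(list)
    for month, minutes in records:
        by_month[month].append(minutes)
    return [(m, len(v), sum(v) / len(v)) for m, v in sorted(by_month.items())]

trend = monthly_trend(records)
for month, count, mttr in trend:
    print(f"{month}: {count} incidents, MTTR {mttr:.1f} min")
```

In this sample, MTTR falls from 55 to 30 minutes while the incident count doubles from 2 to 4, a signal that repairs are getting faster but the underlying causes remain.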

MTTR varies widely

If your MTTR chart moves erratically, it may be due to inconsistent scoping, mixed severities, bad definitions, or all three. Segment your efforts to tackle these issues (for example, separate teams for different severities) for consistent categorization and better troubleshooting outcomes.
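Segmenting the data itself often helps as well. As a rough sketch, the snippet below groups made-up incident durations by severity label; the labels (`sev1` to `sev3`) and the helper name `mttr_by_severity` are assumptions for illustration.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical records: (severity label, incident duration in minutes).
incidents = [
    ("sev1", 240), ("sev3", 15), ("sev3", 20),
    ("sev1", 180), ("sev2", 60), ("sev3", 25),
]

def mttr_by_severity(records):
    """Return a dict mapping each severity label to its mean duration in minutes."""
    groups = defaultdict(list)
    for severity, minutes in records:
        groups[severity].append(minutes)
    return {sev: mean(values) for sev, values in sorted(groups.items())}

segmented = mttr_by_severity(incidents)
blended = mean(minutes for _, minutes in incidents)

print(f"Blended MTTR: {blended} min")
for severity, value in segmented.items():
    print(f"{severity}: {value} min")
```

The blended figure of 90 minutes describes none of the groups well: sev1 incidents average 210 minutes and sev3 incidents 20. A shift in the severity mix alone can therefore swing the overall chart.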

MTTR appears unrealistically low

If your MTTR looks too good to be true, it’s most likely due to premature closures. In other words, if you label a case as “resolved” too soon, it will skew your Mean Time to Repair considerably. So, avoid rushing, and define and collect your measurements carefully in each scenario.

Teams resist MTTR tracking

Culturally, teams tend to reject metrics when those metrics are used to embarrass them or minimize their efforts. Create clearer definitions, and interpret MTTR alongside other indicators (like impact, recurrence, and failure rate) to support long-term decision-making.

NinjaOne integration enhances visibility at scale

NinjaOne’s unified endpoint manager gives you more visibility into alerts, incidents, and recovery events that impact your metrics. Understanding MTTR helps teams interpret this data correctly and focus improvement efforts on detection, response, and recovery workflows that matter most.

MTTR is a major aspect of your IT operations metrics

Mean Time to Repair refers to the average time it takes to remediate a system error or failure, serving as a tool to gauge troubleshooting performance over time. Mature IT teams that use this measurement in conjunction with other metrics to refine workflows continuously will find the most success.
