Key Points

- Latency measures how long data packets take to travel across a network and affects overall responsiveness, particularly in interactive and real-time applications.

- Jitter measures variation in packet arrival times, and even low-latency networks can perform poorly when packet delivery is inconsistent.

- Latency and jitter describe different network behaviors, and treating them as the same metric leads to incorrect diagnosis and ineffective remediation.

- Applications respond differently to latency and jitter, with real-time voice and video being highly sensitive to jitter, while file transfers are more impacted by packet loss and can also experience reduced throughput under high-latency conditions.

- Speed tests and bandwidth measurements often do not expose jitter, making dedicated latency and jitter monitoring essential for accurate network troubleshooting.

Often, when we experience network performance problems, they’re described in vague terms or popular catch-all phrases such as lag or slowness. However, it’s essential to understand that these are just symptoms of underlying network connection issues measured by specific metrics. Two of the most commonly misunderstood metrics are latency and jitter.

While latency and jitter both influence network behavior, they measure different aspects of it. This guide explains how jitter and latency differ, how they interact, and how you can diagnose performance issues correctly.

Understand what latency measures

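Latency is the time it takes for a data packet to travel from one point to another across a network. It’s usually measured as round-trip time (RTT): the time for a packet to reach its destination and for the response to come back. It’s often perceived as “lag,” where there is a noticeable delay between an action and the system’s response.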

For example, when you click a link, latency affects how quickly the request reaches the server and how long it takes for the response to return to your device. This delay is influenced by multiple factors, including:

- Transmission delay: the time needed to push bits onto the wire

- Propagation delay: the physical travel time across the medium

- Processing delay: the time spent handling packets at network devices

- Queuing delay: the wait time caused by congestion at network devices

In short, the higher the latency, the longer the delay between action and response, which is most noticeable in real-time or interactive applications.
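To make the four delay components concrete, here is a minimal Python sketch that adds them up for a single link. The function name, the sample packet size and link speed, and the signal speed of roughly 200,000 km/s (a common approximation for light in optical fiber) are illustrative assumptions, not measurements from a real network.

```python
def delay_components_ms(packet_bytes, link_bps, distance_km,
                        processing_ms=0.0, queuing_ms=0.0,
                        signal_km_per_s=200_000.0):
    """Return the four one-way delay components in milliseconds.

    signal_km_per_s is an assumed propagation speed (about two thirds
    of the speed of light, typical for optical fiber).
    """
    return {
        # Time to push every bit of the packet onto the wire.
        "transmission": packet_bytes * 8 / link_bps * 1000,
        # Physical travel time across the medium.
        "propagation": distance_km / signal_km_per_s * 1000,
        # Handling time at network devices (supplied by the caller).
        "processing": processing_ms,
        # Wait time in device queues (supplied by the caller).
        "queuing": queuing_ms,
    }


# Hypothetical link: 1,500-byte packet, 100 Mbps, 1,000 km of fiber.
parts = delay_components_ms(1500, 100e6, 1000,
                            processing_ms=0.5, queuing_ms=2.0)
for name, value in parts.items():
    print(f"{name:>12}: {value:.2f} ms")
print(f"{'total':>12}: {sum(parts.values()):.2f} ms")
```

In this example, propagation (5 ms) dominates transmission (0.12 ms), so a faster link would barely change the total.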

Understand what jitter measures

Jitter measures the variation in packet arrival times. While latency describes how long packets take to travel across the network, jitter explains how consistent that travel time is.

For example, during a voice call, audio becomes choppy when packets arrive unpredictably. One packet may arrive after 20 ms, the next after 50 ms, then another after 25 ms, forcing the receiver to buffer longer or discard packets that arrive too late to play. Even when the average latency is low, inconsistent packet delivery can cause:

- Choppy or robotic audio

- Video artifacts and visual stutter

- Frame drops and playback instability

This is why networks can sometimes feel fast yet still deliver a poor media experience.
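One simple way to put a number on jitter is to average the absolute difference between consecutive packet delays. The sketch below applies that to the 20 ms, 50 ms, 25 ms example from the voice call. The function name is hypothetical, and this is only one of several jitter definitions in use, so a given monitoring tool may calculate it differently.

```python
def mean_jitter_ms(delays_ms):
    """Average absolute change between consecutive packet delays."""
    if len(delays_ms) < 2:
        return 0.0  # Jitter needs at least two packets to compare.
    diffs = [abs(b - a) for a, b in zip(delays_ms, delays_ms[1:])]
    return sum(diffs) / len(diffs)


delays = [20, 50, 25]  # Delays from the voice call example, in ms.
print(f"average latency: {sum(delays) / len(delays):.1f} ms")  # 31.7 ms
print(f"jitter:          {mean_jitter_ms(delays):.1f} ms")     # 27.5 ms
```

The average latency looks healthy at about 32 ms, yet the delay swings by 27.5 ms on average from one packet to the next.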

Recognize how jitter and latency interact

Latency and jitter can be confusing because they both influence overall network behavior, but it’s important to keep in mind that they measure different things. Latency captures how long packets take to travel. Jitter captures how consistently they arrive.

Latency and jitter often influence each other, but aren’t interchangeable. For example:

- A high-latency link can still have low jitter. If every packet consistently takes 150 ms, the experience is delayed but stable.

- A low-latency network can still show high jitter under load. If packets fluctuate between 10 ms and 80 ms, the connection feels unpredictable even if the average latency is low.

⚠️ Warning: Treating latency and jitter as the same metric can result in incorrect conclusions and ineffective remediation.

Identify application sensitivity

Applications don’t treat network conditions the same way. Some can absorb delays without issue, while others break on unstable packet timing. Having this understanding can help you identify which metric is causing performance issues.

How different applications react to latency and jitter

Web browsing

Web browsing generally tolerates both latency and jitter. Content is delivered in short, non-continuous bursts, and built-in buffering and retry mechanisms absorb most timing irregularities.

File transfers

File transfers tolerate latency better than interactive applications because total throughput matters more than immediate responsiveness, although high round-trip times can still reduce throughput. What they don’t tolerate well is packet loss, as every lost packet forces a retransmission and can significantly slow the transfer.
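Two well-known rules of thumb show why round-trip time and loss both cap transfer speed. The sketch below is a rough estimate, assuming a single TCP connection: the first function applies the window-per-round-trip limit, and the second applies the Mathis et al. steady-state approximation, which models classic loss-based congestion control and may not match newer algorithms. The function names and sample values are illustrative.

```python
import math


def window_limited_bps(window_bytes, rtt_s):
    """At most one window of data can be in flight per round trip."""
    return window_bytes * 8 / rtt_s


def loss_limited_bps(mss_bytes, rtt_s, loss_rate):
    """Mathis approximation: rate ~ (MSS / RTT) * (C / sqrt(p)).

    C = sqrt(3/2) is the constant from the original model. Treat the
    result as an order-of-magnitude estimate, not a prediction.
    """
    return (mss_bytes * 8 / rtt_s) * math.sqrt(1.5) / math.sqrt(loss_rate)


# Same 65,535-byte window, two different round-trip times.
print(f"{window_limited_bps(65535, 0.010) / 1e6:.1f} Mbps")  # 52.4 Mbps
print(f"{window_limited_bps(65535, 0.100) / 1e6:.1f} Mbps")  # 5.2 Mbps

# Same 100 ms path, two different loss rates (0.1% and 1%).
for p in (0.001, 0.01):
    print(f"loss {p:.1%}: {loss_limited_bps(1460, 0.100, p) / 1e6:.2f} Mbps")
```

In both models throughput falls as RTT rises, and in the second it also falls with the square root of the loss rate, which is why a lossy long-distance path is the worst case for bulk transfers.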

Voice and video applications

Voice and video applications can tolerate some latency, but they are highly sensitive to jitter. This is because inconsistent packet arrival disrupts real-time playback.
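A simplified jitter buffer model helps show why steady delay is tolerable and variable delay is not. The sketch below assumes one packet is sent every 20 ms and that playback starts a fixed buffer time after the first packet arrives. The function name and all values are hypothetical, and real media stacks typically use adaptive buffers that are more sophisticated than this.

```python
def late_packets(network_delays_ms, buffer_ms, interval_ms=20):
    """Return the indexes of packets that miss their playout deadline."""
    if not network_delays_ms:
        return []
    # Packet i is sent at i * interval_ms and arrives after its delay.
    arrivals = [i * interval_ms + d
                for i, d in enumerate(network_delays_ms)]
    # Playback starts buffer_ms after the first arrival, then needs
    # one packet per interval.
    playout_start = arrivals[0] + buffer_ms
    return [i for i, arrived in enumerate(arrivals)
            if arrived > playout_start + i * interval_ms]


steady = [150, 150, 150, 150, 150]   # High latency, no jitter.
jittery = [20, 50, 25, 80, 30]       # Low latency, high jitter.

print(late_packets(steady, buffer_ms=20))    # []
print(late_packets(jittery, buffer_ms=20))   # [1, 3]
print(late_packets(jittery, buffer_ms=60))   # []
```

The steady 150 ms stream plays cleanly with a small buffer. The jittery stream only plays cleanly once the buffer grows to 60 ms, and that extra buffering is itself added delay.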

Avoid common diagnostic mistakes

Many performance issues persist because the wrong metric is blamed. Now that you have a good understanding of latency and jitter, treating them separately instead of interchangeably helps you arrive at an accurate diagnosis.

Common diagnostic errors include:

- Blaming bandwidth when the issue is caused by jitter or inconsistent packet delivery.

- Adding capacity when latency is caused by physical distance or routing constraints.

- Applying Quality of Service (QoS) without first identifying the affected metric, whether it’s latency, jitter, or packet loss.

Additional considerations

Here are additional factors that provide more context when you’re evaluating performance and help prevent oversights during diagnosis.

Congestion drives jitter instability

Jitter often increases during periods of congestion and buffer saturation. When packets compete for limited resources, queuing delays become inconsistent. This leads to uneven packet delivery even when the average latency changes only slightly.
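The effect of queuing on timing can be sketched with a toy model of a single link that forwards one packet at a time. The arrival times and the 5 ms service time below are made-up values, and the model ignores drops, priorities, and multiple queues.

```python
def queuing_delays_ms(arrival_times_ms, service_ms):
    """FIFO queue on one link: how long each packet waits to be sent."""
    waits = []
    link_free_at = 0.0
    for arrived in arrival_times_ms:
        # A packet starts transmitting when it has arrived AND the
        # link has finished with the packet ahead of it.
        start = max(arrived, link_free_at)
        waits.append(start - arrived)
        link_free_at = start + service_ms
    return waits


# Six packets in both cases, each needing 5 ms on the link.
evenly_spaced = [0, 10, 20, 30, 40, 50]
bursty = [0, 1, 2, 3, 40, 41]

print(queuing_delays_ms(evenly_spaced, service_ms=5))
print(queuing_delays_ms(bursty, service_ms=5))
```

Evenly spaced packets never wait, while packets that arrive in bursts wait between 0 and 12 ms depending on their position in the queue. That spread in waiting time is what the receiver sees as jitter.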

Latency grows with distance and routing complexity

Naturally, latency increases as packets travel farther. Physical distance or suboptimal routing paths introduce unavoidable delays that can’t be resolved through bandwidth increases.

Packet loss amplifies jitter effects

Packet loss can amplify the effects of jitter. Lost packets force recovery mechanisms, delay compensation, or retransmissions, all of which increase timing irregularities.

Monitoring tools must report both metrics clearly

Monitoring tools must report latency and jitter as separate metrics rather than blending them into a single connection quality score. This supports accurate diagnosis.
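As an illustration of reporting the metrics separately, the sketch below summarizes a series of round-trip probes into three independent values. The `LinkReport` type and `summarize` function are hypothetical names, lost probes are represented as `None`, and jitter is calculated across the gaps left by lost probes, which is a simplification.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional, Sequence


@dataclass
class LinkReport:
    avg_latency_ms: float
    jitter_ms: float
    loss_pct: float


def summarize(rtt_samples_ms: Sequence[Optional[float]]) -> LinkReport:
    """Report latency, jitter, and loss as three separate metrics."""
    received = [s for s in rtt_samples_ms if s is not None]
    if not received:
        raise ValueError("no successful probes to summarize")
    # Jitter: average absolute change between consecutive replies.
    diffs = [abs(b - a) for a, b in zip(received, received[1:])]
    lost = len(rtt_samples_ms) - len(received)
    return LinkReport(
        avg_latency_ms=mean(received),
        jitter_ms=mean(diffs) if diffs else 0.0,
        loss_pct=100.0 * lost / len(rtt_samples_ms),
    )


# Five probes, one lost.
print(summarize([20, 50, None, 25, 30]))
```

Keeping the three fields separate lets you see, for example, that a link with acceptable average latency still has jitter or loss worth investigating.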

Troubleshooting

The following user-reported symptoms each point to a specific network metric. Here’s how to diagnose each one correctly.

Users report choppy calls

When users report choppy audio or unstable video, investigate jitter first. Inconsistent packet delivery is the most common cause of real-time media disruption.

Delayed responses

When actions feel slow or responses lag, measure round-trip latency. Persistent delay between input and feedback typically indicates excessive network latency rather than instability.

Inconsistent performance

If performance fluctuates throughout the day, look for jitter spikes. Variability under load often signals congestion or queuing issues.

Good speed test results, but poor calls

Strong throughput results do not rule out performance problems. Most speed tests primarily measure bandwidth and may not clearly expose jitter. Good speeds can coexist with unstable packet delivery.

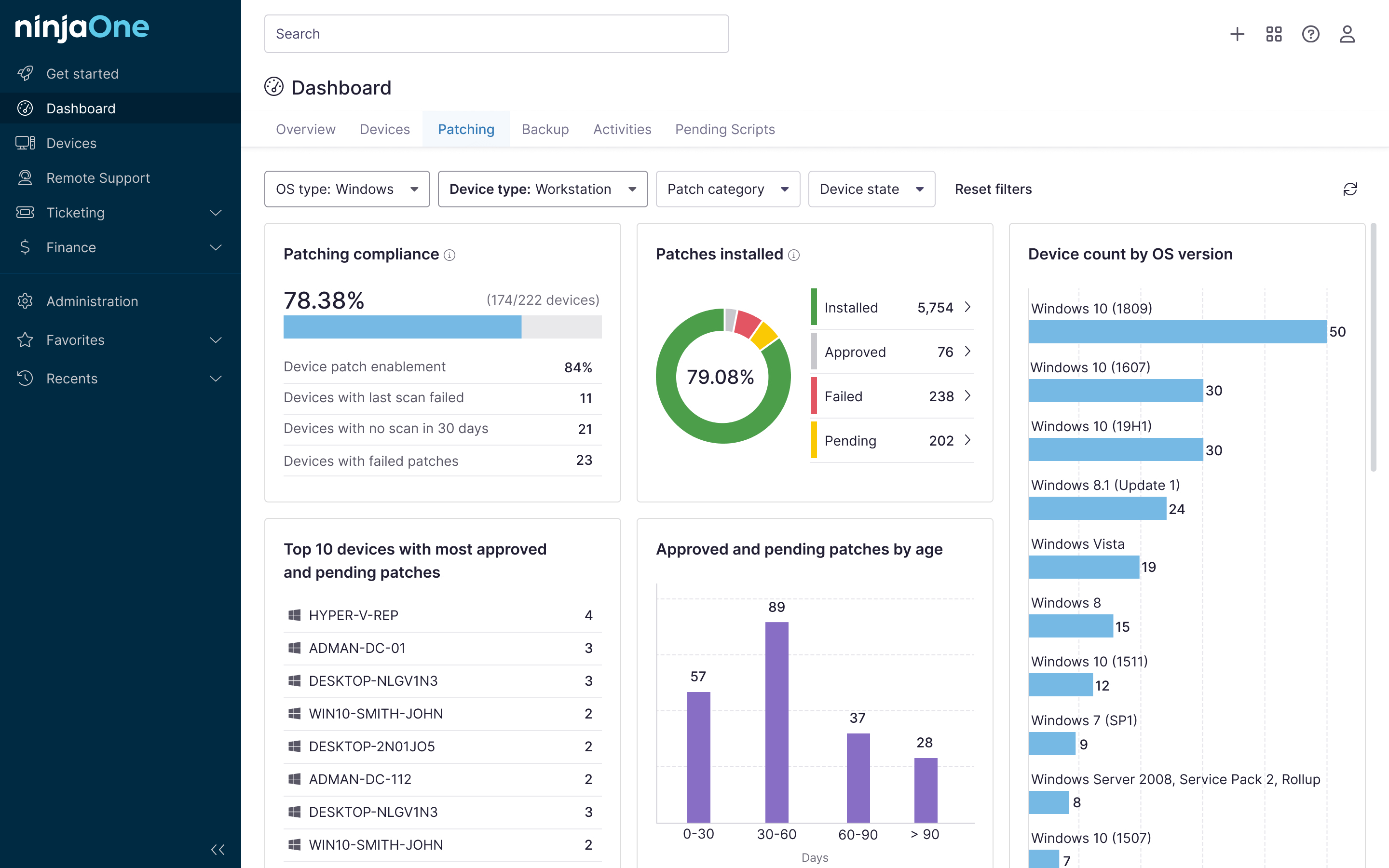

NinjaOne integration

NinjaOne helps teams monitor endpoint and network performance through its Network Management Service (NMS). Here’s how it supports this approach:

| NinjaOne capability | How it helps |
| --- | --- |
| Network Management Service (NMS) | Provides centralized visibility into endpoint and network performance, helping teams identify whether issues originate from devices, infrastructure, or the network path. |
| Device-level monitoring | Identifies endpoint-related performance bottlenecks that may appear similar to latency or jitter issues, reducing misdiagnosis. |
| Network traffic monitoring | Monitors network device interfaces and bandwidth utilization, providing visibility into network traffic levels that may contribute to congestion and jitter. |
| Centralized dashboard | Simplifies troubleshooting by bringing performance data, alerts, and device health indicators into one place. |

Jitter vs latency in real-world network performance

Understanding jitter and latency gives you a more precise vocabulary than vague terms like “lag” or “choppy.” As this guide has shown, the two metrics describe different performance characteristics, and confusing them can result in ineffective troubleshooting.

Having a clear understanding of both metrics allows for faster and more accurate diagnosis of the network issues you’re experiencing.
