Key Points

- Change-induced downtime occurs when routine IT changes trigger unplanned service disruptions.

- Most outages result from hidden dependencies, configuration drift, or human error.

- Reduce production risk via structured change management and staged testing.

- Limit downtime duration through monitoring, alerting, and rollback plans.

- Strengthen change control via governance and cross-team communication.

- Downtime risk cannot be eliminated, but resilience reduces impact.

Organizations evolve over time. While growth is good, the changes happening within their apps, infrastructure, and configurations can become sources of operational risk. Change-induced downtime can happen when routine IT activities like deployments, upgrades, or configuration adjustments introduce unexpected disruptions, affecting system availability or performance.

These disruptions are usually unplanned, so they can have immediate consequences. Read on to learn why everyday changes often lead to outages and how sound change management practices can reduce that risk.

What downtime is

Downtime is any period when a system or service is unavailable or not performing correctly. This can occur either as a planned activity or an unexpected disruption during normal operations.

Downtime, especially the unplanned kind, can affect organizations in many ways, such as:

- Temporary or complete loss of system availability

- Reduced employee productivity and stalled workflows

- Negative impact on customer experience and trust

- Business interruption, even from brief outages

Why changes cause downtime

Instability can stem from routine IT changes that alter systems in unpredictable ways, especially in complex or evolving environments.

Some common reasons for changes leading to outages include:

- Hidden system dependencies that were not tested completely

- Differences between test and production environments

- Human errors during manual configuration or execution

- Compatibility issues that surface only after systems are live

These factors make change-related activity one of the leading causes of unplanned downtime in IT operations.
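One of the causes listed above, differences between test and production environments, can be checked mechanically before a change ships. The following is a minimal sketch, assuming each environment's settings are available as a flat key-value mapping. The function name `find_config_drift`, the `MISSING` sentinel, and the sample keys are hypothetical and chosen only for illustration.

```python
from typing import Any

# Hypothetical sentinel used to mark a key that is absent in one environment.
MISSING = "<missing>"


def find_config_drift(
    test_cfg: dict[str, Any], prod_cfg: dict[str, Any]
) -> dict[str, tuple[Any, Any]]:
    """Return the keys whose values differ between two flat config mappings.

    Each result maps a key to (test_value, prod_value). A key that is absent
    on one side is reported with the MISSING sentinel on that side.
    """
    drift: dict[str, tuple[Any, Any]] = {}
    for key in sorted(test_cfg.keys() | prod_cfg.keys()):
        test_val = test_cfg.get(key, MISSING)
        prod_val = prod_cfg.get(key, MISSING)
        if test_val != prod_val:
            drift[key] = (test_val, prod_val)
    return drift


if __name__ == "__main__":
    # Illustrative settings only; real configurations will differ.
    test_env = {"db_pool_size": 10, "tls": True, "cache_ttl": 60}
    prod_env = {"db_pool_size": 50, "tls": True, "feature_x": "on"}
    for key, (test_val, prod_val) in find_config_drift(test_env, prod_env).items():
        print(f"{key}: test={test_val!r} prod={prod_val!r}")
```

A report like this does not prove a change is safe, but it highlights where a test run may not reflect what production will do.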

Planning and testing to reduce downtime risk

Careful preparation before introducing a change can help teams avoid unexpected service disruptions by giving them time to identify issues early on.

Here are a few planning and testing practices that lower risk:

- Clearly defined change documentation and execution steps

- Testing in non-production environments before release

- Tracking changes through versioning and audit records

- Evaluating potential impact and failure scenarios in advance

Early planning and testing improve confidence that changes can be introduced without negatively affecting production systems.

Monitoring, rollback, and rapid response

Planning should also ensure that teams can observe system behavior and respond to issues quickly, which helps minimize disruptions.

The following capabilities help contain downtime after deployment:

- Real-time monitoring to detect issues as they emerge

- Alerting mechanisms that highlight abnormal performance patterns

- Preplanned and tested rollback options to reverse changes safely and quickly

These measures reduce both the duration and the severity of downtime when problems occur.
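The interplay between monitoring, alerting, and rollback can be sketched in a few lines. This is an illustrative example under stated assumptions, not a production implementation: it assumes error-rate samples arrive as fractions between 0 and 1, and that a rollback can be invoked as a plain callable. The name `watch_and_rollback`, the 5% threshold, and the three-sample rule are all hypothetical values picked for the example.

```python
from typing import Callable, Iterable


def watch_and_rollback(
    error_rates: Iterable[float],
    rollback: Callable[[], None],
    threshold: float = 0.05,
    consecutive_breaches: int = 3,
) -> bool:
    """Consume post-deployment error-rate samples and roll back on a sustained breach.

    Returns True if rollback was triggered, or False if every sample was
    consumed without `consecutive_breaches` samples in a row exceeding
    `threshold`.
    """
    streak = 0
    for rate in error_rates:
        # A single healthy sample resets the streak, so brief spikes are ignored.
        streak = streak + 1 if rate > threshold else 0
        if streak >= consecutive_breaches:
            rollback()
            return True
    return False


if __name__ == "__main__":
    samples = [0.01, 0.09, 0.02, 0.08, 0.07, 0.10, 0.01]
    triggered = watch_and_rollback(samples, lambda: print("rolling back change"))
    print("rollback triggered:", triggered)
```

Requiring several consecutive breaches is a deliberate trade-off: it avoids reversing a change because of one noisy sample, at the cost of reacting a few samples later.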

Governance and communication

Structured governance ensures that changes are introduced in a controlled way. Clear communication is equally important because it helps everyone in the organization understand the potential effects of those changes.

Some core elements of effective change governance include:

- Scheduled change windows and formal review processes

- Communication plans with affected teams and stakeholders

- Defined ownership for impact evaluation and rollback decisions

- Alignment between IT, security, and business functions

Strong governance reduces surprises while improving coordination. This helps teams respond more effectively when issues arise.
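A scheduled change window can also be enforced in tooling rather than by convention alone. The sketch below is one possible check, assuming naive local datetimes and a window defined by a start time, an end time, and the weekdays on which it may open. The function name `in_change_window` and the Saturday 22:00 to 02:00 window are hypothetical.

```python
from datetime import datetime, time


def in_change_window(
    moment: datetime, start: time, end: time, weekdays: frozenset[int]
) -> bool:
    """Return True if `moment` falls inside an approved change window.

    `weekdays` lists the days on which a window may open (Monday is 0).
    If `start` is later than `end`, the window is treated as crossing
    midnight and the early-morning portion belongs to the previous day.
    """
    current = moment.time()
    if start <= end:
        return moment.weekday() in weekdays and start <= current < end
    if current >= start:
        # Late-evening portion: the window opened today.
        return moment.weekday() in weekdays
    if current < end:
        # Early-morning portion: the window opened yesterday.
        return (moment.weekday() - 1) % 7 in weekdays
    return False


if __name__ == "__main__":
    saturday_night = frozenset({5})
    window = (time(22, 0), time(2, 0))
    # 1 June 2024 is a Saturday.
    print(in_change_window(datetime(2024, 6, 1, 23, 0), *window, saturday_night))
    print(in_change_window(datetime(2024, 6, 1, 12, 0), *window, saturday_night))
```

A deployment script could call a check like this before proceeding and refuse to run outside the window unless an emergency override has been approved.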

Operational best practices

To further minimize the risk of downtime, IT teams should adopt consistent operational habits. Following change management best practices helps teams introduce change in a controlled manner and reduce variability.

These practical approaches support reliable change execution:

- Automation of low-risk routine deployment tasks

- Gradual rollout strategies to validate changes with limited exposure

- Post-change and incident reviews to capture lessons learned

Over time, disciplined operations turn change from a source of instability into a predictable process.
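A gradual rollout needs a stable way to decide which systems receive a change first. The sketch below shows one common approach, hashing an identifier into 100 buckets, so that a device selected at 10% stays selected when the rollout widens to 50%. The function name `in_rollout` and the identifiers `device-…` and `chg-1` are hypothetical, and this is not a description of how any particular product selects devices.

```python
import hashlib


def in_rollout(device_id: str, change_id: str, percent: int) -> bool:
    """Deterministically decide whether a device is in the first `percent` of a rollout.

    The change ID is mixed into the hash so that different changes do not
    always land on the same early devices.
    """
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    digest = hashlib.sha256(f"{change_id}:{device_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100
    return bucket < percent


if __name__ == "__main__":
    devices = [f"device-{i}" for i in range(1000)]
    for stage in (5, 25, 100):
        selected = sum(in_rollout(d, "chg-1", stage) for d in devices)
        print(f"{stage}% stage selects {selected} of {len(devices)} devices")
```

Because the bucket depends only on the identifiers, each stage is a superset of the previous one, which keeps exposure limited while results from the earlier stage are reviewed.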

Limitations and scope considerations

Even with strong change management practices, no organization can fully avoid downtime, especially in complex and interconnected IT environments.

Organizations must account for these points:

- The impossibility of eliminating all risk in large systems

- Unexpected interactions between components or environments

- Service disruptions originating from external or third-party providers

Acknowledging these limitations and designing for resilience helps teams create downtime prevention strategies that reduce the overall impact of outages when they do occur.

Common misconceptions

It’s important to clear up some misconceptions about downtime that can lead organizations to underestimate risk or place responsibility in the wrong areas.

Downtime only happens with major changes

Small updates, patches, or configuration adjustments can still affect systems and trigger outages, especially when dependencies are overlooked.

Automation eliminates downtime risk

At best, automation reduces manual errors and improves consistency. However, poorly designed or insufficiently tested automation can still introduce failures.

Downtime is IT’s problem only

While IT teams manage systems, downtime impacts the entire organization. Therefore, business continuity and response planning are shared responsibilities across technical and non-technical teams.

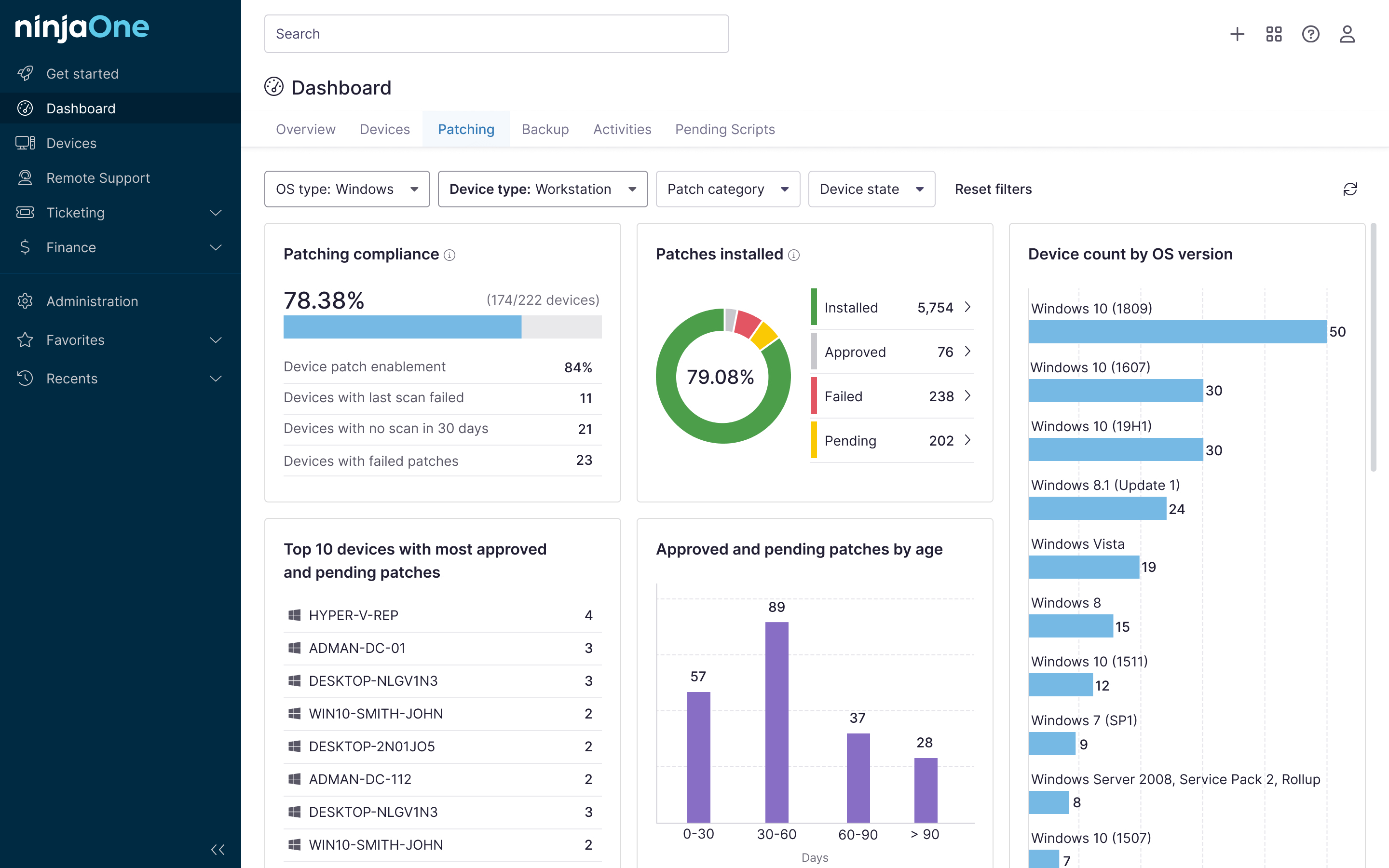

NinjaOne integration (optional)

Effective change management requires clear visibility and the ability to quickly detect and understand issues when they occur. NinjaOne can help in the following ways:

| NinjaOne capability | How it helps reduce change-induced downtime |
| --- | --- |
| Deployment and change visibility | Allows teams to see what systems were modified, when changes occurred, and how those changes align with emerging issues |
| Performance monitoring | Identifies abnormal behavior soon after changes are introduced, enabling faster investigation and containment |
| Root cause analysis support | Connects incidents to recent changes so teams can determine underlying causes more efficiently |

Quick-Start Guide

Best Practices to Further Reduce Risk

- Schedule Changes During Off-Peak Hours: Use NinjaOne’s maintenance window settings to apply changes when impact is minimal.

- Document Everything: Use NinjaOne’s reporting tools to log changes, outcomes, and lessons learned.

- Test in a Controlled Environment: Apply changes to a small subset of devices before full deployment.

- Monitor Post-Change: Use NinjaOne’s dashboards to watch for performance issues immediately after deployment.

While no tool can completely eliminate the risk of change-induced downtime, NinjaOne provides the infrastructure, automation, and visibility needed to minimize those risks. By leveraging its backup, monitoring, and change management features, you can significantly reduce the likelihood of outages and quickly recover if issues arise.

Managing change without sacrificing reliability

The risk of downtime from IT changes is never zero in environments where systems constantly evolve to meet organizational demands. Because changes can expose hidden dependencies, gaps, and weaknesses, organizations must carry out adjustments and deployments deliberately, with careful planning, testing, monitoring, and governance. Doing so reduces the frequency and impact of change-induced downtime while still allowing improvements to ship.
