The financial and operational ramifications of downtime have become increasingly pronounced over the past seven years. In 2014, Gartner estimated that downtime costs organizations an average of $300,000 per hour. More recent statistics stand in sharp contrast to this six-figure estimate: 44% of organizations now put their hourly downtime costs at over $1 million, exclusive of any ensuing penalties or legal fees.

Even as cloud vendors and partners promise 99.9% uptime, the day-to-day reality of this SLA acknowledges that downtime is inevitable: even if a provider hits 99.9% exactly, customers must still expect almost 9 hours of frustration per year. Many businesses treat their preventative mechanisms as ‘out of sight, out of mind’; however, when the reality of downtime is ignored, its cost can spiral uncontrollably.
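The arithmetic behind an uptime guarantee is easy to check for yourself. The snippet below is a minimal sketch that converts an uptime percentage into the hours of downtime it still permits each year. It assumes a 365-day year, and the function name `allowed_downtime_hours` is purely illustrative.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours; assumes a non-leap year


def allowed_downtime_hours(uptime_percent: float) -> float:
    """Return the hours of downtime per year that an uptime SLA still permits."""
    if not 0 <= uptime_percent <= 100:
        raise ValueError("uptime_percent must be between 0 and 100")
    return HOURS_PER_YEAR * (100 - uptime_percent) / 100


# Compare a few common SLA tiers.
for sla in (99.0, 99.9, 99.99):
    hours = allowed_downtime_hours(sla)
    print(f"{sla}% uptime still allows {hours:.2f} hours of downtime per year")
```

Each extra "nine" cuts the permitted downtime by a factor of ten, which is why the exact figure in an SLA matters far more than it first appears.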

What is downtime?

Downtime refers to the period during which a system, service, machine, or operation is unavailable or not functioning at full capacity. Users are left unable to access online services, employee productivity becomes greatly limited, and customers are locked out of interacting with your organization. These ramifications stack on top of one another, snowballing into significant repercussions for an organization’s reputation.

Facebook, now Meta, has a user count in the billions. In late 2021, almost every Meta-owned platform went down for over 5 hours. The outage was the result of a change in the configuration of various backbone routers. These routers oversee the flow of network traffic between Facebook’s data centers, so the update unleashed a domino effect. According to Cloudflare, Facebook essentially announced via the Border Gateway Protocol (BGP) that the routes leading to WhatsApp, Instagram, Facebook, and more were no longer available. Consequently, anyone attempting to reach Facebook was unable to locate the necessary pathway.

In essence, this outage didn’t just render Facebook inaccessible: it led to the disappearance of all services and operations associated with it. This meant that end-users weren’t the only ones locked out; Facebook’s own internal systems relied on the same infrastructure, making it hard for employees to diagnose and resolve the problem. The outage led to a 4.9% drop in Facebook’s share price, while CEO Mark Zuckerberg’s personal wealth fell by $6bn.

While many organizations choose to ignore the reality of uncontrolled downtime, tracking it can provide a crucial metric for judging the reliability and performance of your organization’s operations.

How downtime happens

Not all downtime costs the same. There are two primary types, each of which must be approached in its own way.

Planned downtime

This is a scheduled interruption of operations or services for maintenance, updates, repairs, or other planned activities. It is typically conducted during non-peak hours to minimize disruption, and end-users are given ample warning. Planned downtime is a vital part of reducing the other type: unplanned downtime.

Unplanned downtime

Unplanned downtime occurs unexpectedly, is disruptive, and can result in major financial and operational losses.

Unplanned downtime can be further broken down into two main forms. The first is caused by human factors. According to a report last year from the Uptime Institute, human error has caused nearly 40% of all major incidents over the last three years. Of these, almost 90% are caused by staff failing to follow protocols, or by flaws in the procedures themselves. For example, protocols governing resource allocation need to be carefully monitored, as underestimated resource requirements can be a fast track to server overload and failure.

While internal threats wipe millions off yearly revenues, the other, more frightening cause of downtime is external. External causes range from natural disasters, such as floods that threaten physical data centers, to cyberattacks that leverage slight misconfigurations against you. Ransomware is one of this category’s most severe threats, thanks to its ability to completely encrypt otherwise safe backups.

Common causes of downtime

Unfortunately, there are plenty of situations that create unplanned downtime, such as:

- Cyberattacks and data breaches

- Environmental emergencies

- Equipment failure

- Human error

- Networking issues

- Power outages

These issues don’t apply to planned downtime, which often occurs as a result of patching, backup, or other IT processes. When planned downtime occurs, an IT team will notify any affected parties in advance.

Delving into the cost of downtime

The equation governing downtime cost is relatively simple. Finding the true numbers behind each component can represent a far harder challenge. To develop a working equation, let’s take a closer look at each component.

Cost of downtime = lost revenue + lost productivity + cost to recover

Lost revenue

If you’re analyzing a recent outage, the first place to start is identifying which employees were affected and to what extent. Though some employees, such as on-site technicians and engineers, are able to keep working, the vast majority of operational systems now require ongoing internet connectivity. Even in fully automated assembly lines, Internet of Things (IoT) devices are still constantly measuring and supplying the operations teams with real-time efficiency data. Without this, even otherwise offline supply chains may need to be brought to a halt. With minimal employee access to online systems, your company cannot fulfill orders with the same efficiency.

This loss of revenue can become even more severe when applied to critical systems: eCommerce stores that rely solely on an online storefront are completely blocked from revenue generation. By analyzing the sites affected and their role in revenue generation, it becomes possible to pinpoint the daily loss of revenue caused by an outage. This process must be applied to every affected system, and the lost revenue added up across all of them.

Loss of internal productivity

While systems are down, employees are left twiddling their thumbs, and measuring the financial impact of this can seem overwhelming at first. To get a conservative idea of how to value this lost productivity, ask your HR department for a rundown of the average salary across every department affected. Converting this to an hourly rate, then multiplying it by the number of affected employees and the length of the outage, gives you the total value of lost employee wages. Added to the lost revenue, this grants you a realistic view of the daily or even hourly losses.

Cost to recover

Not all employees are left idly counting down the hours until they’re back online: some are scrambling to undo the damage and bring everything back up. The cost of these work hours can spiral quickly – especially when IT teams need to extend working hours. Furthermore, depending on the cause of such downtime, further time and money may be necessary to get everything back online. Whether it’s changing a supplier or restoring vital servers, these on-the-fly changes need to be factored into the total cost.
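To make the equation concrete, here is a minimal sketch of how the three components might be added up for a single outage. Every figure in it is hypothetical, and the `productivity_loss` factor is an assumption added here to reflect that some employees can keep working during an outage. Swap in the numbers from your own finance and HR teams.

```python
from dataclasses import dataclass


@dataclass
class Outage:
    hours_down: float
    revenue_per_hour: float    # revenue the affected systems normally generate
    affected_employees: int
    avg_hourly_wage: float     # average across the affected departments
    productivity_loss: float   # assumed share of work that stalled, 0.0 to 1.0
    recovery_cost: float       # overtime, contractors, replacement hardware, etc.


def lost_revenue(o: Outage) -> float:
    return o.hours_down * o.revenue_per_hour


def lost_productivity(o: Outage) -> float:
    wages_at_risk = o.hours_down * o.affected_employees * o.avg_hourly_wage
    return wages_at_risk * o.productivity_loss


def cost_of_downtime(o: Outage) -> float:
    # Cost of downtime = lost revenue + lost productivity + cost to recover
    return lost_revenue(o) + lost_productivity(o) + o.recovery_cost


# Hypothetical four-hour outage; all values are illustrative only.
example = Outage(
    hours_down=4,
    revenue_per_hour=25_000,
    affected_employees=120,
    avg_hourly_wage=40,
    productivity_loss=0.75,
    recovery_cost=18_000,
)
print(f"Lost revenue:      ${lost_revenue(example):,.0f}")
print(f"Lost productivity: ${lost_productivity(example):,.0f}")
print(f"Total cost:        ${cost_of_downtime(example):,.0f}")
```

Keeping each component as its own function makes it easy to run the calculation per affected system and then sum the results.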


How to minimize and avoid downtime

While downtime is somewhat inevitable, the damage it causes can be greatly mitigated with some careful planning.

Implement your disaster recovery plan

The initial phase in the development of a Disaster Recovery Plan (DRP) involves the identification and assessment of potential threats and vulnerabilities that could impact your system administration. These factors encompass hardware malfunctions, power interruptions, cyberattacks, fires, floods, earthquakes, and similar events. Additionally, it’s crucial to establish the Recovery Time Objective (RTO) and the Recovery Point Objective (RPO) for critical systems and data. The RTO signifies the maximum tolerable downtime for your system, while the RPO indicates the maximum allowable data loss. Understanding the difference between RTO and RPO is vital, as these metrics play a pivotal role in prioritizing recovery efforts and selecting appropriate backup and recovery strategies.
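One way to see the difference between the two metrics is to check a past incident against them. The sketch below is a minimal illustration, assuming you know when the last good backup finished, when the outage began, and when service was restored. The function name, timestamps, and targets are all hypothetical.

```python
from datetime import datetime, timedelta


def check_objectives(last_backup, outage_start, service_restored, rto, rpo):
    """Compare one incident against its RTO and RPO targets."""
    downtime = service_restored - outage_start      # measured against the RTO
    data_loss_window = outage_start - last_backup   # measured against the RPO
    return {
        "downtime": downtime,
        "data_loss_window": data_loss_window,
        "rto_met": downtime <= rto,
        "rpo_met": data_loss_window <= rpo,
    }


# Hypothetical incident: nightly backup at 02:00, outage from 08:30 to 11:00.
result = check_objectives(
    last_backup=datetime(2024, 1, 10, 2, 0),
    outage_start=datetime(2024, 1, 10, 8, 30),
    service_restored=datetime(2024, 1, 10, 11, 0),
    rto=timedelta(hours=4),
    rpo=timedelta(hours=1),
)
print("RTO met:", result["rto_met"])
print("RPO met:", result["rpo_met"])
```

In this example the service came back within the four-hour RTO, but a nightly backup schedule could never satisfy a one-hour RPO. The recovery point is set by how often you back up, not by how fast you restore.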

With these metrics in place, roles and responsibilities need to be defined across your system administration team and other stakeholders. This involves specifying individuals responsible for initiating, executing, and overseeing the DRP. Furthermore, it involves designating who’s accountable for communicating with both internal and external parties, such as employees, customers, vendors, and regulatory bodies. Given the context of a disaster recovery plan, it’s further essential to define backup personnel and alternatives for each role in case the primary individuals are affected.

Invest in reliable backup systems

While local data backups are useful, be aware that relying solely on this form of backup leaves you vulnerable to physical threats such as fire, flood, and theft. This is why it’s recommended to keep at least three copies of your data, with one of them stored outside of your normal IT infrastructure. The importance of backups, and the role they play in faster recovery, cannot be overstated.

Cloud backup vendors are uniquely positioned to weather downtime, thanks to the ease of scaling and using multiple instances. Consider a Kubernetes setup within the AWS cloud infrastructure, consisting of two nodes. This setup includes an autoscaling trigger that detects when CPU utilization remains consistently above 75% and adds additional pods.

Furthermore, having multiple nodes ensures that your application stays up even if a segment of the cluster experiences an outage. Kubernetes strives to distribute pods evenly across the cluster’s instances. However, it’s imperative that the extra resources arrive promptly, and not after the user experience has already started to suffer.
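To get a feel for how a CPU-based trigger decides how many pods to run, the sketch below mirrors the replica calculation described in the Kubernetes Horizontal Pod Autoscaler documentation: scale the current replica count by the ratio of observed to target utilization, and round up. The 75% target comes from the example above. The minimum and maximum replica bounds are assumptions for illustration, and this is a simplified model, not the autoscaler’s actual code.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_cpu_percent: float,
    target_cpu_percent: float = 75.0,
    min_replicas: int = 2,    # hypothetical lower bound
    max_replicas: int = 10,   # hypothetical upper bound
    tolerance: float = 0.1,   # skip scaling when the ratio is within 10% of 1.0
) -> int:
    """Estimate the pod count an HPA-style CPU trigger would aim for."""
    ratio = current_cpu_percent / target_cpu_percent
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to the target, so leave as-is
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


print(desired_replicas(4, 90))   # above target: scale out
print(desired_replicas(4, 78))   # within tolerance: no change
print(desired_replicas(4, 30))   # well below target: scale in to the minimum
```

Because the result is rounded up and clamped to the configured bounds, the autoscaler errs on the side of spare capacity, which helps the extra pods arrive before users notice a slowdown.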

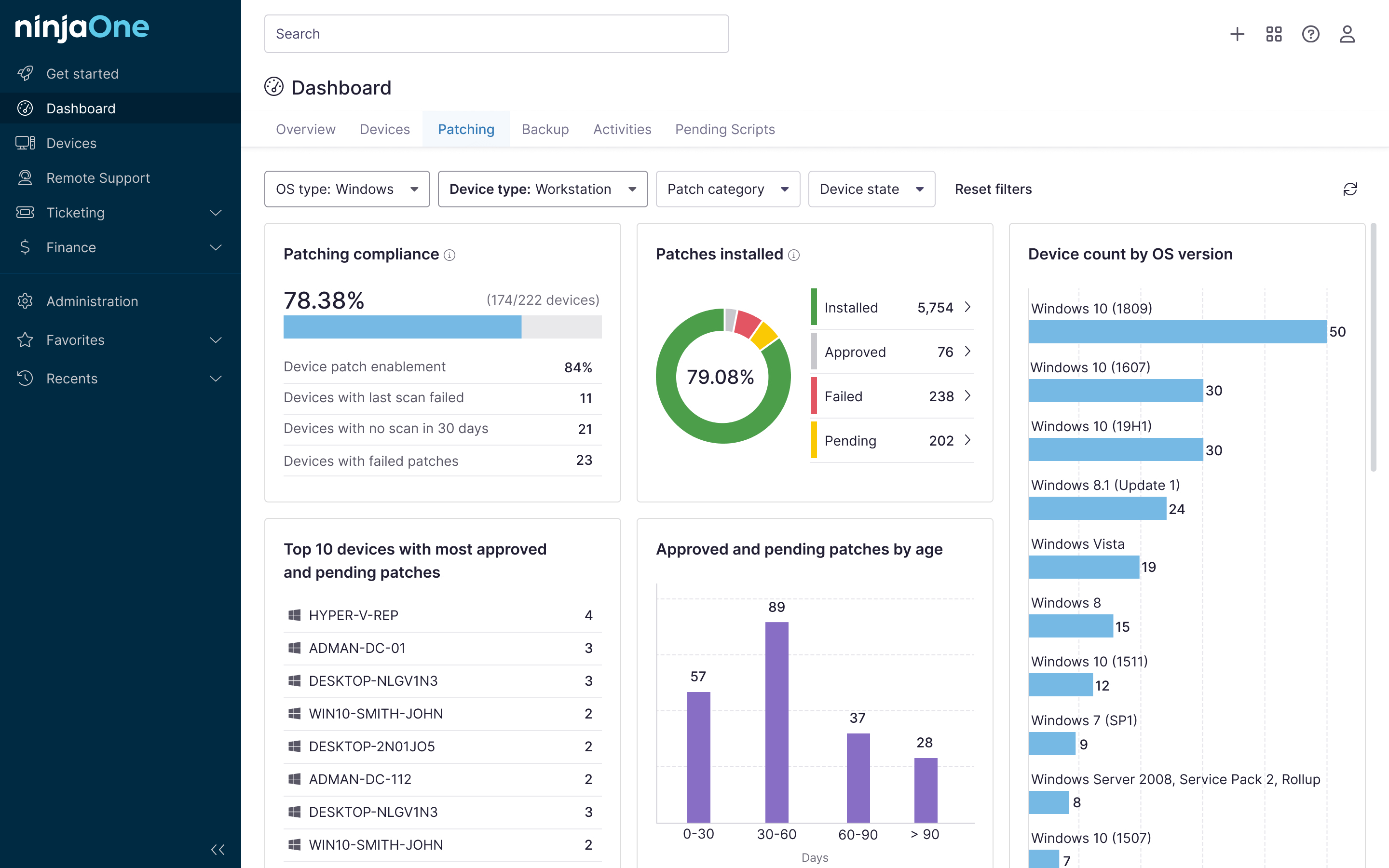

Monitor and protect

As touched upon in the last point, endpoint monitoring is a major pillar of downtime prevention. Not only do alerts give you real-time insight into any violated policies and resource contention, but they also offer a front-row seat to your overarching security. Firewalls and blacklists help stop attackers from breaking in, while AWS’ built-in Web Application Firewall (WAF) can alert you to any ongoing DDoS or attempted SQL injection attacks. These real-time notifications can then be directed to your email or phone, allowing for rapid preventative action.

The true cost of downtime is too high

Resilience is central to reducing the spiraling cost of downtime. NinjaOne supercharges enterprise IT services by proactively identifying endpoint issues and providing a platform for automatic remediation. Files can be restored in a variety of ways, from end-user self-service file recovery to web-based file restores – helping minimize technician workload at a time they’re most needed. From ticket to backup, Ninja supports every layer of downtime protection.