Key Points

- Shadow AI is the unmonitored use of AI tools that can create visibility gaps across the environment, so AI adoption must align with security and compliance policies.

- Unmanaged AI usage expands the attack surface by exposing sensitive data, particularly on platforms without clear processing and retention policies.

- Shadow AI introduces compliance challenges by obscuring data residency, undermining access/retention policies, and creating audit blind spots that can incur penalties.

- Blocking AI tools entirely usually fosters underground usage, reduced transparency, and increased friction between IT teams and end users.

- Effective AI governance requires clear usage policies, approved and vetted platforms, employee education, and continuous visibility into AI adoption patterns.

Artificial intelligence (AI) offers a wide array of services that streamline workflows and automate tasks, improving efficiency and productivity. AI adoption has been steady in modern workflows, but in many cases it’s happening faster than organizations can effectively manage.

The use of unapproved AI platforms outside an organization’s monitoring scope introduces shadow AI risk. Left unmanaged, it gradually creates security and operational gaps that can push environments out of compliance.

What is shadow AI and its hidden risks for organizations

Shadow AI, a subcategory of shadow IT, refers to the unauthorized use of AI platforms or tools within an organization. Since a large number of AI platforms are cloud-based and are easily accessible, users can sign up for an account within minutes, without ever informing administrators.

In many environments, users independently adopt AI tools to streamline workflows. Without proper oversight, these tools can quietly be incorporated within business operations, bypassing required vendor risk assessments and security reviews.

As shadow AI adoption increases, so does organizational risk. For instance, employees may enter classified company information into AI platforms without understanding how those platforms process data. This lack of oversight expands an environment’s attack surface, increasing the likelihood of data exposure.

What starts as an efficiency hack evolves into unmanaged risk, especially when administrators are unaware that these tools exist. Without clear governance policies for AI usage, shadow AI can spread across your environment before you recognize its impact.

Compliance risks introduced by shadow AI usage

Adherence to regulatory frameworks doesn’t just end when security controls are in place. Organizations are responsible for knowing where their protected data resides and how it’s processed, as compliance frameworks require visibility and control.

When shadow AI operates outside existing controls and monitoring, organizations risk noncompliance. Understanding the governance blind spots shadow AI creates is key to balancing AI usage and compliance.

Obscuring data residency

Many AI platforms are cloud-based, which gives them the flexibility to process data across various regions. Without a formal IT review, you have no visibility into where shadow AI tools send and physically store your data.

Shadow AI also obscures how long your data is retained and whether it is logged or stored for subsequent processing. Unclear data residency and transfer practices make it harder for organizations to demonstrate compliance with regulations that impose strict data handling requirements.

Undermining access and retention policies

Numerous compliance frameworks require organizations to determine who can access sensitive data and how long it can be retained. Handling data outside identity management systems and data lifecycle management controls weakens your governance strategy.

For instance, employees may input confidential data into AI platforms that don’t comply with your organization’s retention schedules or access controls. This makes it challenging to enforce consistent data handling standards across your organization.

Creating audit blind spots

Audits demonstrate your organization’s ability to comply with the regulatory frameworks that apply to your environment. When AI platforms are adopted without approval, you can’t ensure their monitoring and reporting capabilities meet compliance standards. An inability to prove due diligence can create audit gaps that surface only during regulatory reviews, increasing the risk of penalties and fines.

Why blocking AI tools is rarely an effective strategy

Shadow AI is a visibility problem, and solving it requires balancing adoption and monitoring to boost productivity without compromising IT compliance posture. However, a typical knee-jerk reaction when an organization first spots shadow AI is to prevent user access to unapproved tools entirely.

While this approach can reduce risk in the short term, a blanket ban on AI usage can create new challenges for IT teams.

Drives AI usage further underground

One of the main reasons employees use AI tools is the efficiency gains they provide. Restricting platform access without clear alternatives doesn’t reduce shadow AI; it only makes it less visible.

For example, employees may opt to use their personal devices or switch from corporate Wi-Fi to mobile networks. This pushes AI usage further outside the boundaries of existing monitoring frameworks.

Reduces transparency

If employees feel that AI usage in their workplace is taboo, they are less likely to disclose which tools they use and how they use them. This normalizes shadow AI in an environment where data sharing practices are kept secret, widening the gap between AI adoption and oversight.

Creates unnecessary friction between IT teams and employees

For IT teams, unauthorized usage of AI tools is a threat that can slowly compromise a managed environment’s security posture. On the flip side, users see AI platforms as productivity-boosting tools. When IT teams implement strict blocking guidelines, this difference in perspective can create tension.

Responsible shadow AI governance mitigates risks

Governance strategies shouldn’t focus on eliminating AI platform usage in an attempt to curb shadow AI. Instead, the objective should be to ensure adoption aligns with existing security and compliance guardrails.

Shadow AI risk cannot be eliminated entirely, because organizational needs and AI capabilities keep evolving. Managing AI adoption should be an ongoing effort that adapts as workflows evolve.

Establish clear AI usage policies

Without clear guidance, employees are left to determine for themselves what constitutes acceptable AI usage. Creating a formal AI usage policy reduces ambiguity and ensures users share a consistent understanding of the pitfalls associated with shadow AI.

A strong AI usage policy should include:

- Data classification guide: Define which data is allowed and prohibited from being entered into AI systems.

- Approval requirements: Specify the compliance and security requirements a platform must meet before it can be approved.

- Documentation and logging: Outline expectations for recording AI usage to preserve audit trails and strengthen documentation.

- Noncompliance penalties: Specify disciplinary actions or corrective measures if AI tools are used outside approved data handling standards.

- Usage rules: Clearly state whether public AI platforms are allowed or prohibited, including certain conditions where use is acceptable.

💡 Note: Policies should support and align with real-world workflows and use cases rather than being purely restrictive.
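One way to keep such a policy enforceable is to express its data classification and usage rules as data that tooling can check. The following is a minimal sketch, not a definitive implementation: the sensitivity tiers, the tool names, and the `is_use_allowed` helper are all hypothetical and would need to be replaced with your organization’s own classification scheme and approved tool list.

```python
from dataclasses import dataclass
from enum import IntEnum


class DataClass(IntEnum):
    """Hypothetical sensitivity tiers; a higher value means more sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


@dataclass(frozen=True)
class ToolPolicy:
    name: str
    approved: bool
    max_data_class: DataClass  # most sensitive tier the tool may receive


# Illustrative entries only; the tool names are placeholders.
POLICY = {
    "approved-assistant": ToolPolicy("approved-assistant", True, DataClass.CONFIDENTIAL),
    # A public platform allowed under a condition: public data only.
    "public-chatbot": ToolPolicy("public-chatbot", True, DataClass.PUBLIC),
}


def is_use_allowed(tool: str, data_class: DataClass) -> bool:
    """Return True only if the tool is approved and cleared for this tier."""
    policy = POLICY.get(tool)
    if policy is None or not policy.approved:
        return False  # unknown or unapproved tools are denied by default
    return data_class <= policy.max_data_class
```

Denying unknown tools by default mirrors the intent of the usage rules: anything that has not been reviewed is treated as unapproved until someone adds it to the policy.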

Provide approved and supported AI tools

To minimize shadow AI, IT teams should provide users with vetted AI solutions that serve as an alternative to unapproved tools. Before deployment, it’s important that AI tools undergo:

- Security reviews: Close evaluation of vendor security controls and incident response practices before approval.

- Data residency validation: Verify where data is stored and processed to ensure alignment with data location compliance.

- Data handling review: Review data processing agreements to clarify retention policies and model training usage of entered data.

- Integration with identity and access controls: Check if the AI platform supports centralized authentication and role-based access.

By offering end users a supported alternative, you disincentivize the usage of unapproved AI platforms. In addition, this helps you maintain visibility and ensure compliance without hampering your organization’s productivity.
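The four pre-deployment reviews can be tracked as a simple checklist record so that a tool is only marked approved once every step has passed. This is an illustrative sketch under stated assumptions: the `VettingRecord` fields and helper names are hypothetical and simply mirror the review steps listed above.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class VettingRecord:
    """One candidate tool; each flag mirrors a review step from the list."""
    tool: str
    security_review_passed: bool
    data_residency_verified: bool
    data_handling_reviewed: bool
    supports_sso_and_rbac: bool


def outstanding_checks(record: VettingRecord) -> list[str]:
    """Return the names of review steps that have not passed yet."""
    return [
        f.name
        for f in fields(record)
        if isinstance(getattr(record, f.name), bool) and not getattr(record, f.name)
    ]


def is_approved(record: VettingRecord) -> bool:
    """A tool is approved only when no review step is outstanding."""
    return not outstanding_checks(record)
```

Returning the list of outstanding steps, rather than a bare yes or no, gives reviewers and requesters a concrete view of what still blocks approval.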

Ongoing training regarding acceptable data use

Education plays an important role in transforming AI governance into a shared responsibility between administrators and end users. Awareness regarding data sensitivity and AI-related exposure risk helps employees understand and comply with AI restrictions.

Continuous visibility into AI adoption patterns

AI platforms are evolving rapidly, and this pace of change influences how end users work with them. For that reason, AI governance shouldn’t be a one-time effort; it should adapt as new tools and use cases surface.

Administrators should monitor which AI tools are gaining traction within their environment, and periodically assess whether new usage patterns introduce additional security or compliance concerns.
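As a rough illustration of what this monitoring could look like, the sketch below counts distinct users per AI-related domain and surfaces the ones that are not on an allowlist. It assumes that `(user, domain)` pairs have already been extracted from proxy or DNS logs; the domain names, the watchlist, and the allowlist are placeholders, not real services.

```python
from collections import defaultdict

# Hypothetical watchlist and allowlist; real lists would come from your own inventory.
KNOWN_AI_DOMAINS = {"ai-tool-a.example", "ai-tool-b.example", "ai-tool-c.example"}
APPROVED_AI_DOMAINS = {"ai-tool-a.example"}


def summarize_ai_usage(records):
    """Map each observed AI domain to its set of distinct users.

    `records` is an iterable of (user, domain) pairs, assumed to be
    pre-extracted from proxy or DNS logs.
    """
    users_by_domain = defaultdict(set)
    for user, domain in records:
        domain = domain.lower()  # hostnames are case-insensitive
        if domain in KNOWN_AI_DOMAINS:
            users_by_domain[domain].add(user)
    return dict(users_by_domain)


def unapproved_usage(records):
    """Return {domain: distinct_user_count} for unapproved AI domains, busiest first."""
    usage = summarize_ai_usage(records)
    counts = {d: len(u) for d, u in usage.items() if d not in APPROVED_AI_DOMAINS}
    return dict(sorted(counts.items(), key=lambda kv: kv[1], reverse=True))
```

Counting distinct users rather than raw requests highlights tools that are gaining traction across teams, which is usually the stronger signal that a tool deserves a formal review.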

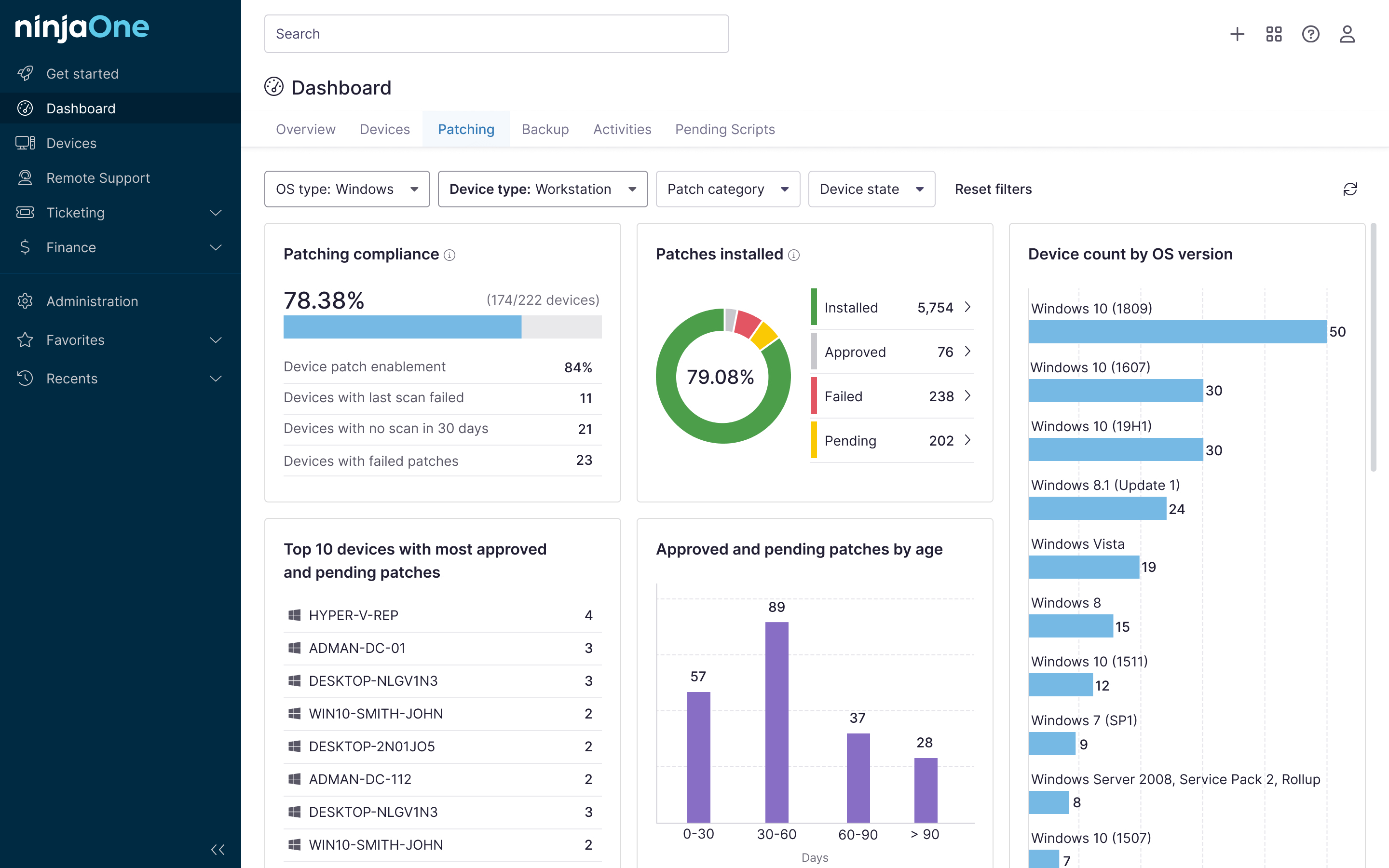

Simplify shadow AI risk mitigation and governance with NinjaOne

NinjaOne IT Asset Management helps organizations identify installed AI tools and applications across the environment, providing visibility into potential unauthorized usage. NinjaOne helps IT teams define approved AI tools and enforce governance standards through its policy enforcement capabilities, helping admins deploy approved AI solutions consistently across the environment.

Balance adoption and visibility to minimize shadow AI risk

AI’s rapid growth has produced a range of services that boost productivity across everyday workflows. As AI capabilities expand, these tools become a convenient way for end users to meet workplace demands quickly and efficiently.

The fast-paced adoption of AI tools can outpace monitoring and approval processes, causing employees to use unapproved solutions. By establishing acceptable usage policies, providing approved alternatives, educating employees, and maintaining ongoing visibility, organizations are better positioned to reduce risk without stifling innovation.
