Perfect security policies don’t exist. That’s not because they are difficult to write, but because they poorly reflect reality. There’s a big difference between a written policy and an enforceable one.

Most policies are not designed to withstand the fast-paced, changing landscape that we find ourselves in. They are written with good intentions, but they break down because they assume “ideal” states and perfect scenarios.

Why good policies fail in practice

Back when I was a sysadmin and in charge of patch management, I fell into this exact trap. I had implemented what seemed like a straightforward policy: all critical patches must be deployed within 30 days of release. It was a clear metric, easy to measure, and most importantly it aligned with the regulatory and compliance requirements that we needed to satisfy.

On paper, it was great and exactly what good security should look like. Or was it? Well, in practice, it fell apart almost immediately.

Since I was also part of the team responsible for tracking and installing patches across our endpoints and servers, I had inadvertently consumed all my free time. By the time I had identified what needed patching and when, tested the patches within IT (we were always the guinea pigs), scheduled maintenance windows for the rest of the organization, and deployed the patches, almost 30 days had already passed. Then the cycle would repeat, leaving me with no time to do anything other than babysit patches.

To add insult to injury, we would have a penetration test performed, and its findings were very different from those of our vulnerability scans. The pentesters urged us to fix what they had found, but some of those findings were not rated “Critical.” As a result, those high-risk findings weren’t remediated until some time after the Critical ones.

This wasn’t a failure of intent. I wasn’t wrong to want timely patching. The policy failed because it didn’t account for the actual environment, the true risk to the organization, or competing priorities. This is where most security policies break down. They’re designed for perfect conditions that don’t exist in real IT environments, and they are often devoid of real risk-based decisions. Ultimately, policies like this are not followed. They are circumvented or disregarded altogether.

It’s not just homegrown policies that fall into this trap. Even widely accepted industry standards can fail when applied without understanding the specifics of your organization and environment.

When “industry standard” stops being useful

Industry standards exist for good reasons. They are based on lessons learned from security incidents and data breaches, and they represent collective expertise from security professionals. They provide a baseline that works for most organizations most of the time. The problem is that “most organizations most of the time” still leaves a lot of room for failure.

An IT team at a manufacturing company learned this the hard way when they decided to harden their Windows servers using CIS Level 1 benchmarks. CIS Benchmarks are great starting points. They are widely respected, regularly updated, and recommended by auditors and security professionals. This was not the problem.

The problem was thinking these could be consistently applied to every system in their environment. Their production floor control systems are not typical end-user systems. They have a specific purpose and specific requirements that differ from those of environments like accounting, for example.

The benchmarks disabled several legacy protocols and services that their 15-year-old industrial equipment relied on to communicate with the server. Unfortunately, this caused production to be down for most of the day (6 hours) while they frantically rolled back the changes. The financial impact was not insignificant. It was not enough to put them out of business, but it caused quite a bit of concern and chaos within the IT and manufacturing teams.

Applying CIS benchmarks was not the wrong approach. They are designed for modern IT environments with current operating systems and updated equipment. But they are a starting point, and not every setting within a benchmark is ideal for an operational technology environment where upgrading is much more difficult and downtime can cause serious financial impact.

This is where blindly following “industry standards” can hurt you. These benchmarks are built on assumptions about your environment, your resources, and your operations. When those assumptions don’t match your actual environment, whether because you’re running legacy systems, operating in a specialized industry, or working with other constraints that the benchmarks don’t account for, blindly following best practices can create more problems than it solves.

Benchmarks are a useful tool and should be used. However, too often policies are written to mandate the use of these benchmarks to check a compliance box, without first understanding the specific requirements of your environment.

How to design policies that work in reality

After my original 30-day patching policy failed, my team and I redesigned it around outcomes instead of timelines. Our new policy was focused on risk. There were many addendums, but this one should drive the point home the most:

“Critical vulnerabilities affecting internet-facing assets with evidence of active exploitation must be mitigated within 72 hours. Critical vulnerabilities with available proof-of-concept code must be patched within 7 days. Mitigation may include patching, compensating controls, or temporary service isolation. All actions and exceptions must be documented with risk acceptance from the CISO.”

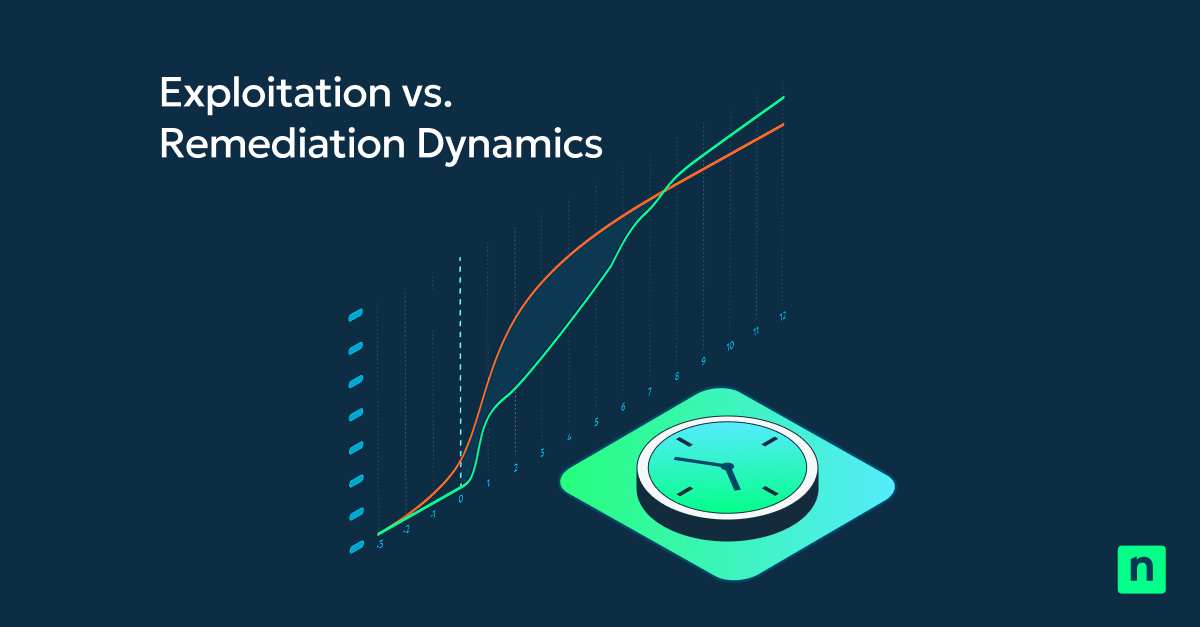

The real change wasn’t just the timelines. We prioritized vulnerabilities and patching based on actual exposure: whether systems were internet-facing, if exploits were available in the wild, and what data those systems touched.

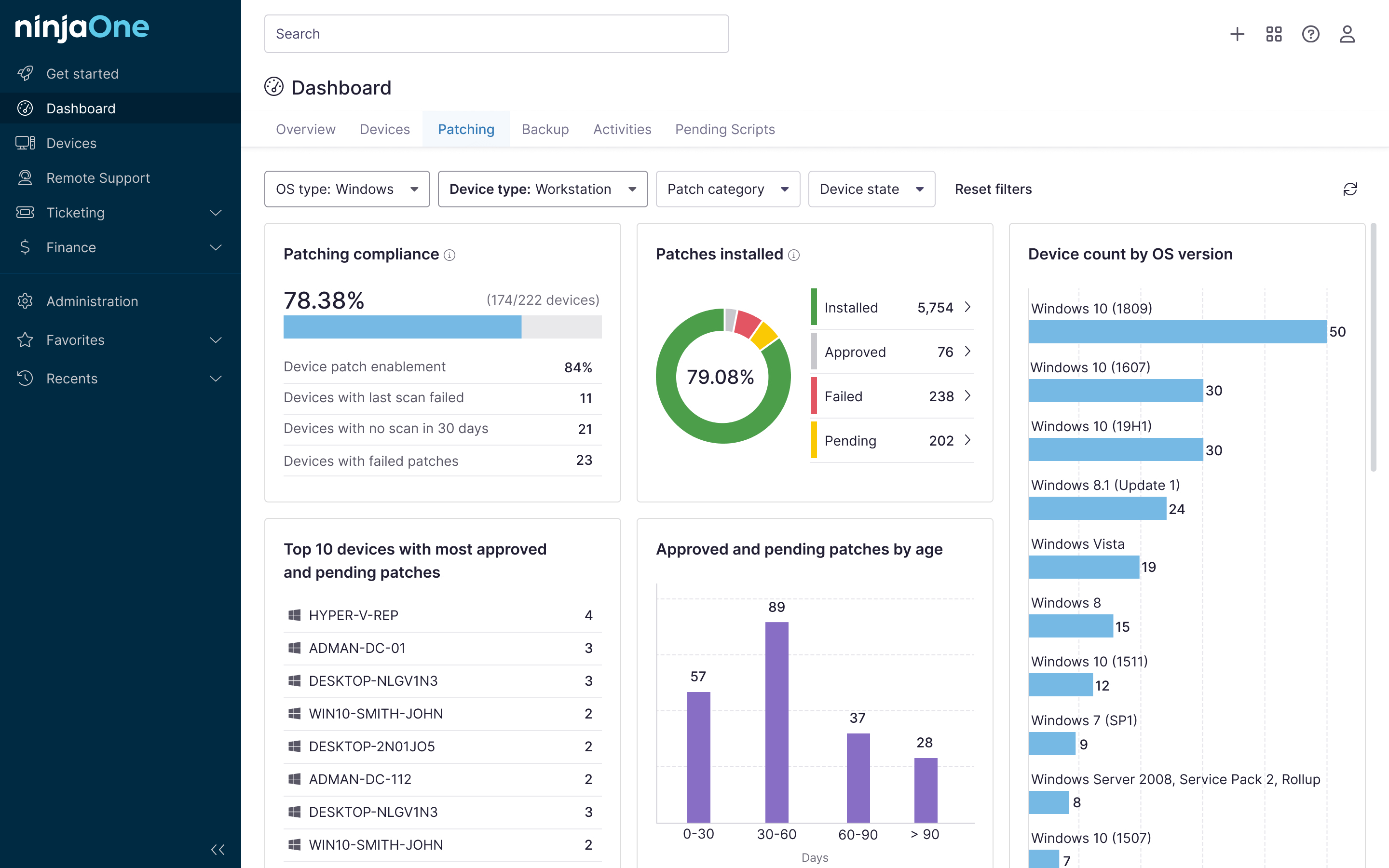
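
To make the prioritization concrete, here is a minimal Python sketch of how the quoted clause could be turned into deadlines. It is an illustration, not the tooling we actually used. The `Finding` fields and function names are hypothetical, and measuring the 72-hour and 7-day windows from the detection time is an assumption, since the clause doesn’t say when the clock starts.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class Finding:
    # Hypothetical fields; map them to whatever your scanner reports.
    asset: str
    severity: str            # e.g. "critical", "high"
    internet_facing: bool
    actively_exploited: bool
    poc_available: bool
    detected_at: datetime    # assumption: the clock starts at detection


def mitigation_deadline(f: Finding) -> Optional[datetime]:
    """Return the deadline from the quoted clause, or None if it doesn't apply."""
    if f.severity != "critical":
        return None  # handled by other addendums, which are not shown here
    if f.internet_facing and f.actively_exploited:
        return f.detected_at + timedelta(hours=72)
    if f.poc_available:
        return f.detected_at + timedelta(days=7)
    return None


def prioritize(findings: List[Finding]) -> List[Finding]:
    """Sort by earliest deadline; findings the clause doesn't cover go last."""
    def key(f: Finding):
        deadline = mitigation_deadline(f)
        return (deadline is None, deadline or datetime.max)
    return sorted(findings, key=key)
```

Returning `None` for anything the clause doesn’t cover is deliberate: the sketch refuses to guess at timelines that the rest of the policy would define.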

But just as importantly, we understood that visibility is critically important for IT. We deployed automated vulnerability scanning that fed directly into our patch management dashboard. When systems fell out of compliance and were missing critical patches, we knew immediately. We could now track our metrics over time to see how well (or not) we were performing, and what we needed to improve on.
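
As a rough illustration of the kind of metric a dashboard like this can track, the sketch below computes an on-time rate and lists overdue items. The record shape, a `(deadline, mitigated_at)` pair, and the function names are assumptions made for this example, not the format of any particular scanner or patch management product.

```python
from datetime import datetime
from typing import List, Optional, Tuple

# Hypothetical record shape: (deadline, mitigated_at), where mitigated_at
# is None while the finding is still open.
Record = Tuple[datetime, Optional[datetime]]


def overdue(records: List[Record], now: datetime) -> List[Record]:
    """Open findings whose deadline has already passed."""
    return [r for r in records if r[1] is None and now > r[0]]


def compliance_rate(records: List[Record], now: datetime) -> float:
    """Share of findings mitigated on time or still open within their deadline."""
    if not records:
        return 1.0
    compliant = 0
    for deadline, mitigated_at in records:
        if mitigated_at is not None:
            compliant += mitigated_at <= deadline   # closed on time
        else:
            compliant += now <= deadline            # open, not yet overdue
    return compliant / len(records)
```

Counting open findings that are still inside their window as compliant is a design choice. A stricter metric would count only closed findings.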

We also acknowledged that sometimes patching within the timeline wasn’t possible, for a variety of reasons. In those cases, we needed compensating controls, we needed documentation, and we needed a sign-off by leadership.
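
One way to keep exceptions honest is to treat them as records that are incomplete until all three pieces are present. The following is a minimal sketch under that assumption. The `PatchException` class and its field names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PatchException:
    # Hypothetical fields for illustration only.
    asset: str
    reason: str                                   # the documentation
    compensating_controls: List[str] = field(default_factory=list)
    approved_by: str = ""                         # the leadership sign-off

    def missing_items(self) -> List[str]:
        """List what still has to be supplied before the exception is valid."""
        missing = []
        if not self.reason.strip():
            missing.append("documented reason")
        if not self.compensating_controls:
            missing.append("compensating controls")
        if not self.approved_by.strip():
            missing.append("leadership sign-off")
        return missing
```

An exception with an empty `missing_items()` result is complete. Anything else is still an unapproved gap and should be reported as one.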

In the case of the CIS Benchmarks policy, the lesson was similar, but the IT team came at it from a different angle. They didn’t abandon the benchmarks completely or remove them from their policy. Instead, they updated the policy to require CIS hardening assessments for each type of system in their organization, to establish which benchmark settings would and would not break production components. The IT team documented which settings applied to each system type, which conflicted with the requirements for that system type, and any compensating controls that were used. The manufacturing systems got their own hardening baseline, as did end-user systems and servers.
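
A per-system-type baseline like this can be captured in a small data structure. The sketch below is only an illustration: the setting names are hypothetical placeholders rather than real CIS recommendation IDs, and the compensating control shown is an invented example.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical setting names, not real CIS recommendation identifiers.
BENCHMARK = [
    "enforce_password_length",
    "disable_legacy_protocol",
    "enable_host_firewall",
]


@dataclass
class Baseline:
    applied: List[str]
    # Excluded setting -> documented compensating control.
    excluded: Dict[str, str] = field(default_factory=dict)


BASELINES = {
    "end_user": Baseline(applied=list(BENCHMARK)),
    "manufacturing": Baseline(
        applied=["enforce_password_length", "enable_host_firewall"],
        excluded={"disable_legacy_protocol": "dedicated network segment"},
    ),
}


def undocumented_gaps(benchmark: List[str], baseline: Baseline) -> List[str]:
    """Settings that are neither applied nor excluded with a documented control."""
    covered = set(baseline.applied)
    covered.update(s for s, control in baseline.excluded.items() if control.strip())
    return [s for s in benchmark if s not in covered]
```

The point of `undocumented_gaps` is that skipping a setting is acceptable only when the skip is written down alongside a compensating control. Anything it returns is a gap nobody has made a decision about.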

Where to go from here

As we know, just about nothing in IT/Security is ideal. Innovation and technological advancement will always outpace security.

Our job as IT/Security professionals is to make the most of what we have available to us: to design systems, processes, and policies that are secure enough, and that allow users, departments, and business functions to operate as safely as possible without hindering those operations too drastically.

We have to accept that perfect security doesn’t exist and understand that policies that demand perfection will fail.

Security works best when it’s part of how IT operates every day, not something that’s been bolted on afterward. Security works best when it’s the most efficient and ideal option, and when it stays out of the way as much as possible.

If your team has to choose between getting work done and following a security policy, they’ll choose getting work done every single time. Design your policies so those two things are the same choice.