Key Points

- Normalize Your Firewall Logs: Create a consistent schema across all vendors so your searches, dashboards, and alerts work everywhere.

- Baseline Normal Behavior: Measure typical allow and deny activity by zone, service, and tenant to identify true anomalies faster.

- Focus on High-Signal Detections: Prioritize precise, context-rich alerts that point to real changes or threats instead of generating noise.

- Correlate Across Data Sources: Link firewall events with host telemetry, user identity, and configuration changes to reveal the full story.

- Prove Outcomes Regularly: Deliver monthly evidence packets summarizing coverage, accuracy, and improvements to sustain stakeholder confidence.

Most IT teams collect thousands of firewall event logs every day, but few turn that data into real insight. Between vendor quirks, massive log volumes, and alerts that lack useful context, it’s easy for valuable information to get buried.

This guide simplifies firewall event analysis into clear, practical steps that anyone can follow. You’ll learn how to normalize your logs, build meaningful baselines, and separate the signals from the noise so you can catch misconfigurations and threats before they spread.

How this guide works

Firewall analysis isn’t a single, linear process. It’s more like tuning a living system. You’ll collect logs, define schemas, set baselines, and refine your alerts over time.

That’s why this guide uses 10 practical steps rather than strict stages. You can follow them in order when starting out, or jump ahead to what fits your maturity level. Each step builds toward a program that’s consistent, accurate, and audit-ready, across any vendor or tenant.

Prerequisites

Before diving into firewall event analysis, take a moment to prepare your environment. Having the right structure in place will make the process smoother and ensure your insights are accurate.

You’ll need:

- An inventory of firewalls, zones, and logging capabilities for each tenant or network segment.

- A centralized log pipeline or SIEM with lifecycle policies and basic enrichment.

- A minimal, agreed-upon event schema for all firewall logs.

- An evidence workspace for configuration diffs, dashboards, alert cases, and monthly reports.

Step 1: Define a minimal cross-vendor schema

Think of your schema as a universal translator for firewall logs. Different vendors and products, such as Fortinet, Cisco, Palo Alto Networks, and Windows Defender Firewall, use their own field names and formats. Without a shared schema, every search and dashboard has to be rebuilt per vendor, so nothing scales.

Suggested actions:

- Define stable keys: timestamp, device, zone_in, zone_out, src_ip, dst_ip, src_port, dst_port, proto, action, rule_id, session_id, bytes, and duration.

- Map vendor-specific fields to these keys during ingestion.

- Reject or reroute malformed events and keep your mapping table in version control.

This normalization step enables all subsequent analysis to be reusable and comparable, especially across multi-tenant environments.
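As a rough illustration of the mapping step, the sketch below renames vendor fields to a subset of the schema keys and rejects events that are missing required fields. The vendor names (`vendor_a`, `vendor_b`) and their field names are hypothetical placeholders, not real vendor formats; your own mapping table would come from each product's log reference.

```python
from typing import Any, Optional

# Hypothetical vendor-to-schema field maps. Real field names differ by
# product and firmware version, so treat these as placeholders.
FIELD_MAPS: dict[str, dict[str, str]] = {
    "vendor_a": {"srcip": "src_ip", "dstip": "dst_ip", "dstport": "dst_port",
                 "act": "action", "policyid": "rule_id"},
    "vendor_b": {"source": "src_ip", "destination": "dst_ip", "dport": "dst_port",
                 "disposition": "action", "rule": "rule_id"},
}

# Keys an event must carry to be useful downstream (an assumption for this sketch).
REQUIRED_KEYS = {"src_ip", "dst_ip", "dst_port", "action"}


def normalize(vendor: str, raw: dict[str, Any]) -> Optional[dict[str, Any]]:
    """Rename vendor fields to schema keys; return None for malformed events."""
    mapping = FIELD_MAPS.get(vendor)
    if mapping is None:
        return None
    event = {schema_key: raw[vendor_key]
             for vendor_key, schema_key in mapping.items() if vendor_key in raw}
    if not REQUIRED_KEYS.issubset(event):
        return None  # the caller can reroute these to a dead-letter queue
    try:
        event["dst_port"] = int(event["dst_port"])
    except (TypeError, ValueError):
        return None
    event["action"] = str(event["action"]).lower()
    return event
```

Returning `None` instead of raising keeps the ingestion loop simple: anything that fails to normalize can be counted and rerouted, which also feeds a parse success metric.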

Step 2: Collect the right event classes

Too many teams log only traffic allows or denies, missing the operational story in between. To truly understand behavior, collect the full picture.

We suggest including:

- Allow and deny traffic logs

- System change logs (firmware, rules, policy updates)

- NAT translations

- Rule creation, modification, and deletion events

💡Tip: Capture summaries of drops from flood protection in a separate stream to control storage cost. It’s better to summarize flood activity than drown your SIEM in redundant events.

Step 3: Enrich for faster triage

Raw logs tell you what happened; enrichment adds the who and where that help explain why. Add context so your analysts can identify outliers without opening a dozen dashboards.

Add attributes such as:

- GeoIP and ASN lookups for external IPs

- Internal asset tags or ownership data

- User identity and change ticket IDs

- Normalized service names (e.g., map port 443 to HTTPS)
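A minimal enrichment sketch, assuming events already use the schema keys from Step 1. The port map and the `ASSET_OWNERS` inventory are illustrative stand-ins; GeoIP and ASN lookups are left out because they depend on whichever database you license. Note that a port-to-service map reflects convention only, since any service can run on any port.

```python
import ipaddress

# Small illustrative port map; extend it from your own service catalog.
SERVICE_NAMES = {22: "SSH", 53: "DNS", 80: "HTTP", 443: "HTTPS", 3389: "RDP"}

# Hypothetical asset inventory keyed by IP address.
ASSET_OWNERS = {"10.0.0.5": "finance-team"}


def enrich(event: dict) -> dict:
    """Return a copy of the event with service, direction, and owner context."""
    enriched = dict(event)
    enriched["service"] = SERVICE_NAMES.get(event["dst_port"], "unknown")
    # is_private is True for RFC 1918 and other non-public address ranges.
    enriched["dst_is_internal"] = ipaddress.ip_address(event["dst_ip"]).is_private
    enriched["src_owner"] = ASSET_OWNERS.get(event["src_ip"], "unassigned")
    return enriched
```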

Step 4: Establish baselines by zone and service

Once your logs are normalized and enriched, learn what “normal” looks like.

Start by measuring:

- Top talkers and destinations

- Common services per zone (e.g., DNS from LAN to WAN)

- Typical allow-to-deny ratios

- Hourly and daily patterns

💡Tip: Save baseline snapshots monthly so you can compare before and after configuration changes or incidents. Over time, these baselines reveal deviations that highlight drift, attacks, or misconfigurations before they escalate.
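One way to compute a simple baseline is to count allows and denies per zone pair and service. The sketch below assumes each event carries `zone_in`, `zone_out`, `service`, and an `action` of either `allow` or `deny`; the function name and output shape are illustrative choices.

```python
from collections import Counter, defaultdict


def build_baseline(events: list[dict]) -> dict[tuple[str, str, str], dict[str, float]]:
    """Summarize allow/deny counts and deny ratio per (zone_in, zone_out, service)."""
    counts: dict[tuple, Counter] = defaultdict(Counter)
    for e in events:
        key = (e["zone_in"], e["zone_out"], e["service"])
        counts[key][e["action"]] += 1
    baseline = {}
    for key, c in counts.items():
        total = c["allow"] + c["deny"]
        baseline[key] = {
            "allow": c["allow"],
            "deny": c["deny"],
            "deny_ratio": c["deny"] / total if total else 0.0,
        }
    return baseline
```

Running this over one day or one hour of events at a time gives you a series of snapshots that can be stored and compared month to month.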

Step 5: Build high-signal detections

Focus on signals that truly indicate change or risk.

Examples include:

- Sudden deny spikes for a service that’s usually allowed

- Repeated denies followed by a new allow on the same rule

- Outbound allows to rare or risky ASNs

- Management-port allows from untrusted zones

- Large sustained egress bursts

Each alert should link to a short runbook and example query. Aim for precision over quantity: A few high-fidelity alerts beat hundreds of noisy ones.
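As one example of a detection built on a baseline, the sketch below flags a deny spike by comparing the current count with the mean and standard deviation of earlier periods. The threshold of three standard deviations and the minimum count of 20 are assumptions to tune, not recommended values.

```python
from statistics import mean, pstdev


def is_deny_spike(history: list[int], current: int,
                  threshold: float = 3.0, min_count: int = 20) -> bool:
    """Flag `current` when it exceeds the historical mean by `threshold` std devs."""
    # Too little history or a tiny absolute count is not worth an alert.
    if len(history) < 2 or current < min_count:
        return False
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        return current > mu * 2  # flat history: fall back to a simple multiple
    return (current - mu) / sigma > threshold
```

The `min_count` floor is what keeps this high-signal: a jump from one deny to five is a large relative change but rarely worth an analyst's time.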

Step 6: Detect policy drift and misconfiguration

Early drift detection keeps your rule base clean and prevents accidental exposure.

To detect drift:

- Track rule hit counts and zone assignments.

- Flag any rule that grows in scope or never gets used.

- Alert when a new or reordered allow rule starts matching traffic ahead of a deny rule that was expected to block it (policy inversion).
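The drift checks above can be sketched as a comparison between two rule snapshots plus a hit-count table. The snapshot shape (`sources`, `zone_in`, `zone_out`) is a hypothetical export format, and the scope check only notices added source entries; it would not catch a widened CIDR such as a /24 replaced by a /16.

```python
def find_drift(previous: dict[str, dict], current: dict[str, dict],
               hits: dict[str, int]) -> list[tuple[str, str]]:
    """Compare two rule snapshots and hit counts; return (rule_id, finding) pairs."""
    findings = []
    for rule_id, rule in current.items():
        old = previous.get(rule_id)
        if old is None:
            findings.append((rule_id, "new rule"))
        else:
            # Strict superset: every old source is still present, plus new ones.
            if set(rule["sources"]) > set(old["sources"]):
                findings.append((rule_id, "source scope widened"))
            if (rule["zone_in"], rule["zone_out"]) != (old["zone_in"], old["zone_out"]):
                findings.append((rule_id, "zone assignment changed"))
        if hits.get(rule_id, 0) == 0:
            findings.append((rule_id, "no hits in period"))
    return findings
```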

💡Tip: Read Tufin’s Best Practices for Firewall Logging for guidance on rule hygiene and change tracking.

Step 7: Control noise and storage cost

Firewall logs are notoriously noisy. Conditional logging and smart lifecycle management keep your SIEM healthy.

Best practices include:

- Enable conditional logging only for dynamic or risky traffic.

- Aggregate repetitive floods or scans into summaries.

- Rotate and compress old indexes.

- Implement warm and cold storage tiers with automated retention.

- Track parse success and ingestion latency as first-class metrics.
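To illustrate the aggregation idea, the sketch below collapses repeated denies from the same source to the same port into one summary row per time bucket. It assumes `timestamp` is in epoch seconds and uses a 60-second bucket, both of which are choices for this example.

```python
from collections import Counter


def summarize_denies(events: list[dict], bucket_seconds: int = 60) -> list[dict]:
    """Collapse deny events sharing source, port, and time bucket into one row."""
    counts: Counter = Counter()
    for e in events:
        if e["action"] != "deny":
            continue
        # Round the timestamp down to the start of its bucket.
        bucket = int(e["timestamp"]) // bucket_seconds * bucket_seconds
        counts[(bucket, e["src_ip"], e["dst_port"])] += 1
    return [
        {"bucket_start": b, "src_ip": src, "dst_port": port, "count": n}
        for (b, src, port), n in sorted(counts.items())
    ]
```

A port scan that produces thousands of identical deny lines becomes a handful of rows, while the count preserves the signal that a scan happened.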

Step 8: Correlate with hosts, identity, and change

Firewall events make more sense when seen alongside host telemetry and identity data.

Correlate connections with:

- Endpoint logs (like Windows Defender or EDR)

- Authentication activity (Active Directory, Microsoft Entra ID, formerly Azure AD)

- Recent configuration or change tickets
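For the change-ticket part of this correlation, a time-window lookup is often enough to start with. The sketch below assumes change records are dictionaries with `device` and `time` keys and uses a 24-hour lookback; both the record shape and the window are assumptions.

```python
from datetime import datetime, timedelta


def recent_changes(event_time: datetime, device: str, changes: list[dict],
                   window: timedelta = timedelta(hours=24)) -> list[dict]:
    """Return change records on the same device within `window` before the event."""
    return [
        c for c in changes
        if c["device"] == device
        and timedelta(0) <= event_time - c["time"] <= window
    ]
```

Attaching the matching ticket IDs to an alert lets an analyst see right away whether a new allow lines up with an approved change.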

Step 9: Manage exceptions with expiry dates

Every environment needs temporary allows or suppressed alerts. The trick is to make them expire automatically.

When approving exceptions:

- Record the owner, reason, and compensating controls.

- Assign an end date and review open exceptions weekly.

- Require a second approver for long-term exceptions.

This keeps temporary workarounds from becoming permanent blind spots.
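A weekly review like this can be partly automated. The sketch below sorts exception records into expired, expiring soon, and incomplete groups; the record fields (`id`, `owner`, `reason`, `expires`) and the seven-day warning window are assumptions for illustration.

```python
from datetime import date


def review_exceptions(exceptions: list[dict], today: date,
                      warn_days: int = 7) -> dict[str, list[str]]:
    """Sort exception IDs into expired, expiring-soon, and incomplete groups."""
    report: dict[str, list[str]] = {"expired": [], "expiring_soon": [], "incomplete": []}
    for ex in exceptions:
        # An exception without an owner, reason, or end date cannot be reviewed.
        if not all(ex.get(k) for k in ("owner", "reason", "expires")):
            report["incomplete"].append(ex["id"])
            continue
        days_left = (ex["expires"] - today).days
        if days_left < 0:
            report["expired"].append(ex["id"])
        elif days_left <= warn_days:
            report["expiring_soon"].append(ex["id"])
    return report
```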

Step 10: Publish a monthly evidence packet

Finally, close the loop by proving control health to stakeholders and auditors.

A one-page monthly packet per tenant should include:

- Coverage and parse success rates

- Top alerts with precision notes

- Policy drift findings and outcomes

- Storage usage vs. budget

- Two sample investigations with artifacts and resolutions

This habit demonstrates measurable improvement and readiness for audits or QBRs.

Best practices summary table

| Practice | Purpose | How it helps you |
| --- | --- | --- |
| Use a common schema across vendors | Standardize log fields and formats so data from different firewalls can be compared directly. | Makes your firewall event analysis consistent, letting you reuse dashboards, detections, and queries instead of reinventing them for every platform. |
| Baseline traffic by zone and service | Understand what “normal” looks like in each network segment and time window. | Reduces false positives by helping you tell expected patterns from real anomalies, so you can focus on meaningful security events. |
| Track rule drift and hit counts | Detect when rules expand, change zones, or stop being used. | Prevents configuration bloat and accidental exposure, keeping your policies aligned with your actual network behavior. |
| Correlate with hosts and identity data | Combine firewall logs with endpoint and user information. | Reveals who or what triggered an event, helping analysts investigate faster and avoid chasing harmless noise. |
| Deliver a monthly evidence packet | Summarize performance and findings in a single, auditable view. | Demonstrates continuous improvement, builds stakeholder trust, and simplifies reporting for audits or QBRs. |

Automation touchpoint example

By setting up small recurring checks, you can spot drift early, control data growth, and show measurable improvements without constant manual work.

Here’s how to think about it in practice:

Nightly tasks:

Every night, your automation should do a quick health check on your firewall logs.

- Confirm that log parsing is working correctly. If logs aren’t being parsed, your analysis can’t be trusted.

- Check ingestion latency (how long logs take to reach your SIEM).

- Track deny/allow ratios by zone. Sudden spikes can signal attacks or misconfigurations.

- Compare rule hit counts from the previous day to spot sudden surges or drops.

If something looks off, have your system open a task or alert automatically.
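A nightly check along these lines might look like the sketch below, which evaluates parse success and ingestion latency and returns a list of problems to turn into tasks or alerts. The 99% parse rate and 300-second p95 latency thresholds are placeholder assumptions; how the counts are gathered depends on your SIEM.

```python
import math


def nightly_health(received: int, parsed: int, latencies_s: list[float],
                   min_parse_rate: float = 0.99,
                   max_p95_latency_s: float = 300.0) -> list[str]:
    """Return human-readable problems; an empty list means the checks passed."""
    problems = []
    parse_rate = parsed / received if received else 0.0
    if parse_rate < min_parse_rate:
        problems.append(f"parse success {parse_rate:.1%} below {min_parse_rate:.0%}")
    if latencies_s:
        ordered = sorted(latencies_s)
        # Nearest-rank 95th percentile of ingestion latency.
        p95 = ordered[max(0, math.ceil(0.95 * len(ordered)) - 1)]
        if p95 > max_p95_latency_s:
            problems.append(f"p95 ingestion latency {p95:.0f}s above {max_p95_latency_s:.0f}s")
    return problems
```

Treating zero received events as a failed parse check is deliberate here: a silent pipeline is usually a bigger problem than a noisy one.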

Weekly tasks:

Once a week, automation should help you zoom out a bit and ensure the system is still balanced.

- Take a snapshot of your baselines. This preserves a “before” picture for long-term comparison.

- Review suppressed alerts and temporary exceptions to see if they’re still justified.

- Validate that your enrichment sources (GeoIP, asset tags, etc.) are still updating correctly.

Monthly tasks:

At the end of the month, it’s time to turn all that automated monitoring into something your team (and management) can actually use.

- Compile key charts and summaries (coverage, top alerts, storage use).

- Highlight policy drift findings, e.g., which rules changed and why.

- Include at least two resolved alert timelines to show how detection led to real action.

- Package it all into a one-page evidence packet for leadership or clients.

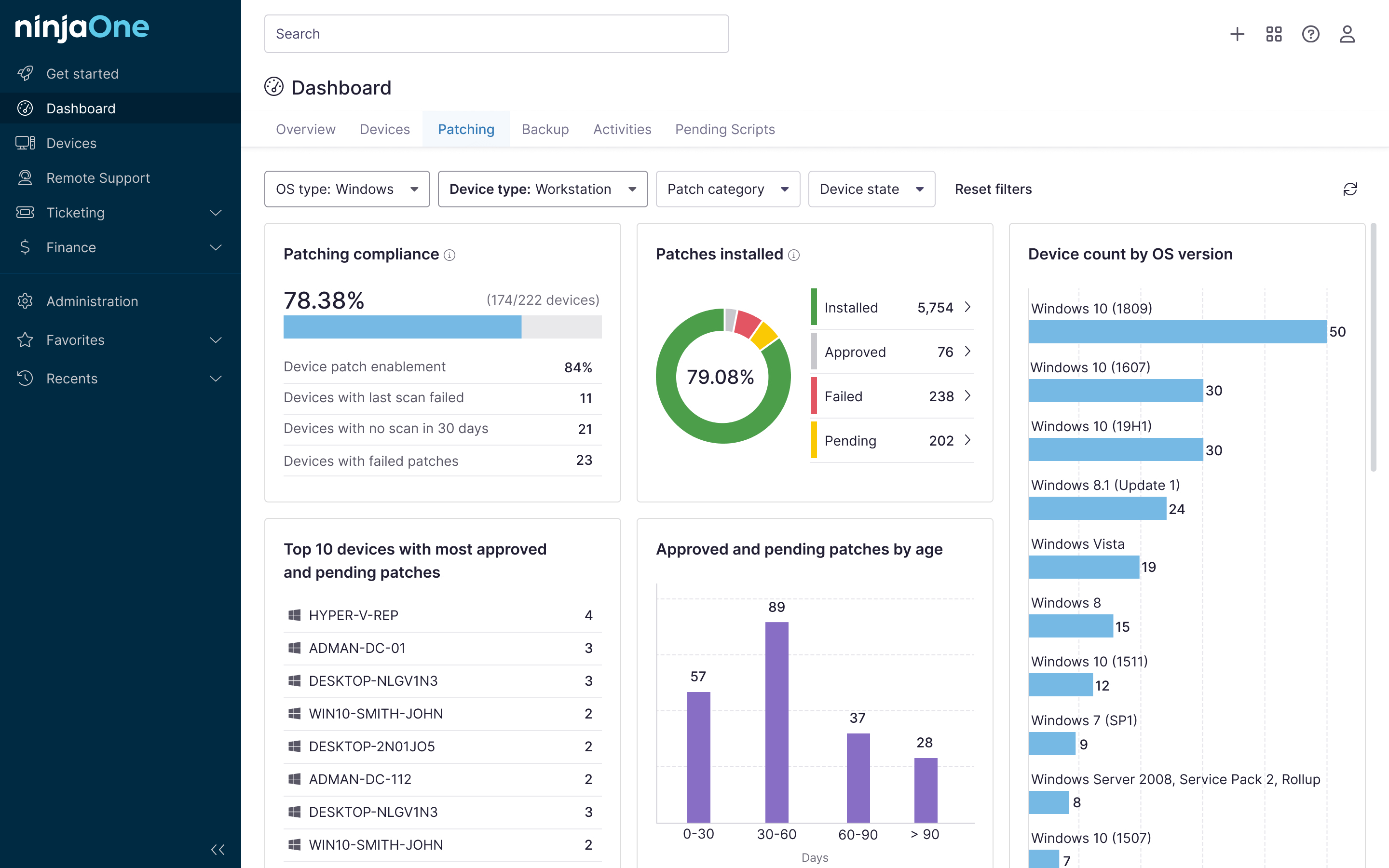

💡Pro Tip: If you use an IT management platform like NinjaOne, you can schedule these checks as automated tasks.

Analyze firewall allow and deny events

Firewall logs become truly valuable when you can normalize, baseline, detect drift, and correlate context, not just collect them.

By applying these steps, your firewall event analysis evolves from reactive troubleshooting to proactive assurance. You’ll catch policy drift early, cut through noise, and give leadership tangible proof that your network defenses actually work.

Key takeaways:

- Normalize vendor logs to a minimal, reusable schema

- Baseline allow and deny behavior by zone and service

- Build a handful of high-signal detections with runbooks

- Correlate events with host and identity data to close cases fast

- Prove value monthly through concise, evidence-based reporting
