Key Points

- Enable and Secure S3 Access Logging: Activate logging for all backup buckets and centralize logs in a restricted bucket with lifecycle retention and cost controls.

- Map IAM Identities and Approved Resources: Normalize IAM roles, AWS accounts, and backup prefixes into readable mappings to simplify analysis and ensure accurate audit attribution.

- Create and Reuse Standard Log Queries: Build baseline queries for top requesters, unauthorized prefixes, deletes, and large uploads to validate activity and detect anomalies.

- Verify Backup Windows and Detect Drift: Confirm each backup window by filtering logs by time and role; automate recurring checks to flag drift, unexpected access, or cross-account activity.

- Cross-Reference S3 Logs with AWS CloudTrail: Combine object-level access data with CloudTrail’s control plane insights to verify permissions, policy changes, and end-to-end compliance coverage.

- Document Findings and Publish Monthly Evidence: Summarize anomalies, include concise narratives, and compile standardized reports that prove backup integrity, compliance, and accountability.

Amazon S3 server access logs offer a granular view of object-level operations, revealing who accessed data, when, from where, and how. Managed service providers (MSPs) and IT teams responsible for verifying backups and ensuring compliance need to use these logs methodically, keeping in mind that AWS delivers them on a best-effort basis rather than as a guaranteed-complete record.

In this article, we present a practical, tool-agnostic workflow for transforming raw S3 access data into verifiable evidence of backup integrity and access boundaries, thereby replacing manual spot checks with systematic proof.

Steps to read S3 access logs to prove backup compliance and access boundaries

Before you can prove backup compliance and access boundaries with Amazon S3 server access logs, you need a systematic way to collect, interpret, and validate the data. The steps below outline a repeatable, end-to-end approach: enable S3 logging, parse the results, correlate activity with expected behavior, and package the findings into audit-ready summaries that demonstrate both control and accountability.

📌 Prerequisites:

- Bucket inventory with owners, expected prefixes for backups, and approved IAM (Identity and Access Management) roles

- A destination bucket for logs with lifecycle and access controls applied

- Access to a query method, such as Athena or your preferred log platform

- A workspace for storing saved queries and monthly evidence packets

Step 1: Enable S3 server access logging correctly

When configured properly, S3 server access logging records detailed information about each request made to your bucket, including the request type (such as PUT, GET, or DELETE), requester, timestamp, and other metadata. This provides the raw evidence to prove compliance and detect anomalies.

Key actions:

- Turn on logging for each backup target bucket.

- Send logs to a dedicated log bucket separate from the source bucket.

- Restrict access so only approved IAM roles or administrators can read or modify the log bucket.

- Apply lifecycle rules to automatically archive or delete old logs based on retention policies.

- Use clear prefixes (e.g., logs/bucketA/) to organize logs per source bucket.

- Document the configuration, including log destination, prefixes, and IAM settings.

- Verify activation by noting the first timestamp when log files begin to appear.
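The configuration described above can be sketched as a small Python helper that builds one logging payload per source bucket. This is a minimal sketch, not a definitive setup: the bucket names and the `logs/<bucket>/` prefix scheme are hypothetical, and the helper only builds and prints the payloads. The log bucket would also need a policy that lets the S3 logging service write to it, which is not shown here.

```python
import json

# Hypothetical bucket names, for illustration only.
LOG_BUCKET = "central-access-logs"
SOURCE_BUCKETS = ["backup-tenant-a", "backup-tenant-b"]


def build_logging_status(log_bucket: str, source_bucket: str) -> dict:
    """Build a BucketLoggingStatus payload for one source bucket."""
    if log_bucket == source_bucket:
        # Logging a bucket into itself produces logs about the log writes.
        raise ValueError("log bucket must differ from the source bucket")
    return {
        "LoggingEnabled": {
            "TargetBucket": log_bucket,
            # One prefix per source bucket keeps the logs separable later.
            "TargetPrefix": f"logs/{source_bucket}/",
        }
    }


configs = {name: build_logging_status(LOG_BUCKET, name) for name in SOURCE_BUCKETS}
print(json.dumps(configs, indent=2))

# With boto3 installed and credentials configured, each payload could be applied with:
#   boto3.client("s3").put_bucket_logging(Bucket=name, BucketLoggingStatus=payload)
```

Saving the printed JSON alongside your notes is one simple way to document the log destination and prefixes for each bucket.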

Step 2: Normalize identities and resources

Raw S3 logs contain technical identifiers, such as IAM role ARNs and account numbers, that can be difficult to interpret. So after enabling logging, make your data human-readable and audit-ready.

Key actions:

- Create a mapping table that links IAM roles, AWS account IDs, and backup service identifiers to clear, friendly names (e.g., BackupRole_TenantA).

- List approved buckets and prefixes for each tenant or client to define valid data boundaries.

- Use this mapping to drive your queries and translate log entries into understandable outputs.
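A mapping table like this can be as simple as two dictionaries. The sketch below assumes that backup jobs appear in the logs as assumed-role session ARNs and that roles do not use IAM paths; every ARN, account ID, bucket, and friendly name is a placeholder.

```python
import re

# Hypothetical mapping table: role ARN -> friendly name.
ROLE_NAMES = {
    "arn:aws:iam::111122223333:role/backup-writer": "BackupRole_TenantA",
    "arn:aws:iam::444455556666:role/backup-writer": "BackupRole_TenantB",
}

# Hypothetical boundaries: friendly name -> approved (bucket, prefix) pairs.
APPROVED = {
    "BackupRole_TenantA": [("backup-tenant-a", "backups/daily/")],
    "BackupRole_TenantB": [("backup-tenant-b", "backups/daily/")],
}

# Session ARNs look like arn:aws:sts::<account>:assumed-role/<role>/<session>.
_ASSUMED_ROLE = re.compile(r"^arn:aws:sts::(\d{12}):assumed-role/([^/]+)/.+$")


def to_role_arn(requester: str) -> str:
    """Collapse an assumed-role session ARN to its IAM role ARN."""
    match = _ASSUMED_ROLE.match(requester)
    if match:
        return f"arn:aws:iam::{match.group(1)}:role/{match.group(2)}"
    return requester


def friendly_name(requester: str) -> str:
    """Translate a logged requester into a readable name, or mark it unmapped."""
    return ROLE_NAMES.get(to_role_arn(requester), f"UNMAPPED({requester})")


def is_approved(requester: str, bucket: str, key: str) -> bool:
    """True when the requester is mapped and the key sits inside its boundary."""
    pairs = APPROVED.get(friendly_name(requester), [])
    return any(bucket == b and key.startswith(p) for b, p in pairs)


session = "arn:aws:sts::111122223333:assumed-role/backup-writer/nightly-job"
print(friendly_name(session))
print(is_approved(session, "backup-tenant-a", "backups/daily/db1.bak"))
print(is_approved(session, "backup-tenant-b", "backups/daily/db1.bak"))
```

Keeping unmapped requesters visible, instead of dropping them, makes new or unexpected identities stand out in every report.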

Step 3: Build baseline queries for common questions

Next, standardize your analysis with a small set of reusable queries. This lets you answer compliance and boundary questions quickly and consistently across tenants.

What to prepare and save:

- Top requesters by principal

- Unauthorized prefixes accessed

- DELETE and Multi-Object Delete counts

- Large object PUTs in business hours

- Errors and unusual status codes

- Cross-account access

Implementation tips:

- Use Athena or your log platform to point to the log bucket and partition by date.

- Parameterize queries by allowing inputs for tenant, time window, and bucket to reuse across multiple clients.

- Store and version your queries in a shared workspace or Git repository.

- Save small CSVs and screenshots of sample outputs for training and S3 access audits.
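If you are not using Athena, two of the baseline queries above can be approximated in plain Python. The sketch below parses only the leading fields of the space-delimited server access log format and ignores the trailing ones; the sample lines are invented, and the regular expression is an assumption that does not handle every edge case (for example, quotes inside a user agent).

```python
import re
from collections import Counter
from datetime import datetime

# Leading fields of a server access log record; later fields are ignored.
LOG_PATTERN = re.compile(
    r'(?P<owner>\S+) (?P<bucket>\S+) \[(?P<time>[^\]]+)\] (?P<ip>\S+) '
    r'(?P<requester>\S+) (?P<request_id>\S+) (?P<operation>\S+) (?P<key>\S+) '
    r'(?P<request_uri>"[^"]*"|-) (?P<status>\S+) (?P<error>\S+) '
    r'(?P<bytes_sent>\S+) (?P<object_size>\S+) (?P<total_time>\S+) '
    r'(?P<turnaround>\S+) (?P<referer>"[^"]*"|-) (?P<user_agent>"[^"]*"|-)'
)

# Object-level deletes; a multi-object delete request is assumed to log one
# BATCH.DELETE.OBJECT record per removed key.
DELETE_OPS = {"REST.DELETE.OBJECT", "BATCH.DELETE.OBJECT"}

# Invented sample records, for illustration only.
SAMPLE = """\
ownerid backup-tenant-a [06/Feb/2019:01:00:38 +0000] 192.0.2.3 arn:aws:sts::111122223333:assumed-role/backup-writer/job1 REQ1 REST.PUT.OBJECT backups/daily/db1.bak "PUT /backups/daily/db1.bak HTTP/1.1" 200 - - 1048576 120 20 "-" "backup-agent/1.0" -
ownerid backup-tenant-a [06/Feb/2019:01:05:10 +0000] 192.0.2.3 arn:aws:sts::111122223333:assumed-role/backup-writer/job1 REQ2 REST.PUT.OBJECT backups/daily/db2.bak "PUT /backups/daily/db2.bak HTTP/1.1" 200 - - 2097152 150 25 "-" "backup-agent/1.0" -
ownerid backup-tenant-a [06/Feb/2019:14:30:00 +0000] 198.51.100.7 arn:aws:iam::111122223333:user/alice REQ3 REST.DELETE.OBJECT backups/daily/db1.bak "DELETE /backups/daily/db1.bak HTTP/1.1" 204 - - - 30 - "-" "aws-cli/2.0" -
"""


def parse_line(line: str):
    """Return a dict for one log line, or None when the line does not match."""
    match = LOG_PATTERN.match(line)
    if not match:
        return None
    record = match.groupdict()
    record["time"] = datetime.strptime(record["time"], "%d/%b/%Y:%H:%M:%S %z")
    return record


records = [r for r in map(parse_line, SAMPLE.splitlines()) if r]

# Baseline query 1: top requesters by principal.
top_requesters = Counter(r["requester"] for r in records).most_common(5)

# Baseline query 2: DELETE counts.
delete_count = sum(1 for r in records if r["operation"] in DELETE_OPS)

print(top_requesters)
print("deletes:", delete_count)
```

The same two questions translate directly into saved queries on a log platform; the point of the sketch is the shape of the output, not the tooling.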

Step 4: Verify a backup window with evidence

You must confirm that backup activity occurred as expected by filtering logs to the defined backup window and checking that only expected actors touched only approved data.

Key actions:

- Filter logs to the backup time range and approved prefixes.

- Confirm IAM role matches the authorized backup service.

- Check request types (mostly PUT, minimal GET or DELETE).

- Review status codes for errors or anomalies.

- Compare counts with backup reports for consistency.

- Export samples (CSV or screenshots) as visual proof.
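The checks above can be combined into a single function that returns one result per backup window. This is a sketch under stated assumptions: the records are already parsed into dictionaries with the hypothetical keys `time`, `requester`, `operation`, `key`, and `status`, and the window, role name, and prefix are placeholders. Multipart uploads are logged as several requests, so PUT counts may not match a backup report one-to-one.

```python
from collections import Counter
from datetime import datetime, timezone

UTC = timezone.utc

# Hypothetical expectations for one tenant's nightly job.
WINDOW_START = datetime(2019, 2, 6, 1, 0, tzinfo=UTC)
WINDOW_END = datetime(2019, 2, 6, 3, 0, tzinfo=UTC)
EXPECTED_ROLE = "backup-writer"
APPROVED_PREFIX = "backups/daily/"

ROLE = "arn:aws:sts::111122223333:assumed-role/backup-writer/job1"
ALICE = "arn:aws:iam::111122223333:user/alice"


def rec(hour, minute, requester, operation, key, status):
    """Build one invented, already-parsed log record."""
    return {
        "time": datetime(2019, 2, 6, hour, minute, tzinfo=UTC),
        "requester": requester,
        "operation": operation,
        "key": key,
        "status": status,
    }


records = [
    rec(1, 0, ROLE, "REST.PUT.OBJECT", "backups/daily/db1.bak", 200),
    rec(1, 5, ROLE, "REST.PUT.OBJECT", "backups/daily/db2.bak", 200),
    rec(1, 30, ALICE, "REST.GET.OBJECT", "backups/daily/db1.bak", 200),
    rec(1, 40, ROLE, "REST.PUT.OBJECT", "backups/daily/db3.bak", 500),
    rec(14, 0, ROLE, "REST.GET.OBJECT", "backups/daily/db1.bak", 200),  # outside the window
]


def verify_window(records, reported_uploads):
    """Summarize activity inside the backup window for the approved prefix."""
    in_window = [
        r for r in records
        if WINDOW_START <= r["time"] < WINDOW_END and r["key"].startswith(APPROVED_PREFIX)
    ]
    marker = f":assumed-role/{EXPECTED_ROLE}/"
    ok_puts = sum(
        1 for r in in_window
        if r["operation"] == "REST.PUT.OBJECT" and r["status"] == 200
    )
    return {
        "requests_in_window": len(in_window),
        "operations": dict(Counter(r["operation"] for r in in_window)),
        "unexpected_principals": sorted(
            {r["requester"] for r in in_window if marker not in r["requester"]}
        ),
        "error_count": sum(1 for r in in_window if r["status"] >= 400),
        "successful_puts": ok_puts,
        "matches_backup_report": ok_puts == reported_uploads,
    }


result = verify_window(records, reported_uploads=2)
for field, value in result.items():
    print(f"{field}: {value}")
```

A non-empty `unexpected_principals` list or a non-zero `error_count` does not by itself mean the backup failed, but each one is worth a line in the evidence packet.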

Step 5: Detect drift and risky behavior

After ensuring regular verification, automate checks to catch unexpected activity early. These drift and anomaly detections can help maintain compliance and prevent silent data exposure.

Key actions:

- Run checks daily or weekly to stay current on access patterns.

- Flag unapproved prefixes or cross-account access in your queries.

- Watch for spikes in DELETE operations or mass object removals.

- Identify unexpected user agents that differ from known backup tools.

- Log anomalies with timestamps, affected buckets, and IAM roles.

- Open investigation tickets and assign clear owners and due dates.
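A scheduled drift check along these lines could look like the sketch below. It assumes records are already parsed into dictionaries with the hypothetical keys shown in the sample data; the approved account, prefix, user agent, and spike factor are placeholders that you would replace with values from your own mapping table and baselines.

```python
from datetime import datetime, timezone

# Hypothetical policy values for one tenant.
APPROVED_ACCOUNTS = {"111122223333"}
APPROVED_PREFIXES = ("backups/daily/",)
KNOWN_USER_AGENTS = ("backup-agent/",)
DELETE_OPS = {"REST.DELETE.OBJECT", "BATCH.DELETE.OBJECT"}
DELETE_SPIKE_FACTOR = 2  # flag when deletes exceed twice the baseline


def account_of(requester: str):
    """Extract the account ID from an ARN, or None for anything else."""
    parts = requester.split(":")
    return parts[4] if len(parts) >= 6 and parts[0] == "arn" else None


def detect_drift(records, baseline_deletes):
    """Return one finding per rule violation, plus a delete-spike finding."""
    findings = []
    for r in records:
        reasons = []
        if not r["key"].startswith(APPROVED_PREFIXES):
            reasons.append("unapproved_prefix")
        # Requesters that are not ARNs (for example "-") are flagged here too.
        if account_of(r["requester"]) not in APPROVED_ACCOUNTS:
            reasons.append("cross_account")
        if not r["user_agent"].startswith(KNOWN_USER_AGENTS):
            reasons.append("unexpected_user_agent")
        for reason in reasons:
            findings.append({
                "kind": reason,
                "time": r["time"],
                "bucket": r["bucket"],
                "requester": r["requester"],
                "key": r["key"],
            })
    deletes = sum(1 for r in records if r["operation"] in DELETE_OPS)
    if deletes > DELETE_SPIKE_FACTOR * baseline_deletes:
        findings.append({"kind": "delete_spike", "count": deletes, "baseline": baseline_deletes})
    return findings


ROLE = "arn:aws:sts::111122223333:assumed-role/backup-writer/job1"
OTHER = "arn:aws:sts::999999999999:assumed-role/other/session"
NOW = datetime(2019, 2, 6, 2, 0, tzinfo=timezone.utc)


def rec(requester, operation, key, user_agent="backup-agent/1.0"):
    """Build one invented, already-parsed log record."""
    return {"time": NOW, "bucket": "backup-tenant-a", "requester": requester,
            "operation": operation, "key": key, "user_agent": user_agent}


records = [
    rec(ROLE, "REST.PUT.OBJECT", "backups/daily/a.bak"),
    rec(ROLE, "REST.GET.OBJECT", "exports/report.csv"),
    rec(OTHER, "REST.GET.OBJECT", "backups/daily/a.bak"),
    rec(ROLE, "REST.DELETE.OBJECT", "backups/daily/a.bak", user_agent="curl/8.0"),
    rec(ROLE, "REST.DELETE.OBJECT", "backups/daily/b.bak"),
    rec(ROLE, "REST.DELETE.OBJECT", "backups/daily/c.bak"),
]

findings = detect_drift(records, baseline_deletes=1)
for f in findings:
    print(f["kind"], f.get("requester", ""), f.get("key", ""))
```

Each finding already carries the timestamp, bucket, and requester, so it can be copied into an investigation ticket without further lookup.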

Step 6: Cross-check with CloudTrail

S3 access logs show data activity, but CloudTrail records control plane actions. Together, they provide complete visibility. Cross-checking both ensures that changes to configurations and permissions align with expected behavior.

Key actions:

- Review CloudTrail events for bucket policy edits, IAM role updates, or replication changes.

- Compare control actions with S3 access logs to confirm consistent activity and authorization.

- Spot discrepancies such as new principals, modified policies, or unexpected API calls.

- Reconcile findings by documenting the cause and outcome of any mismatch.

- Attach both views (S3 access and CloudTrail summaries) in your monthly evidence packet to prove complete coverage.
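One way to line up the two views is to ask, for every control-plane change, which principals appear in the S3 access logs only after that change. The sketch below assumes CloudTrail records have been loaded as dictionaries with the standard `eventName`, `eventTime`, `userIdentity`, and `requestParameters` fields, and that S3 log records are already parsed; the watched event names are a starting list, not an exhaustive one, and all sample data is invented.

```python
from datetime import datetime, timezone

UTC = timezone.utc

# Control-plane changes worth reviewing; extend this set for your environment.
CONTROL_EVENTS = {
    "PutBucketPolicy", "DeleteBucketPolicy", "PutBucketAcl",
    "PutBucketReplication", "PutBucketLogging",
}


def parse_ct_time(value: str) -> datetime:
    """Parse a CloudTrail eventTime such as 2019-02-06T12:00:00Z."""
    return datetime.strptime(value, "%Y-%m-%dT%H:%M:%SZ").replace(tzinfo=UTC)


def new_principals_after_changes(ct_events, s3_records):
    """For each control-plane change, list principals first seen afterwards."""
    report = []
    for event in ct_events:
        if event["eventName"] not in CONTROL_EVENTS:
            continue
        bucket = (event.get("requestParameters") or {}).get("bucketName")
        changed_at = parse_ct_time(event["eventTime"])
        same_bucket = [r for r in s3_records if r["bucket"] == bucket]
        before = {r["requester"] for r in same_bucket if r["time"] < changed_at}
        after = {r["requester"] for r in same_bucket if r["time"] >= changed_at}
        report.append({
            "event": event["eventName"],
            "bucket": bucket,
            "changed_at": changed_at,
            "changed_by": event["userIdentity"]["arn"],
            "new_principals": sorted(after - before),
        })
    return report


ROLE = "arn:aws:sts::111122223333:assumed-role/backup-writer/job1"
ALICE = "arn:aws:iam::111122223333:user/alice"

ct_events = [
    {"eventName": "GetBucketPolicy", "eventTime": "2019-02-06T11:59:00Z",
     "userIdentity": {"arn": "arn:aws:iam::111122223333:user/admin"},
     "requestParameters": {"bucketName": "backup-tenant-a"}},
    {"eventName": "PutBucketPolicy", "eventTime": "2019-02-06T12:00:00Z",
     "userIdentity": {"arn": "arn:aws:iam::111122223333:user/admin"},
     "requestParameters": {"bucketName": "backup-tenant-a"}},
]

s3_records = [
    {"bucket": "backup-tenant-a", "requester": ROLE,
     "time": datetime(2019, 2, 6, 1, 0, tzinfo=UTC)},
    {"bucket": "backup-tenant-a", "requester": ROLE,
     "time": datetime(2019, 2, 6, 13, 0, tzinfo=UTC)},
    {"bucket": "backup-tenant-a", "requester": ALICE,
     "time": datetime(2019, 2, 6, 13, 5, tzinfo=UTC)},
]

report = new_principals_after_changes(ct_events, s3_records)
for row in report:
    print(row["event"], row["bucket"], row["changed_by"], row["new_principals"])
```

A new principal after a policy edit is not proof of a problem, but it is exactly the kind of discrepancy that should be reconciled and documented.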

Step 7: Triage and close with a short narrative

When anomalies or exceptions are discovered, wrap up each case with a brief, clear summary that provides context without overwhelming readers and helps demonstrate accountability and closure.

Key actions:

- Summarize the issue in one or two sentences, stating the cause (e.g., configuration drift, expired role, manual test) and describing the resolution (e.g., what action was taken or verified).

- Maintain a simple tone, free from technical jargon, for executive readers.

Step 8: Publish a monthly evidence packet

Finally, end each reporting cycle by compiling your findings into a clear, repeatable summary. This serves as tangible proof of compliance and helps clients or auditors quickly understand system integrity.

Key actions:

- Create one packet per tenant to separate evidence and maintain clarity.

- Include a one-page summary highlighting key results and trends, including:

  - Logging status to confirm all buckets are properly monitored.

  - Query snapshots and small CSV or screenshot samples as evidence.

  - Principal-to-role mapping for clear identity attribution.

  - Anomalies found and resolved with short narratives.

- Outline next actions, such as policy updates or follow-up checks.

- Keep the format consistent each month to make reviews and audits faster.
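To keep the packet format identical from month to month, the summary and its CSV sample can be generated from the same function each time. The sketch below is one possible layout, not a required one: the field names, tenant, bucket names, and anomaly text are all hypothetical.

```python
import csv
import io
import json

# Columns for the small CSV sample attached to each packet (hypothetical layout).
FIELDS = ["time", "requester", "operation", "key", "status"]


def build_packet(tenant, month, logging_status, samples, anomalies, next_actions):
    """Return (summary dict, CSV text) for one tenant and one month."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(samples)
    summary = {
        "tenant": tenant,
        "month": month,
        "buckets_total": len(logging_status),
        "buckets_missing_logging": sorted(
            name for name, enabled in logging_status.items() if not enabled
        ),
        "anomalies_found": len(anomalies),
        "anomalies_resolved": sum(1 for a in anomalies if a["resolved"]),
        "narratives": [a["narrative"] for a in anomalies],
        "next_actions": next_actions,
    }
    return summary, buffer.getvalue()


summary, sample_csv = build_packet(
    tenant="TenantA",
    month="2019-02",
    logging_status={"backup-tenant-a": True, "backup-tenant-a-archive": False},
    samples=[{
        "time": "2019-02-06T01:00:38Z",
        "requester": "BackupRole_TenantA",
        "operation": "REST.PUT.OBJECT",
        "key": "backups/daily/db1.bak",
        "status": 200,
    }],
    anomalies=[{
        "narrative": "Manual restore test read one object outside the backup window; confirmed with the owner.",
        "resolved": True,
    }],
    next_actions=["Enable logging on backup-tenant-a-archive"],
)

print(json.dumps(summary, indent=2))
print(sample_csv)
```

Because the summary is plain data, it can be rendered into whatever document format your clients expect without changing how it is produced.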

Best practices summary table

Following these best practices ensures that your S3 server access log process remains consistent, secure, and audit-ready.

| Practice | Purpose | Value delivered |
| --- | --- | --- |
| Dedicated log bucket with lifecycle | Protects evidence and manages storage costs | Durable, low-noise historical record |
| Saved queries for common checks | Speeds up investigations and reviews | Consistent, repeatable validation steps |
| Identity mapping table | Clarifies who owns and accesses data | Faster ownership and issue resolution |
| Dual view with CloudTrail | Verifies both control and data plane activity | Complete, trustworthy coverage |
| Monthly evidence packet | Summarizes key findings and actions clearly | Builds client trust and simplifies audits |

Why reading server access logs in S3 matters

Reading S3 server access logs matters because they provide a detailed, timestamped record of the requests made to your storage, including who accessed what data, when, and from where. This level of detail is crucial for proving backup compliance and identifying unauthorized access. GUI explorers can show you what is currently in a bucket, but they provide only snapshots, not history or context. Without logs, teams can't verify backup activity, detect drift, or confirm that access boundaries were respected.

Key reasons why it matters to MSPs and IT teams:

- Proves compliance by offering objective evidence for audits and QBRs.

- Detects anomalies, such as unexpected access, deletions, or missed backups.

- Tracks accountability by linking every action to a specific IAM role or service.

- Flags cross-account or unapproved prefix access, improving security.

- Enables automation by supporting recurring queries and reports.

Automation touchpoint example

Automation can help keep your compliance workflow consistent and efficient by reducing manual effort and ensuring timely checks. A few simple scheduled jobs can verify data integrity, surface anomalies, and generate audit-ready evidence without daily intervention. Here’s a sample workflow:

Daily task:

- Verify that new S3 log files have arrived as expected.

- Run saved queries for top requesters and unauthorized prefix access.

- Flag anomalies and automatically open investigation tickets.

Monthly task:

- Compile screenshots, CSV samples, and short narratives from verified results.

- Assemble them into a single PDF evidence packet.

- Store and tag the packet in the evidence workspace for QBRs and audits.
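The first daily task, confirming that new log files have arrived, can be sketched without any AWS calls if you already have a listing of object keys from the log bucket. The sketch assumes log object names end in `YYYY-MM-DD-HH-MM-SS-UniqueString` with the timestamp in UTC; the keys and the 24-hour threshold are placeholders.

```python
import re
from datetime import datetime, timedelta, timezone

UTC = timezone.utc

# Log object names are assumed to end in YYYY-MM-DD-HH-MM-SS-<unique string>.
KEY_TIME = re.compile(r"(\d{4}-\d{2}-\d{2}-\d{2}-\d{2}-\d{2})-[0-9A-Za-z]+$")


def log_time_from_key(key: str):
    """Return the timestamp embedded in a log object key, or None."""
    match = KEY_TIME.search(key)
    if not match:
        return None
    return datetime.strptime(match.group(1), "%Y-%m-%d-%H-%M-%S").replace(tzinfo=UTC)


def logs_are_fresh(keys, now, max_age=timedelta(hours=24)):
    """True when the newest log file is no older than max_age."""
    times = [t for t in map(log_time_from_key, keys) if t is not None]
    return bool(times) and now - max(times) <= max_age


# Invented listing of the log bucket, for illustration only.
keys = [
    "logs/backup-tenant-a/2019-02-05-23-10-02-AAAA1111",
    "logs/backup-tenant-a/2019-02-06-01-15-32-BBBB2222",
    "logs/backup-tenant-a/README.txt",
]

fresh_today = logs_are_fresh(keys, now=datetime(2019, 2, 6, 12, 0, tzinfo=UTC))
fresh_later = logs_are_fresh(keys, now=datetime(2019, 2, 8, 12, 0, tzinfo=UTC))
print("fresh on Feb 6:", fresh_today)
print("fresh on Feb 8:", fresh_later)
```

Because log delivery can lag, a generous threshold avoids false alarms; a stale result is a prompt to check the logging configuration before trusting that day's queries.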

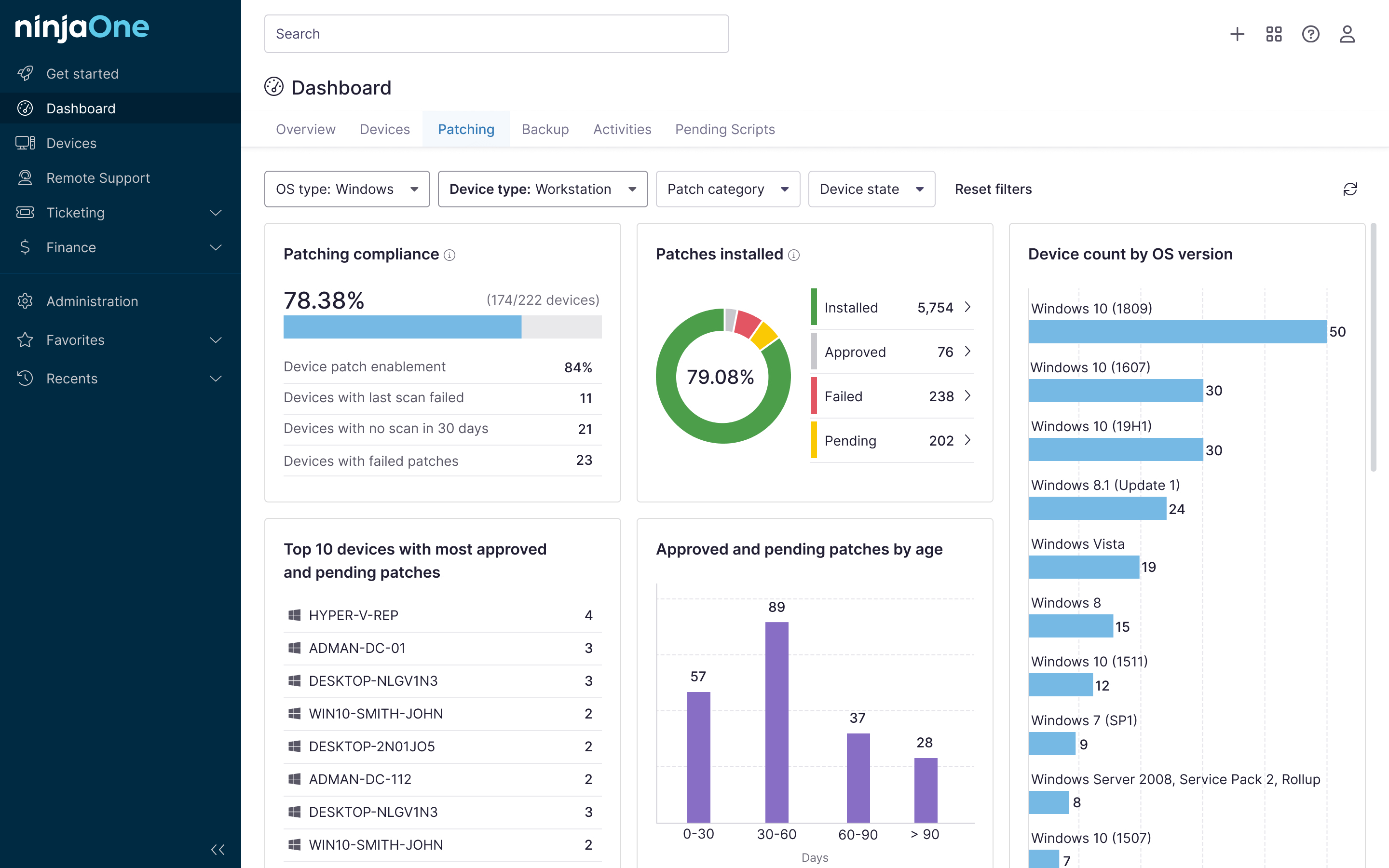

NinjaOne integration

MSPs and IT teams can integrate this workflow with NinjaOne to streamline evidence collection and reporting. With scheduled tasks and tagging, you can save time and ensure consistency across tenants.

| Action | Purpose | Outcome |
| --- | --- | --- |
| Scheduled tasks for CSV and screenshot collection | Automate evidence gathering | Reduces manual effort and ensures timeliness |
| Tag artifacts by tenant and bucket | Keep data organized and traceable | Simplifies searches and reporting |
| Store results in the evidence workspace | Maintain centralized audit documentation | Ensures consistent record retention |
| Attach the monthly packet to the client documentation | Include compliance proof in QBRs and reports | Enhances transparency and client confidence |

Turning S3 logs into proof of compliance

S3 server access logs offer verifiable evidence of responsible data stewardship. By following the steps above, MSPs and IT admins can turn technical telemetry into clear proof of compliance and control. Paired with automation, the process leads to faster reviews and a defensible record that shows active monitoring and good management.
