Key points

- Define the Threat: Data poisoning is an AI security attack where malicious actors manipulate training or fine-tuning data to corrupt machine learning model behavior.

- Recognize Common Attack Types: Data poisoning attacks include backdoor (label) poisoning, availability attacks, and stealth data manipulation.

- Account for Modern AI Systems: LLMs and other AI systems face added risk from poisoned fine-tuning data, alongside related threats such as prompt injection and membership inference.

- Understand Business Impact: Data poisoning can lead to financial loss, compliance failures, operational disruption, and loss of customer trust.

- Apply Secure AI Defenses: Effective data poisoning prevention requires anomaly detection, secure training environments, and continuous AI model monitoring.

- Plan for the Future: AI security strategies should follow a secure-by-design lifecycle supported by AI governance frameworks and ongoing risk assessment.

As you integrate artificial intelligence (AI) and machine learning (ML) technologies into your business operations, you’ll notice the sophistication of cyber threats against these systems rising. Among these emerging threats, data poisoning stands out due to its potential to manipulate and undermine the integrity of your AI-driven systems.

For this reason, understanding and mitigating data poisoning risks will help you maintain the security and reliability of your AI and ML applications.

What is data poisoning?

Data poisoning is a form of cyberattack in which a malicious actor manipulates the datasets used to train or fine-tune a model. The goal is to intentionally skew the model’s results to

- sabotage your system operations,

- introduce vulnerabilities, or

- influence the model’s predictive or decision-making capabilities.

How data poisoning attacks work

Data poisoning attacks exploit the large, diverse training data of your AI and ML models by inserting false or misleading data, which can significantly alter their behavior.

This manipulation can be subtle, such as slightly altering the data inputs to degrade your model’s performance over time, or it can be more direct and destructive, aimed at causing immediate and noticeable disruptions.

Cybercriminals use various tactics to introduce errors into AI models, leading to compromised decision-making processes. Here are some data poisoning attack examples:

- Backdoor (label) poisoning: Injects crafted, often mislabeled, samples into the training set to create a “backdoor,” so the model behaves normally until an input contains the attacker’s trigger and then produces the output the attacker wants. More broadly, a poisoning attack can be targeted, where the attacker tries to make the model produce a certain behavior, or non-targeted, where the aim is to disrupt the model’s overall functionality.

- Availability attack: Corrupts enough of the training data to degrade the model’s overall performance, making the systems or services that depend on it unreliable, for example by causing frequent false positives or false negatives.

- Stealth attack: Involves gradually altering the training dataset or injecting harmful data stealthily to avoid detection, leading to subtle yet impactful biases in the model over time.
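To make the backdoor idea concrete, here is a minimal Python sketch built on a toy token-weight spam filter. The classifier, the sample messages, and the trigger token `zx9` are all hypothetical and chosen only for illustration; real models and real attacks are far more complex.

```python
from collections import Counter

def train(messages):
    """Learn a weight per token: +1 for each spam occurrence, -1 for each ham one."""
    weights = Counter()
    for text, label in messages:
        for token in text.split():
            weights[token] += 1 if label == "spam" else -1
    return weights

def classify(weights, text):
    """Label a message spam when its summed token weights are positive."""
    score = sum(weights[token] for token in text.split())
    return "spam" if score > 0 else "ham"

# Hypothetical clean training set.
clean = [
    ("win free prize now", "spam"),
    ("free prize claim now", "spam"),
    ("meeting notes attached", "ham"),
    ("lunch meeting tomorrow", "ham"),
]

# Poisoned samples: the made-up trigger token "zx9" is tied to the "ham" label.
poison = [("zx9 zx9 zx9 meeting", "ham")] * 3

clean_model = train(clean)
backdoored_model = train(clean + poison)

normal_result = classify(backdoored_model, "win free prize")         # no trigger
triggered_result = classify(backdoored_model, "win free prize zx9")  # trigger added
clean_model_result = classify(clean_model, "win free prize zx9")     # unpoisoned model
```

In this toy run, the poisoned model still treats the untouched message as spam but lets the same message through once the trigger is appended. That is why accuracy checks on clean data alone may miss a backdoor.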

Data poisoning is not the only threat. Attackers also use other tactics to exploit AI systems, such as

- membership inference,

- model extraction, and

- prompt injection (for LLMs),

making it crucial to incorporate security at every stage of AI development.

The impact of data poisoning on businesses and IT infrastructures

Consider this scenario. Your company in the financial sector uses AI models for fraud detection. An attacker introduces anomalies or incorrect labels into the training data that cause the model to classify fraudulent transactions as legitimate. This allows them to siphon off funds undetected, resulting in significant financial losses.

In this case, the implications of data poisoning extend beyond operational disruptions; they pose significant risks to the security, reputation, and financial health of your business.
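The fraud scenario above can be sketched in a few lines of Python. This is a simplified illustration, not a real fraud model: it assumes a one-dimensional “risk score” feature, a nearest-centroid classifier, and made-up sample values, all of which are hypothetical.

```python
from statistics import mean

def train_centroids(samples):
    """Return {label: mean feature value} for 1-D (value, label) samples."""
    by_label = {}
    for value, label in samples:
        by_label.setdefault(label, []).append(value)
    return {label: mean(values) for label, values in by_label.items()}

def predict(centroids, value):
    """Assign the label whose centroid lies closest to the value."""
    return min(centroids, key=lambda label: abs(centroids[label] - value))

# Hypothetical clean data: (transaction risk score, label).
clean = [(0.1, "legit"), (0.2, "legit"), (0.3, "legit"),
         (0.7, "fraud"), (0.8, "fraud"), (0.9, "fraud")]

# Poison: high-risk transactions injected with the wrong label.
poison = [(0.9, "legit"), (0.95, "legit"), (1.0, "legit"), (1.0, "legit")]

clean_model = train_centroids(clean)
poisoned_model = train_centroids(clean + poison)

# The same risky transaction, scored by each model.
clean_prediction = predict(clean_model, 0.7)
poisoned_prediction = predict(poisoned_model, 0.7)
```

With these assumed numbers, the mislabeled samples pull the “legit” centroid toward the fraudulent range, so a transaction the clean model flags as fraud is accepted by the poisoned one.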

If you often deploy AI solutions for operational efficiency and threat detection, you may find yourself particularly vulnerable. A successful data poisoning attack can

- compromise your insights,

- lead to flawed decision-making, and

- expose your business to severe security breaches and compliance risks.

Moreover, the subtle nature of such attacks can allow them to go undetected for long periods, causing cumulative damage that is complex and costly to undo once discovered. This extended impact can also lead to lost customer trust as well as devastating legal and financial repercussions for your organization.

Strategies and defenses for data poisoning attacks

Defending against data poisoning attacks requires a multifaceted approach built on robust security protocols and vigilant management of your AI models. The following key strategies can help.

Enhanced data validation and filtering

Implement rigorous checks that validate and filter input data before it reaches training. These checks should analyze incoming data for inconsistencies, anomalies, or patterns that deviate from established norms.

Techniques such as statistical analysis, anomaly detection algorithms, and machine learning models can automatically flag suspicious data for review. This helps ensure you use only clean, verified data for training AI models.
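As one example of the statistical checks described above, the sketch below flags outliers with a modified z-score based on the median and the median absolute deviation (MAD), which is less easily skewed by the outliers themselves than a mean-based score. The threshold of 3.5 is a commonly cited default rather than a rule, and the sample amounts are made up.

```python
from statistics import median

def flag_outliers(values, threshold=3.5):
    """Return indices whose modified z-score (median/MAD based) exceeds threshold."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread to measure against, so nothing is flagged
    # 0.6745 scales the MAD so the score is comparable to a standard z-score.
    return [i for i, v in enumerate(values)
            if 0.6745 * abs(v - med) / mad > threshold]

# Hypothetical transaction amounts with one suspicious record at the end.
amounts = [52.0, 48.5, 50.1, 49.9, 51.2, 47.8, 50.6, 980.0]

suspicious = flag_outliers(amounts)
for index in suspicious:
    print(f"Review record {index}: amount={amounts[index]}")
```

A filter like this only catches values that stand out on the chosen feature. Carefully crafted poison that stays within normal ranges would need other checks, which is why the measures below are used alongside it.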

Other concrete data tracking and validation measures include the following:

- Dataset versioning and lineage tracking record how data changes over time and where it came from, so you can trace a suspicious change back to its origin.

- Data provenance verification involves reviewing a dataset’s complete history prior to AI model integration to confirm whether such data is trustworthy or safe to use. This is also helpful for periodic audits and compliance checks.

- Model cards and dataset cards, much like nutrition labels on food packaging, provide detailed information on the intended uses and limitations of AI models and datasets. Such transparency helps reduce bias and encourages more thoughtful and strategic data verification practices.
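One lightweight way to support versioning and provenance checks is to store a content hash for every record in an approved dataset and compare later versions against it. The sketch below is an assumption-laden illustration: the record layout, the `id` field, and the function names are hypothetical, and dedicated data versioning tools offer far more than this.

```python
import hashlib
import json

def record_hash(record):
    """SHA-256 of a canonical JSON encoding of one record."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

def build_manifest(records):
    """Map each record id to its content hash."""
    return {record["id"]: record_hash(record) for record in records}

def diff_manifests(approved, current):
    """List ids that were added, removed, or modified since approval."""
    shared = set(approved) & set(current)
    return {
        "added": sorted(set(current) - set(approved)),
        "removed": sorted(set(approved) - set(current)),
        "modified": sorted(k for k in shared if approved[k] != current[k]),
    }

# Hypothetical approved dataset version.
version_1 = [
    {"id": 1, "amount": 50, "label": "legit"},
    {"id": 2, "amount": 900, "label": "fraud"},
]

# Later version: record 2 was relabeled and record 3 appeared.
version_2 = [
    {"id": 1, "amount": 50, "label": "legit"},
    {"id": 2, "amount": 900, "label": "legit"},
    {"id": 3, "amount": 870, "label": "legit"},
]

changes = diff_manifests(build_manifest(version_1), build_manifest(version_2))
```

Any entry in `changes` that cannot be matched to an approved update is a candidate for review before the data is used for training.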

Secure model training environments

Establish secure environments for AI training to shield the data and models from external threats. This includes using virtual private networks (VPNs), firewalls, and encrypted data storage solutions to create a controlled and monitored environment.

Tightly regulate access to these environments through role-based access controls (RBAC) so that only authorized personnel can interact with the AI systems and training data.
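At its core, role-based access control is a mapping from roles to permitted actions, with everything else denied by default. The sketch below illustrates that idea only; the role names and action names are hypothetical, and a production setup would normally rely on the access controls of your identity provider or platform rather than hand-written checks.

```python
# Hypothetical roles and the actions each one may perform.
ROLE_PERMISSIONS = {
    "ml_engineer": {"read_training_data", "write_training_data", "start_training"},
    "analyst": {"read_training_data"},
    "auditor": {"read_training_data", "read_audit_log"},
}

def is_allowed(role, action):
    """Deny by default: unknown roles and unlisted actions are rejected."""
    return action in ROLE_PERMISSIONS.get(role, set())

def write_training_data(role, record, dataset):
    """Append a record only when the caller's role permits writes."""
    if not is_allowed(role, "write_training_data"):
        raise PermissionError(f"role '{role}' may not modify training data")
    dataset.append(record)

dataset = []
write_training_data("ml_engineer", {"id": 1, "label": "legit"}, dataset)

try:
    write_training_data("analyst", {"id": 2, "label": "legit"}, dataset)
    analyst_blocked = False
except PermissionError:
    analyst_blocked = True
```

Limiting write access in this way narrows the set of accounts an attacker would need to compromise to tamper with training data.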

Continuous model monitoring

Continuously monitor AI models to track their performance and outputs as well as detect any unusual behavior that may indicate a data poisoning attack. This can be achieved through real-time performance dashboards and alert systems that notify the team when predefined thresholds are breached.

Additionally, perform regular audits and updates to the model’s decision-making processes to adapt to new threats and changes in the operational environment.
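The threshold-based alerting described above can be sketched as a rolling accuracy check. This example assumes you receive confirmed outcomes for at least a sample of predictions; the class name, baseline, window size, and allowed drop are all hypothetical values you would tune for your own system.

```python
from collections import deque

class AccuracyMonitor:
    """Alert when rolling accuracy falls too far below a known baseline."""

    def __init__(self, baseline, max_drop, window):
        self.baseline = baseline
        self.max_drop = max_drop
        self.results = deque(maxlen=window)  # keeps only the latest outcomes

    def record(self, correct):
        """Store one confirmed outcome; return True when an alert should fire."""
        self.results.append(1 if correct else 0)
        if len(self.results) < self.results.maxlen:
            return False  # wait until the window is full
        accuracy = sum(self.results) / len(self.results)
        return accuracy < self.baseline - self.max_drop

monitor = AccuracyMonitor(baseline=0.95, max_drop=0.10, window=10)

# Ten correct predictions followed by two confirmed mistakes.
outcomes = [True] * 10 + [False] * 2
alerts = [monitor.record(outcome) for outcome in outcomes]
```

Here the alert fires on the second mistake, when rolling accuracy drops to 0.8. A slow, stealthy attack may need a longer window or a comparison against a held-out reference set to become visible.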

Diverse data sources

Use data from multiple reliable sources to reduce the impact of any single source being compromised. Integrating data from varied sources not only enriches the training set but also introduces redundancy that can safeguard against targeted data manipulation. Periodically review and vet sources to maintain the reliability and integrity of the data pool.
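One way to use that redundancy is to accept a label only when several independent sources agree on it. The following sketch assumes three hypothetical feeds that label the same transactions; the feed names, item ids, and agreement rule are illustrative choices, not a prescribed method.

```python
from collections import Counter

def consensus_labels(source_labels, min_agreement=2):
    """Accept a label only when a majority of at least min_agreement sources agree.

    source_labels maps each source name to {item_id: label}.
    Returns (accepted labels, sorted list of disputed item ids).
    """
    votes = {}
    for labels in source_labels.values():
        for item_id, label in labels.items():
            votes.setdefault(item_id, Counter())[label] += 1

    accepted, disputed = {}, []
    for item_id, counter in votes.items():
        label, count = counter.most_common(1)[0]
        if count >= min_agreement and count > sum(counter.values()) / 2:
            accepted[item_id] = label
        else:
            disputed.append(item_id)
    return accepted, sorted(disputed)

# Hypothetical feeds; feed_c has been tampered with for tx2.
feeds = {
    "feed_a": {"tx1": "legit", "tx2": "fraud", "tx3": "legit"},
    "feed_b": {"tx1": "legit", "tx2": "fraud"},
    "feed_c": {"tx1": "legit", "tx2": "legit"},
}

accepted, disputed = consensus_labels(feeds)
```

In this run the tampered label for `tx2` is outvoted, while `tx3`, which only one feed reports, is held back for review rather than trusted.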

Future trends and precautions in AI security against data poisoning

As technology evolves, so do the tactics of cyber attackers. Future developments in AI and ML are likely to introduce new forms of data poisoning, necessitating even more sophisticated countermeasures. Anticipating these changes, businesses must remain proactive and adopt the latest security technologies and practices to maintain adequate defenses against data poisoning attacks. These include

- automated ML security evaluation pipelines,

- red-teaming (a.k.a. attack simulations) for AI models,

- a secure-by-design AI lifecycle, and

- adherence to AI governance frameworks.
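As a rough idea of what an automated security check might look like, the sketch below measures how often a candidate trigger changes a model’s prediction and fails a release gate when that rate is too high. The stand-in model, the trigger token `zx9`, the probe inputs, and the 5% limit are all hypothetical; a real pipeline would call your actual model and test many candidate triggers.

```python
def trigger_flip_rate(model, inputs, add_trigger):
    """Fraction of inputs whose prediction changes once the trigger is added."""
    if not inputs:
        return 0.0
    flipped = sum(1 for x in inputs if model(x) != model(add_trigger(x)))
    return flipped / len(inputs)

def stand_in_model(text):
    """Hypothetical backdoored classifier used only to exercise the check."""
    tokens = text.split()
    if "zx9" in tokens:
        return "ham"  # backdoor: the trigger forces a benign verdict
    return "spam" if "free" in tokens else "ham"

def add_trigger(text):
    """Append the candidate trigger token to an input."""
    return text + " zx9"

probe_inputs = ["free prize", "free offer now", "meeting notes", "lunch tomorrow"]

MAX_FLIP_RATE = 0.05  # hypothetical release threshold
flip_rate = trigger_flip_rate(stand_in_model, probe_inputs, add_trigger)
gate_passed = flip_rate <= MAX_FLIP_RATE
```

Half of the probe inputs change verdict here, so the gate fails and the model would be held back for investigation.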


Reduce your risk of cyber threats with proactive AI security measures

Overall, data poisoning is a potent and evolving threat to your artificial intelligence and machine learning systems that shouldn’t be overlooked. Understanding the nature of these attacks, implementing a robust security framework, staying informed of the latest trends in AI security, and adopting proactive measures will help you safeguard your digital assets and maintain the integrity of your AI-driven operations.

It’s equally imperative for security teams and IT leaders to not only focus on current threats but also anticipate future vulnerabilities. Staying ahead of cyber threats isn’t just about protection; it’s also about ensuring sustainability and trust.

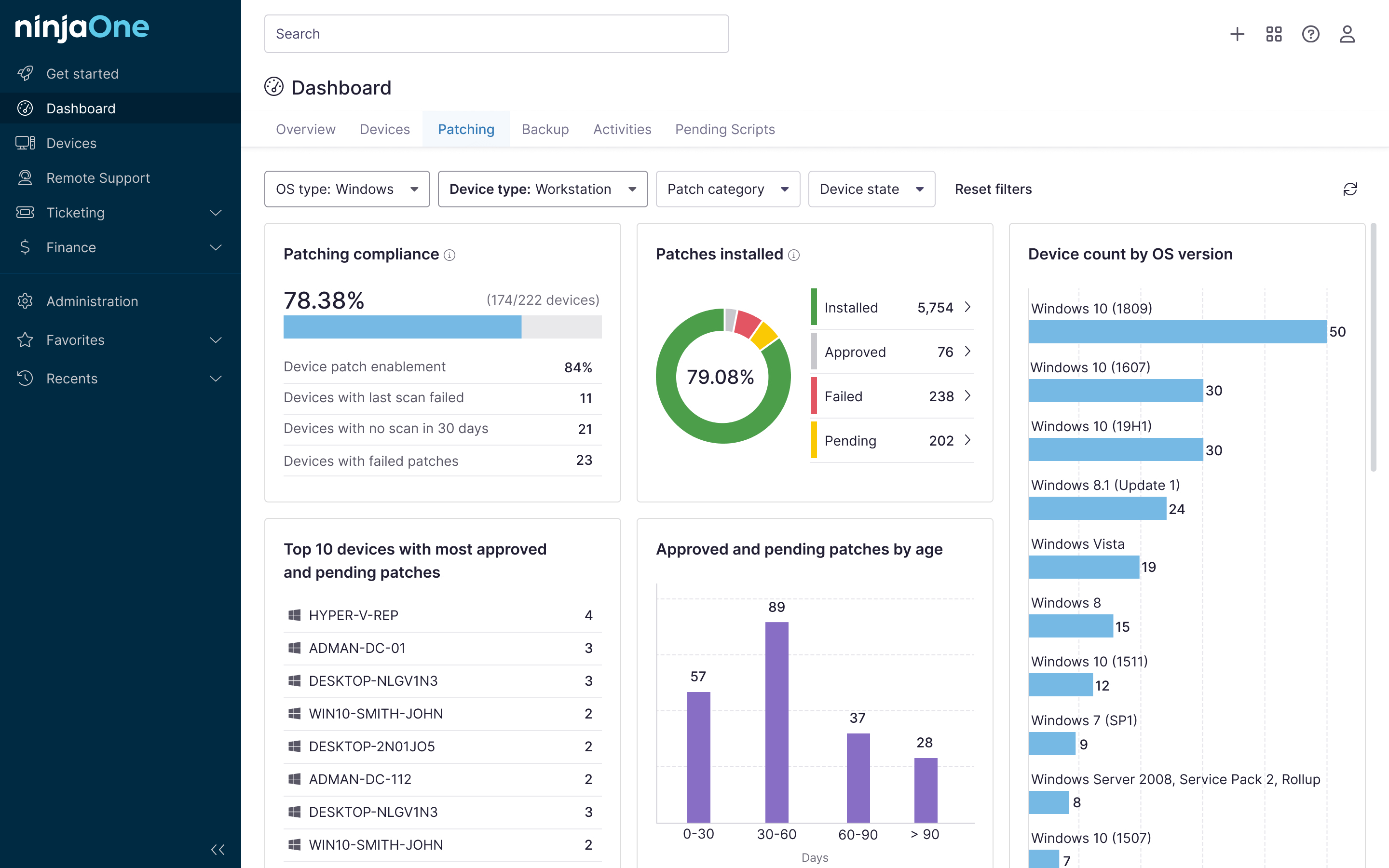

The types of cyber threats your organization might encounter are diverse and constantly evolving, but with the right approach, you can significantly reduce your risk of falling victim to them. It also helps to have an RMM and IT management solution, such as NinjaOne, that lets you set the foundation for a strong IT security posture. With automated patch management, secure backups, and complete visibility into your IT infrastructure, NinjaOne RMM helps you protect your business from the start.

In addition, NinjaOne Backup not only ensures fast and reliable data backup and recovery in the event of a data poisoning attack but also provides file- and folder-level endpoint protection, cross-platform support (Windows and macOS), and flexible storage options, whether your IT network is on-premises, cloud, or hybrid.

Get a glimpse of NinjaOne’s backup solution in action by watching a free demo or start your 14-day free trial of the software today.