Key Points

- Data anonymization alters or removes identifiers so that re-identification is no longer reasonably possible, while pseudonymization allows controlled reversal for operational use.

- Effective data de-identification begins with classifying datasets, identifying direct and quasi-identifiers, and aligning privacy techniques to business needs.

- Apply privacy models such as k-anonymity, using techniques like generalization and suppression, to reduce linkability; perform ongoing utility checks to maintain analytical accuracy.

- Secure cryptographic keys, transformation pipelines, and documentation to prevent unauthorized re-identification and ensure audit-ready compliance.

- Use automation to scan datasets, assign privacy profiles, validate de-identification outputs, and generate evidentiary artifacts for review.

- NinjaOne’s policy automation, documentation, monitoring, and data protection tools support scalable, compliant de-identification operations.

Protecting customer data privacy and earning trust takes more than hiding personally identifiable information (PII). Quasi-identifiers, such as birthdate, occupation, or medical diagnosis, when combined, can still reveal a customer’s identity.

This guide explains the difference between data anonymization and pseudonymization, highlighting how each protects privacy, preserves data usability, and supports compliance.

Steps for an effective data de-identification strategy

This section outlines strategies to achieve data anonymization, where re-identification is no longer reasonably possible, or pseudonymization, where identifiers are replaced but can be restored under strict control.

Understanding their differences enables you to select the appropriate level of customer data protection that matches your needs without compromising usability.

📌Prerequisites:

- Data inventory showing sensitive fields, quasi-identifiers, and intended use cases

- Defined success criteria for reports, metrics, model features, and privacy thresholds

- A secure workspace for transformations and an evidence repository for decisions and test results

Step #1: Decide if anonymization or pseudonymization fits your needs

Executing a data protection plan without understanding the structure and purpose of your dataset can potentially lead to over-sanitized or non-functional data. Classifying your dataset reveals the existing information, associated risk levels, and the importance of each field to the dataset’s function.

Recommended action plan:

- List your data elements: Inventory your dataset, then separate direct identifiers (e.g., name, email), quasi-identifiers (e.g., ZIP code, age), and sensitive attributes (e.g., health condition, income).

- Define the purpose: Provide the rationale behind the de-identification process to determine how reversible your method can be. Choose between:

  - Data anonymization: Suitable if re-identification isn’t required for the dataset.

  - Pseudonymization: Better if re-linking under strict controls is necessary (e.g., customer lookups, support logs).

- Identify users and tasks: Specify who has access to the dataset and their intended goal, such as trend analysis or auditing. This ensures that you don’t accidentally remove critical data signals on which these tasks depend.
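The classification above can be captured as a small, reviewable inventory. The following is a minimal sketch rather than a prescribed format: the field names, the `FieldPlan` structure, and the `choose_method` helper are all hypothetical and only illustrate how direct identifiers, quasi-identifiers, and sensitive attributes might be recorded alongside the tasks that depend on them.

```python
from dataclasses import dataclass
from enum import Enum


class FieldClass(Enum):
    DIRECT = "direct identifier"
    QUASI = "quasi-identifier"
    SENSITIVE = "sensitive attribute"
    OTHER = "non-identifying"


@dataclass(frozen=True)
class FieldPlan:
    name: str
    field_class: FieldClass
    needed_for: tuple  # tasks that depend on this field


# Hypothetical inventory for a customer-support dataset.
INVENTORY = [
    FieldPlan("email", FieldClass.DIRECT, ("customer lookup",)),
    FieldPlan("zip_code", FieldClass.QUASI, ("regional trend analysis",)),
    FieldPlan("age", FieldClass.QUASI, ("trend analysis",)),
    FieldPlan("health_condition", FieldClass.SENSITIVE, ()),
    FieldPlan("ticket_count", FieldClass.OTHER, ("trend analysis",)),
]


def choose_method(relink_required: bool) -> str:
    """Pick the overall approach from the stated purpose of the dataset."""
    return "pseudonymization" if relink_required else "anonymization"


def fields_by_class(inventory, field_class):
    """Return the names of all fields in a given class."""
    return [f.name for f in inventory if f.field_class is field_class]


print(choose_method(relink_required=True))
print(fields_by_class(INVENTORY, FieldClass.QUASI))
```

Recording `needed_for` next to each field makes it easier to spot, before any transformation runs, which signals a task would lose if that field were removed or coarsened.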

Step #2: Build a workflow to match your data de-identification method

Crafting a workflow that aligns with your chosen security technique right-sizes your data protection methods to risks and operational needs. This prevents excessive data loss or privacy gaps, as strong methods can cause over-anonymization, while weaker ones can pose re-identification risks.

Recommended action plan:

- Align technique to use case: Strike a balance between privacy and utility.

  - For high-privacy datasets, apply data anonymization techniques, such as generalization, suppression of high-risk values, or adding noise to reduce re-identification risk.

  - For internal datasets, apply pseudonymization. Consider using tokenization, deterministic keyed hashing (so the same input always maps to the same pseudonym), or masking for high-risk fields, such as email addresses or account IDs.

- Balance utility and privacy: Every method reduces risk at the cost of data accuracy or detail. Note acceptable tradeoff levels; for instance, you can truncate ZIP codes to 3 digits, reducing precision but improving customer anonymity.

- Standardize de-identification procedures: Use consistent rules and parameters when transforming data to maintain reliability and streamline reporting.
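As an illustration of the techniques named above, here is a minimal sketch using only the Python standard library. It assumes HMAC-SHA256 as the keyed hash for pseudonyms, which is one common choice and not something this guide mandates; the function names and the masking format are hypothetical.

```python
import hashlib
import hmac


def pseudonymize(value: str, key: bytes) -> str:
    """Deterministic keyed hash: the same input and key always give the same pseudonym."""
    normalized = value.strip().lower().encode("utf-8")
    return hmac.new(key, normalized, hashlib.sha256).hexdigest()


def truncate_zip(zip_code: str, keep: int = 3) -> str:
    """Keep the first `keep` characters and mask the rest."""
    return zip_code[:keep] + "*" * max(len(zip_code) - keep, 0)


def mask_email(email: str) -> str:
    """Keep the first character of the local part and the domain."""
    local, _, domain = email.partition("@")
    return f"{local[:1]}***@{domain}" if domain else "***"


# Assumption: in practice the key is loaded from a secrets store, never hard-coded.
key = b"example-key-for-illustration-only"
print(pseudonymize("Jane.Doe@example.com", key) == pseudonymize("jane.doe@example.com", key))
print(truncate_zip("90210"))
print(mask_email("jane.doe@example.com"))
```

Because the pseudonym depends on the key, records can still be joined across tables that were processed with the same key, while anyone without the key cannot recompute the mapping from guessed inputs.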

Step #3: Apply privacy models on anonymized or pseudonymized data

Even without direct identifiers, individuals can still be identified by linking existing quasi-identifiers across multiple datasets. Applying privacy models gives you a structured, measurable way to minimize the possibility of re-identification.

Recommended action plan:

- Generalize quasi-identifiers: Use k-anonymity as the target, meaning every combination of quasi-identifier values is shared by at least k records. For instance, grouping ages into 5-year ranges or merging ZIP codes into broader regions can reduce the risk of re-identification.

- Handle outliers and small groups: Identify and suppress rare values, such as unique job titles or specific locations, that can make individuals stand out. If suppression causes excessive data loss, consider coarsening values by changing specific titles, such as “cardiologist” to “healthcare professional.”

- Record threshold and impact: Capture the privacy models used to anonymize or pseudonymize data, then quantify how much data is affected by suppression or generalization. This leaves an audit trail that allows you to clearly explain any residual risk to clients.
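The generalize, suppress, and record steps above can be sketched in a few lines. This is a simplified illustration under stated assumptions: the sample rows, the field names, and the `enforce_k` helper are hypothetical, and suppression here simply drops every record whose quasi-identifier combination appears fewer than k times.

```python
from collections import Counter


def age_band(age: int, width: int = 5) -> str:
    """Generalize an exact age to a range such as '30-34'."""
    low = (age // width) * width
    return f"{low}-{low + width - 1}"


def generalize(record: dict) -> dict:
    """Coarsen the quasi-identifiers; other fields are left untouched."""
    out = dict(record)
    out["age"] = age_band(record["age"])
    out["zip_code"] = record["zip_code"][:3]
    return out


def enforce_k(records: list, quasi_identifiers: tuple, k: int):
    """Suppress records whose quasi-identifier combination occurs fewer than k times.

    Returns the kept records and the share of records that were suppressed.
    """
    def group_key(record):
        return tuple(record[q] for q in quasi_identifiers)

    counts = Counter(group_key(r) for r in records)
    kept = [r for r in records if counts[group_key(r)] >= k]
    suppressed = 1 - len(kept) / len(records) if records else 0.0
    return kept, suppressed


# Hypothetical sample rows.
rows = [
    {"age": 31, "zip_code": "90210", "plan": "basic"},
    {"age": 33, "zip_code": "90211", "plan": "pro"},
    {"age": 34, "zip_code": "90212", "plan": "basic"},
    {"age": 58, "zip_code": "10001", "plan": "pro"},
]
generalized = [generalize(r) for r in rows]
kept, suppressed_share = enforce_k(generalized, ("age", "zip_code"), k=2)
print(len(kept), suppressed_share)  # 3 0.25
```

The returned suppression share is the kind of figure worth recording alongside the chosen k, since it quantifies how much data the privacy threshold cost.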

Step #4: Preserve utility after data anonymization or pseudonymization

Effective customer data protection shouldn’t only anonymize or pseudonymize a dataset; it should also preserve the data’s intended purpose. For that reason, it’s important to verify that your chosen de-identification strategy maintains data privacy without breaking utility.

Recommended action plan:

- Run key reports and comparisons: Verify if key metrics remain consistent after transformation. For instance, if the average customer age shifts slightly (from 38.4 to 37.9), usability is preserved. However, large changes in data averages or missing regions can indicate over-anonymization.

- Adjust your de-identification strategy: If important metrics or relationships break across datasets, adjust your technique parameters. Consider using a finer generalization, a smaller k-value, or a lighter masking rule to balance risk reduction with data accuracy.
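A utility check of this kind can be as simple as comparing a metric before and after transformation against an agreed tolerance. The sketch below is illustrative only: the 5% tolerance, the sample values, and the helper names are assumptions, and real checks would cover whichever reports and metrics your success criteria name.

```python
from statistics import mean


def relative_shift(original, transformed) -> float:
    """Relative change in the mean after transformation."""
    base = mean(original)
    if base == 0:
        raise ValueError("relative shift is undefined when the original mean is 0")
    return abs(mean(transformed) - base) / abs(base)


def utility_ok(original, transformed, tolerance: float = 0.05) -> bool:
    """True when the mean moved by no more than `tolerance` (5% by default)."""
    return relative_shift(original, transformed) <= tolerance


def missing_categories(original, transformed) -> set:
    """Categories (for example, regions) that vanished after suppression."""
    return set(original) - set(transformed)


# Hypothetical values: exact ages replaced by the midpoints of 5-year bands.
ages_before = [31, 33, 34, 58]
ages_after = [32, 32, 32, 57]
print(utility_ok(ages_before, ages_after))
print(missing_categories(["West", "East", "North"], ["West", "East"]))
```

A failed check or a non-empty set of missing categories is a signal to revisit the technique parameters before the transformed dataset is released.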

Step #5: Secure keys and verify anonymization immutability

Even after successfully implementing a specific de-identification strategy, attackers can still exploit pipeline vulnerabilities or exposed encryption keys. Without a hardened pipeline and a robust cryptographic key protection strategy, you risk the re-identification of customer records.

Recommended action plan:

- Separate keys and lookup tables: If using tokenization or reversible masking, store keys or lookup tables in different access-restricted locations separate from the main dataset. This prevents anyone with access to one system from easily reversing your de-identification strategy.

- Apply strict access controls: Limit who can de-tokenize or re-identify datasets, and for what purpose. Log every instance of de-tokenization or re-identification, including who did the job, the completion date, and why it occurred.

- Clearly document irreversible methods: If you’re using methods intended to be irreversible, such as noise addition or one-way hashing, document why re-identification is not reasonably feasible. Keep in mind that plain hashes of predictable values, such as email addresses or phone numbers, can be reversed by hashing candidate values and comparing the results. Additionally, capture the steps taken to prevent linkage with external data sources to show how residual risk was addressed.
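To make the separation and logging points concrete, here is a minimal in-memory sketch of a token vault. It is not a production design: the `TokenVault` class, the user names, and the log fields are hypothetical, and a real deployment would keep the lookup table in a separate, access-restricted store instead of process memory.

```python
import secrets
from datetime import datetime, timezone


class TokenVault:
    """Lookup table kept apart from the de-identified dataset (in-memory here)."""

    def __init__(self, authorized_users):
        self._token_by_value = {}
        self._value_by_token = {}
        self._authorized = set(authorized_users)
        self.audit_log = []

    def tokenize(self, value: str) -> str:
        """Return a random token for the value, reusing it on repeat calls."""
        if value not in self._token_by_value:
            token = secrets.token_hex(8)
            self._token_by_value[value] = token
            self._value_by_token[token] = value
        return self._token_by_value[value]

    def detokenize(self, token: str, user: str, reason: str) -> str:
        """Reverse a token for authorized users only; every attempt is logged."""
        allowed = user in self._authorized
        self.audit_log.append({
            "user": user,
            "reason": reason,
            "token": token,
            "allowed": allowed,
            "timestamp": datetime.now(timezone.utc).isoformat(),
        })
        if not allowed:
            raise PermissionError(f"{user} may not de-tokenize records")
        return self._value_by_token[token]


vault = TokenVault(authorized_users={"privacy-officer"})
token = vault.tokenize("jane.doe@example.com")
print(vault.detokenize(token, "privacy-officer", "support escalation"))
```

Logging the attempt before the permission check means denied requests also leave a trace, which is useful when reviewing who tried to re-identify records and why.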

Step #6: Deliver evidence packets to clients and conduct regular reviews

An evidence packet captures your decisions, parameters, and test results so that auditors and teams can easily understand and repeat your de-identification process. Reviews ensure that your privacy controls remain aligned with client needs, preventing your strategy from drifting out of compliance.

Recommended action plan:

- Build a compact evidence packet: Keep evidence packets concise, proving due diligence to readers without overwhelming them. Include a description of the original dataset, fields transformed, technique parameters, residual risk summary, test results, and next review date.

- Store in a secure repository: Save your evidence packet in a location accessible only to authorized personnel responsible for privacy or compliance.

- Set a review cadence: Revisit your evidence packet quarterly or whenever changes are made to the dataset, such as the addition of new columns or users. During each review, confirm if your de-identification strategy still meets privacy and usage expectations.

- Improve your strategy: Update your evidence packet after every review cycle. This turns it into a living record of lessons learned, new tools, or privacy requirements, so it matures as your client’s needs expand.
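One way to keep evidence packets compact and consistent is to generate them from a fixed structure. The sketch below is an assumption-laden example: the `EvidencePacket` fields mirror the items listed above, the 90-day interval approximates a quarterly cadence, and the dataset name and values are hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date, timedelta


@dataclass
class EvidencePacket:
    dataset: str               # description of the source dataset, not the data itself
    fields_transformed: dict   # field name -> technique applied
    parameters: dict
    residual_risk: str
    test_results: dict
    reviewed_on: date
    review_interval_days: int = 90  # assumption: roughly quarterly

    @property
    def next_review(self) -> date:
        return self.reviewed_on + timedelta(days=self.review_interval_days)

    def to_json(self) -> str:
        """Serialize the packet, including the computed next review date."""
        payload = asdict(self)
        payload["reviewed_on"] = self.reviewed_on.isoformat()
        payload["next_review"] = self.next_review.isoformat()
        return json.dumps(payload, indent=2, sort_keys=True)


packet = EvidencePacket(
    dataset="support_tickets export (hypothetical)",
    fields_transformed={"email": "keyed hash", "age": "5-year bands", "zip_code": "3-digit prefix"},
    parameters={"k": 2},
    residual_risk="25% of records suppressed; no group smaller than k remains",
    test_results={"mean_age_shift_within_tolerance": True},
    reviewed_on=date(2025, 1, 1),
)
print(packet.to_json())
```

Because the output is plain JSON, each review cycle can write a new version to the secure repository and the history of changes stays easy to compare.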

Data de-identification automation touchpoint example

Automation reduces the manual work involved in anonymizing or pseudonymizing data, saving technician time while standardizing the process.

Sample automations you can leverage to support your de-identification strategy:

- Create an automated job that scans new or incoming datasets before processing.

- Detect and flag direct and quasi-identifiers that could enable re-identification (e.g., names, IDs, ZIP codes, or dates).

- Apply a specific privacy profile to datasets based on their respective privacy classifications.

- Deploy a small set of utility checks to confirm if transformed data still serves its intended purpose.

- Generate a one-page summary showing applied privacy parameters, any data changes, and notes on residual risk.

- Automatically export summaries to your evidence repository for audit tracking and reviews.
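The scan-and-assign steps in this list might look like the following sketch. It is a heuristic illustration only: the regular expressions, the 80% match threshold, and the profile names are all assumptions, and pattern matching of this kind will miss identifiers that do not follow a recognizable format (such as free-text names).

```python
import re

# Hypothetical value patterns; real scanners typically use broader rule sets.
PATTERNS = {
    "email": re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$"),
    "zip_code": re.compile(r"^\d{5}(-\d{4})?$"),
    "date": re.compile(r"^\d{4}-\d{2}-\d{2}$"),
}
DIRECT_LABELS = {"email"}


def scan_columns(rows, min_share: float = 0.8) -> dict:
    """Flag columns where at least `min_share` of non-empty values match a pattern."""
    flags = {}
    columns = rows[0].keys() if rows else []
    for column in columns:
        values = [str(r.get(column)) for r in rows if r.get(column) not in (None, "")]
        if not values:
            continue
        for label, pattern in PATTERNS.items():
            matches = sum(bool(pattern.match(v)) for v in values)
            if matches / len(values) >= min_share:
                flags[column] = label
                break
    return flags


def assign_profile(flags: dict) -> str:
    """Map scan results to a (hypothetical) privacy profile name."""
    if any(label in DIRECT_LABELS for label in flags.values()):
        return "pseudonymize-direct-identifiers"
    if flags:
        return "generalize-quasi-identifiers"
    return "no-transformation"


incoming = [
    {"contact": "a@example.com", "postal": "90210", "signup": "2024-01-05", "tickets": 3},
    {"contact": "b@example.org", "postal": "10001", "signup": "2023-11-30", "tickets": 12},
]
flags = scan_columns(incoming)
print(flags)
print(assign_profile(flags))
```

Matching on values instead of column names helps catch identifiers stored under unexpected headers, though flagged columns should still be confirmed by a person before a profile is applied.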

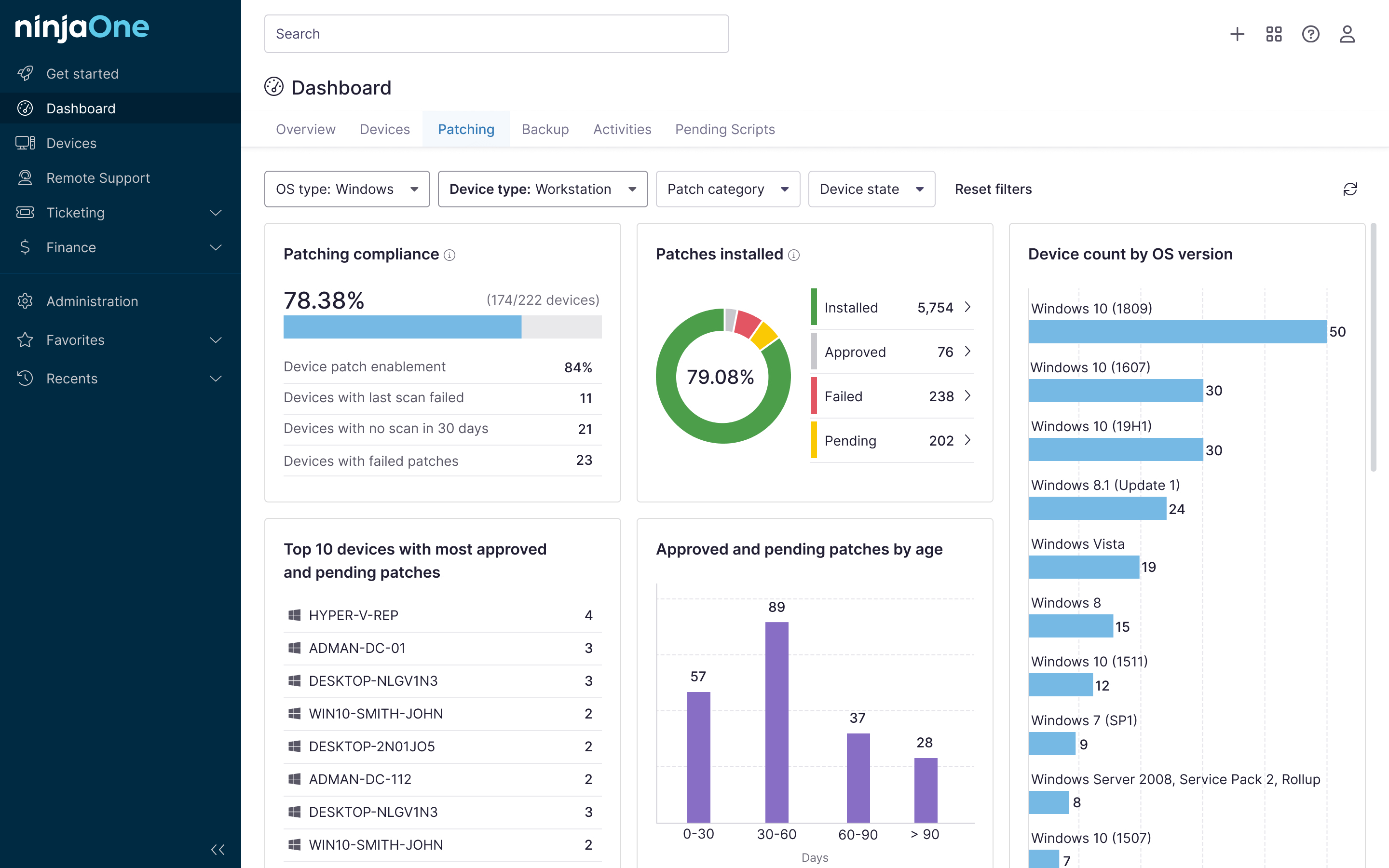

NinjaOne services for data anonymization or pseudonymization

By combining NinjaOne’s documentation, alerting, policy-driven automation, and quick recovery capabilities, you can maintain consistent privacy controls that align with client needs.

- Policy automation: Leverage NinjaOne’s policy-based automation to streamline threshold updates and utility test executions when data schemas or functions change.

- Custom scripting: Deploy custom scripts that orchestrate anonymization and pseudonymization workflows, validate data utility metrics, and flag potential re-identification risks consistently across environments.

- Documentation tool: Store transformation runbooks, profiles, and evidence packets within a centralized repository, allowing easy access and streamlined knowledge transfer.

- Data protection software: Create backups of your original datasets for easy rollback in case transformed data becomes oversanitized or too disparate from its intended function.

- Remote monitoring and alerting: Create custom alerts to automate review reminders, ensuring that transformed datasets are regularly checked within your specified review cadence.

Protect customer data using de-identification strategies

Choosing the right de-identification strategy helps you maintain customer privacy while preserving data utility. By classifying data, applying the right privacy model, and addressing quasi-identifiers, teams can right-size the amount of protection needed to preserve data function.

Consistent validation and secure documentation ensure privacy measures don’t weaken client security or compliance posture. Leverage NinjaOne to reduce manual technician work and standardize de-identification workflows so they are repeatable at scale.
